Sustainable.ai, Parallelformers, Model Optimization

Some Tricks for Large Scale Distributed Training

An excellent thread about Transformers:

If you are into recommender systems, you should read this thread as well:

Leave a comment if you like the Twitter threads that I have been sharing in the last couple of newsletters!

Articles

Gradient published a post on sustainable AI. The post points out the memory and compute costs of ever-larger models and suggests the following routes to reduce the problem and make it more visible:

Instrument the system so that you can collect signals around compute and memory consumption

This would increase the visibility of these metrics in the system and make them part of how we evaluate the system.

Elevate Smaller Models

Model optimization techniques (quantization, pruning, distillation) can be applied to make models smaller in both memory and compute.

Alternate Deployment Strategies

Use specialized hardware optimized for deep learning workloads. Some of the computation can run on specialized ASICs such as TPUs, which are optimized for certain operations and are more efficient for them than traditional CPUs.
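To make the instrumentation route concrete, here is a minimal sketch in Python that is not taken from the post. The `track_resources` helper and the metric names are hypothetical, and `tracemalloc` only sees Python heap allocations, so a real system would also need framework- or device-level counters.

```python
import time
import tracemalloc
from contextlib import contextmanager


@contextmanager
def track_resources(label, log):
    """Record wall-clock time and peak Python heap usage of the wrapped block.

    Hypothetical helper for illustration; it does not see GPU memory.
    """
    tracemalloc.start()
    start = time.perf_counter()
    try:
        yield
    finally:
        elapsed = time.perf_counter() - start
        _, peak = tracemalloc.get_traced_memory()
        tracemalloc.stop()
        log.append({"label": label, "seconds": elapsed, "peak_bytes": peak})


metrics = []
with track_resources("toy_inference", metrics):
    # Stand-in for a model call: allocate a list of "activations".
    activations = [x * 0.5 for x in range(100_000)]

print(metrics[0])
```

Once numbers like these are logged next to accuracy, they can be reviewed as part of the same evaluation.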

Amazon published a post on the last-mile delivery problem of determining exactly where to deliver a package. They adapt learning-to-rank to geospatial data in order to pick the delivery location out of many possible candidates.

Learning to rank is a way to learn from pairwise preference data in information retrieval. If a search engine presents a ranked list of results, clicking only the third search result implicitly provides two pairwise preferences: the user prefers the third search result to the first result, and the user also prefers the third result to the second result. That provides two labeled preference pairs that can help train a ranking model to improve future search results for other queries.

Similar to information retrieval, if a user prefers one location over the other candidate locations, those preferences can be used to train a model that picks the right location among the candidates.
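The click-to-preference step described above can be sketched in a few lines of Python. The function name and the item labels are made up for illustration, and this is not the model from the Amazon post; it only shows how one click turns into labeled pairs.

```python
def preference_pairs(ranked_items, clicked_index):
    """Return (preferred, rejected) pairs implied by a single click.

    The clicked item is assumed to be preferred over every item that was
    ranked above it and skipped.
    """
    clicked = ranked_items[clicked_index]
    return [(clicked, skipped) for skipped in ranked_items[:clicked_index]]


# Clicking only the third result yields two preference pairs.
results = ["result_1", "result_2", "result_3", "result_4"]
pairs = preference_pairs(results, clicked_index=2)
print(pairs)  # [('result_3', 'result_1'), ('result_3', 'result_2')]
```

For delivery, the ranked items would be candidate locations and the "click" would be the location that was actually used.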

TensorFlow has a blog post on model optimization techniques written by the Arm team.

What is interesting in this post is that it applies multiple optimization techniques together and looks at which methods to combine, and in what order, to get even further efficiency.

Google wrote a blog post on federated learning and open-sourced the FedJAX library, which allows engineers and researchers to write their federated learning algorithms and simulations in JAX.

Tunib wrote a blog post explaining the options they explored to accomplish model parallelization. They have built Parallelformers, a library that parallelizes a number of HuggingFace models across GPUs. A very simple tutorial is outlined here.

TLDR:

They have built model parallelization on GPU through Tensor replacement and lazy tensor initiation.

They have built processes that let you deploy the parallelized models to web servers.

Spotify published a blog post announcing their new library Pedalboard. The library lets you apply audio effects to audio programmatically from Python.

Lilian Weng wrote a blog post on how to train large neural networks. It covers the different ways to parallelize training across devices, along with performance and model optimization techniques:

Data Parallelism: How to split the data across workers that each hold a copy of the model

Model Parallelism: How to split the model into parts placed on different workers so that the computation is spread across them

Pipeline Parallelism: How to combine data and model parallelism, by splitting each mini-batch into micro-batches, so that the resulting pipeline keeps workers busy and becomes more efficient.

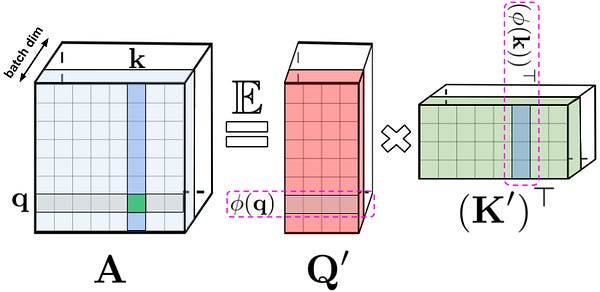

Tensor Parallelism: How to split individual tensor operations (such as large matrix multiplications) across devices so that applying these operations becomes more efficient.

Mixture-of-Experts (MoE): The idea comes from ensembling, where a number of weak learners are combined into a strong learner. Within a single network, a gating mechanism decides which experts to activate for a given input, so only a subset of the model runs per input, and the experts can be placed on different devices and run in parallel.

Other Memory Saving Designs: A combination of performance and model optimization techniques
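As an illustration of the first item, data parallelism, here is a toy sketch that is not code from the post. It assumes a one-parameter model `y = w * x`, two equal-sized shards, and a plain Python average standing in for the all-reduce step a real framework would perform.

```python
def gradient(w, shard):
    """Gradient of mean squared error for y = w * x over one data shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def data_parallel_step(w, shards, lr):
    # Each "worker" holds a copy of w and computes a gradient on its own shard.
    local_grads = [gradient(w, shard) for shard in shards]
    # All-reduce stand-in: average the local gradients.
    # With equal-sized shards this equals the full-batch gradient.
    avg_grad = sum(local_grads) / len(local_grads)
    return w - lr * avg_grad


data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]  # generated by y = 2x
shards = [data[:2], data[2:]]  # one shard per worker

w = 0.0
for _ in range(200):
    w = data_parallel_step(w, shards, lr=0.01)

print(round(w, 4))
```

Every worker applies the same averaged update, so the model copies stay in sync after each step.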

Libraries

AutoTabular is a library for building AutoML pipelines for tabular data. It is backed by the RAPIDS library, which allows tight integration with GPUs, and you can choose either PyTorch or TensorFlow as the deep learning framework.

OpenPrompt is a library for adapting pre-trained language models to a task by modifying the input text with a textual template (prompt). It has 2 main concepts:

Template: this wraps the input with a textual or soft-encoded sequence.

Verbalizer: this projects the original labels to a set of label words.
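The two concepts can be sketched in plain Python. This is deliberately not the OpenPrompt API: the function names, the template string, the label words and the scores are all hypothetical, and the scores stand in for what a language model would predict at the masked position.

```python
def apply_template(text, template="{text} It was {mask}."):
    """Template: wrap the raw input text with a textual prompt."""
    return template.format(text=text, mask="[MASK]")


# Verbalizer: each original label is mapped to a set of label words.
VERBALIZER = {
    "positive": ["great", "good"],
    "negative": ["terrible", "bad"],
}


def verbalize(word_scores, verbalizer=VERBALIZER):
    """Turn scores over label words back into one of the original labels."""
    class_scores = {
        label: sum(word_scores.get(word, 0.0) for word in words) / len(words)
        for label, words in verbalizer.items()
    }
    return max(class_scores, key=class_scores.get)


prompt = apply_template("The movie kept me on the edge of my seat.")
# Made-up scores standing in for a language model's output at [MASK].
fake_scores = {"great": 0.6, "good": 0.2, "terrible": 0.05, "bad": 0.05}
label = verbalize(fake_scores)

print(prompt)
print(label)
```

The classification task is thereby recast as filling in a blank, which is the kind of task the pre-trained model was trained on.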

Poeem is a library for efficient approximate nearest neighbor (ANN) search that is similar to FAISS. The difference is that Poeem learns the embedding index jointly with the retrieval model in order to avoid quantization distortion.

d3m is a project from NYU rather than a library, but I want to highlight it in this section nonetheless: it is composed of many libraries and tackles the highly ambitious goal of AutoML, aiming to create end-to-end ML pipelines.
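To show what "quantization distortion" means here, the sketch below measures how far embeddings move when each one is replaced by its nearest codebook entry. It is not Poeem's method or API; the vectors and codebooks are invented, and it only illustrates the error that an index built separately from the model can introduce.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(vec, codebook):
    """Replace a vector with its nearest codebook entry."""
    return min(codebook, key=lambda centroid: sq_dist(vec, centroid))


def distortion(vectors, codebook):
    """Mean squared error between vectors and their quantized versions."""
    return sum(sq_dist(v, quantize(v, codebook)) for v in vectors) / len(vectors)


embeddings = [(0.0, 0.0), (0.2, 0.0), (1.0, 1.0), (1.0, 0.8)]
coarse_codebook = [(0.5, 0.5)]            # one centroid for everything
fine_codebook = [(0.1, 0.0), (1.0, 0.9)]  # centroids that fit the data

coarse_error = distortion(embeddings, coarse_codebook)
fine_error = distortion(embeddings, fine_codebook)
print(coarse_error, fine_error)
```

A codebook that fits the embeddings poorly distorts distances and therefore hurts retrieval quality.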

AlphaD3M is an AutoML system that automatically searches for models and derives end-to-end pipelines that read, pre-process the data, and train the model. AlphaD3M leverages recent advances in deep reinforcement learning and is able to adapt to different application domains and problems through incremental learning.

D3M-interface: Data scientists can interact with AlphaD3M through d3m-interface. d3m-interface is a Python library which enables data scientists to use D3M AutoML systems. It contains an implementation to integrate D3M AutoML systems with Jupyter Notebooks. It provides a familiar interface to make it easier for people to adopt D3M tools.

PipelineProfiler is an interactive visualization tool that allows the exploration and comparison of the solution space of ML pipelines produced by AutoML systems. PipelineProfiler is integrated with Jupyter Notebook and can be used together with common data science tools to enable a rich set of analyses of the ML pipelines.

Visus is a system designed to support the model building process and curation of ML data processing pipelines generated by AutoML systems. Visus also integrates visual analytics techniques and allows users to perform interactive data augmentation and visual model selection.

Videos

Explainable Vision is a workshop that has a series of lectures that talk about explainability in computer vision. Some of the videos also go into detail about bias and how to build “trustworthy models” like this one.