Articles

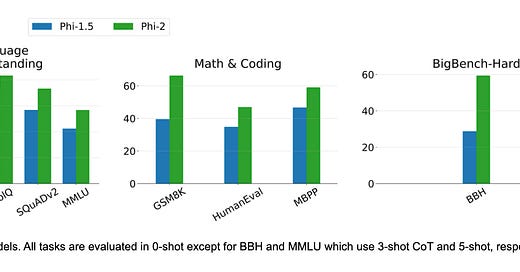

Microsoft Research introduces Phi-2, a surprisingly powerful small language model (SLM) with only 2.7 billion parameters. Despite its size, Phi-2 surpasses much larger models on various benchmarks, highlighting the potential of SLMs.

Key Differences:

Superior Performance: Phi-2 outperforms larger models (7B and 13B parameters) on language reasoning and coding tasks, even without alignment or fine-tuning.

Efficiency: Its smaller size requires fewer computational resources, making it more accessible and practical for diverse applications.

Customizability: SLMs like Phi-2 are easier to fine-tune for specific domains, potentially leading to higher performance and accuracy.

Training Details: Phi-2 was trained on 1.4T tokens of synthetic and web data with a next-word prediction objective and a 2048-token context length.

Responsible Development: Phi-2 exhibits better behavior regarding toxicity and bias than some larger open-source models.

It opens up the following possibilities:

Shifting Paradigm: Phi-2 challenges the notion that bigger language models are always better, showcasing the potential of efficient and focused SLMs.

Increased Democratization: Smaller models like Phi-2 reduce barriers to entry, allowing more developers and researchers to explore the power of large language models.

Domain-Specific Applications: Tailoring SLMs to specific domains could lead to significant advancements in various fields.

Responsible AI Development: Phi-2 highlights the importance of considering responsible development practices when building large language models.

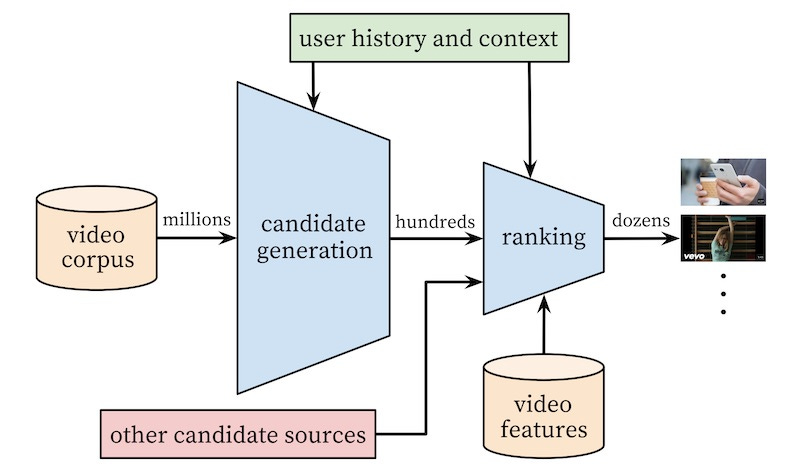

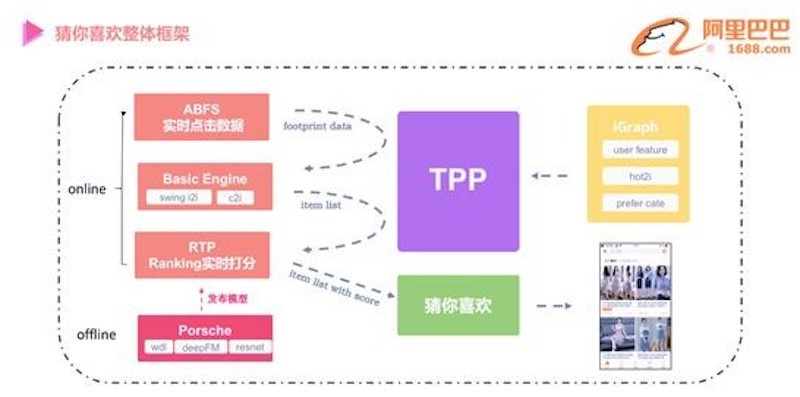

For recommender system architects and engineers, static batch-based recommendations feel increasingly archaic. As frozen snapshots of historical preferences, they fail to capture the real-time nuances of user behavior. Enter real-time recommendations, powered by machine learning and poised to revolutionize user engagement. Eugene Yan wrote a comprehensive article on the subject, mainly from a systems and components point of view.

We've all encountered the frustration of browsing an e-commerce store bombarded with generic product suggestions or newsfeeds devoid of context. These limitations stem from traditional batch-based recommendations, which rely on historical data that might not reflect a user's current intent or evolving preferences. Real-time recommendations address these issues and enable the following capabilities:

Microsecond-Level Personalization: Imagine recommendations that morph with every click, scroll, or search query. Think of a recommendation system that analyzes a user's viewing history, their current search for "best sci-fi movies," and real-time streaming data on popular titles, then almost instantly suggests a space opera series. This dynamic adaptation fosters a sense of serendipity and relevance, keeping users engaged and coming back for more. Consider these technologies:

RecSys frameworks: Frameworks like Apache Spark MLlib and TensorFlow Recommenders provide pre-built components and optimization techniques for scalable real-time recommender systems.

Streaming data platforms: Apache Kafka and Apache Flink enable real-time ingestion and processing of user actions, clickstream data, and product catalogs, feeding fresh data to the models.

Engagement Amplification: Picture newsfeeds that update with articles relevant to the piece you just read, or music streaming platforms suggesting songs based on your current mood and listening history. Real-time recommendations create a frictionless journey of discovery, leveraging machine learning models trained on user behavior and content features to curate personalized experiences in real time. This can translate into longer session durations, more page views, and deeper user engagement. Consider these technologies:

Content-based filtering techniques: Natural language processing (NLP) techniques such as word embeddings and topic modeling (e.g., with Gensim) can extract rich features from content, enabling personalized recommendations based on item attributes and user preferences.

Collaborative filtering algorithms: Explore advanced techniques like matrix factorization for implicit feedback (e.g., implicit ALS, also called weighted matrix factorization) to capture user preferences from interactions such as clicks and views, beyond explicit ratings.
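To make content-based filtering concrete, here is a minimal sketch. It uses plain bag-of-words term counts as item features, a deliberately simple stand-in for the word embeddings or topic-model vectors mentioned above, and it builds a user profile by summing the vectors of the items the user has consumed. The catalog and the helper names (`vectorize`, `build_profile`, `recommend`) are hypothetical.

```python
import math
from collections import Counter


def vectorize(text: str) -> Counter:
    """Bag-of-words term counts: a simple stand-in for embeddings or topic vectors."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items() if term in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_profile(history: list, catalog: dict) -> Counter:
    """User profile = sum of the feature vectors of the items the user consumed."""
    profile = Counter()
    for item_id in history:
        profile.update(vectorize(catalog[item_id]))
    return profile


def recommend(history: list, catalog: dict, k: int = 2) -> list:
    """Rank unseen items by similarity to the user profile."""
    profile = build_profile(history, catalog)
    candidates = [item for item in catalog if item not in history]
    candidates.sort(key=lambda item: cosine(profile, vectorize(catalog[item])), reverse=True)
    return candidates[:k]


catalog = {
    "a": "space opera starship battle",
    "b": "romantic comedy wedding",
    "c": "starship crew explores deep space",
    "d": "cooking show italian pasta",
}
print(recommend(["a"], catalog, k=1))  # ['c']
```

In a production system, `vectorize` would typically be replaced by precomputed embeddings, and the full candidate scan by an approximate nearest-neighbor lookup.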

Conversion: By presenting users with items they genuinely want in the moment, real-time personalization leads to increased click-through rates and higher conversion rates, boosting ROI and bottom-line metrics for businesses. Imagine a recommendation engine analyzing a user's past purchases and current browsing behavior, then suggesting complementary items with high conversion potential, ultimately leading to a successful purchase. Consider these technologies:

Hybrid recommender systems: Combine multiple recommendation techniques (e.g., item-based CF, user-based CF, content-based) using methods like weighted blending or matrix factorization fusion (e.g., YaMF) to create robust and accurate recommendations tailored to individual users in real time.

Bandit algorithms: Leverage techniques like the Epsilon-Greedy algorithm to balance exploring new items against exploiting successful recommendations, optimizing conversion rates through online learning as a complement to A/B testing.
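The epsilon-greedy idea fits in a few lines. The sketch below is a minimal illustration. It assumes binary rewards (1 for a conversion, 0 otherwise) and a simulated environment with made-up conversion rates. The `EpsilonGreedy` class is hypothetical and does not come from any library.

```python
import random


class EpsilonGreedy:
    """Epsilon-greedy bandit over a fixed set of candidate items ("arms")."""

    def __init__(self, arms, epsilon=0.1, seed=None):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.counts = {arm: 0 for arm in self.arms}    # times each arm was shown
        self.values = {arm: 0.0 for arm in self.arms}  # running mean reward per arm
        self.rng = random.Random(seed)

    def select(self):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)  # explore: random item
        return max(self.arms, key=lambda arm: self.values[arm])  # exploit: best so far

    def update(self, arm, reward):
        self.counts[arm] += 1
        # Incremental mean: avoids storing the full reward history.
        self.values[arm] += (reward - self.values[arm]) / self.counts[arm]


# Simulated traffic with hypothetical true conversion rates.
true_rates = {"item_a": 0.02, "item_b": 0.05, "item_c": 0.10}
env = random.Random(0)
bandit = EpsilonGreedy(true_rates, epsilon=0.1, seed=42)
for _ in range(20_000):
    arm = bandit.select()
    reward = 1.0 if env.random() < true_rates[arm] else 0.0
    bandit.update(arm, reward)
print(bandit.counts)
```

A fixed `epsilon` always spends the same share of traffic on exploration. Common alternatives are to decay it over time or to switch to Thompson sampling or UCB.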

Trend Spotter on Patrol: Real-time recommendations can identify emerging trends and user interests as they happen, allowing businesses to adapt their offerings and stay ahead of the curve. Picture a system analyzing social media data and user interactions, then recommending trending products or content before they explode in popularity, giving businesses a valuable edge in a competitive market. Consider these technologies:

Real-time trend analysis tools: Explore tools like Apache Spark Streaming and Apache Flink to analyze real-time user behavior and identify emerging trends with low latency.

Graph-based recommendation systems: Utilize graph-based techniques like Personalized PageRank to model relationships between users and items, uncovering hidden connections and trends in user behavior.
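Personalized PageRank can be computed with a short power iteration. This sketch assumes an undirected user-item interaction graph stored as an adjacency dict and a restart probability of 0.15. The graph, `personalized_pagerank`, and `recommend_items` are illustrative and do not come from a specific library.

```python
def personalized_pagerank(graph, source, alpha=0.15, iters=50):
    """Power iteration for Personalized PageRank.

    graph maps each node to its neighbors. At every step the random walk
    restarts at `source` with probability alpha, otherwise it moves to a
    uniformly chosen neighbor.
    """
    scores = {node: 0.0 for node in graph}
    scores[source] = 1.0
    for _ in range(iters):
        nxt = {node: 0.0 for node in graph}
        nxt[source] += alpha
        for node, neighbors in graph.items():
            if not neighbors:  # dangling node: send its mass back to the source
                nxt[source] += (1 - alpha) * scores[node]
                continue
            share = (1 - alpha) * scores[node] / len(neighbors)
            for neighbor in neighbors:
                nxt[neighbor] += share
        scores = nxt
    return scores


def recommend_items(graph, user, items, k=1):
    """Rank items the user has not interacted with by their PPR score."""
    scores = personalized_pagerank(graph, user)
    seen = set(graph[user])
    candidates = [item for item in items if item not in seen]
    return sorted(candidates, key=lambda item: scores[item], reverse=True)[:k]


# Undirected bipartite user-item graph (hypothetical interactions).
graph = {
    "u1": ["i1", "i2"], "u2": ["i2", "i3"], "u3": ["i4"],
    "i1": ["u1"], "i2": ["u1", "u2"], "i3": ["u2"], "i4": ["u3"],
}
items = ["i1", "i2", "i3", "i4"]
print(recommend_items(graph, "u1", items, k=1))  # ['i3']
```

Items reachable through short paths from the user accumulate probability mass. In this example, `i3` is liked by a user who shares `i2` with `u1`, so it ranks above `i4`, which is disconnected from `u1` and scores zero.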

Some Hybrid Recommendation Architectures

Weighted Blending: Combine the outputs of multiple recommenders (e.g., item-based CF, user-based CF, content-based) using learned weights based on historical performance or online learning techniques. This simple approach offers flexibility and interpretability.

Matrix Factorization Fusion: Decompose the user-item interaction matrices behind different recommendation techniques (e.g., item-based CF, user-based CF) into shared latent factors, capturing both user and item features in a unified representation. Because it leverages information shared across methods, this approach can improve accuracy.

Cascading Hybrids: Utilize a multi-stage approach where one recommender's output feeds into another. For example, use content-based filtering to generate candidate items, then apply user-based CF for final recommendations, leveraging both item attributes and user preferences. This approach can personalize recommendations based on specific content features.
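Weighted blending is the simplest of these architectures to sketch. The example below assumes each recommender returns a dict of item scores. It min-max normalizes each recommender's scores so they are comparable, then combines them with hand-picked weights. In practice the weights would be learned from historical or online performance, as described above. All names and scores here are hypothetical.

```python
def min_max(scores):
    """Rescale one recommender's scores to [0, 1] so different recommenders are comparable."""
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {item: 0.0 for item in scores}
    return {item: (s - lo) / (hi - lo) for item, s in scores.items()}


def blend(recommender_scores, weights, k=3):
    """Weighted sum of normalized scores; an item missing from a recommender gets 0 from it."""
    blended = {}
    for name, scores in recommender_scores.items():
        for item, s in min_max(scores).items():
            blended[item] = blended.get(item, 0.0) + weights[name] * s
    return sorted(blended, key=blended.get, reverse=True)[:k]


# Hypothetical outputs of two recommenders for one user.
item_cf = {"x": 0.9, "y": 0.5, "z": 0.1}
content = {"x": 0.2, "y": 0.8, "w": 0.6}
weights = {"item_cf": 0.7, "content": 0.3}
print(blend({"item_cf": item_cf, "content": content}, weights))  # ['x', 'y', 'w']
```

Normalizing first matters. Otherwise a recommender whose raw scores happen to have a larger range would dominate the blend regardless of its weight.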

Optimizing Your Real-Time Engine:

Online Learning: Continuously update models with new data using techniques like Stochastic Gradient Descent (SGD) or Adam, ensuring recommendations reflect the latest user behavior and trends.

Model Distillation: Train smaller, faster models from larger, more accurate ones, enabling low-latency recommendations with little loss in accuracy. Consider libraries like TensorFlow Model Garden for pre-trained models and distillation techniques.

Incremental Updates: Update models partially instead of retraining from scratch, reducing computational cost and maintaining responsiveness in real-time scenarios. Explore libraries like Faiss, whose indexes can accept new item vectors without a full rebuild, for item-based CF, and frameworks like TensorFlow Recommenders for user-based CF updates.
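Online learning and incremental updates can be illustrated together with a matrix factorization model that takes one SGD step per incoming interaction instead of retraining from scratch. This is a minimal sketch. It assumes explicit target scores, L2 regularization, and plain SGD rather than Adam. The `OnlineMF` class and its hyperparameters are hypothetical.

```python
import random


class OnlineMF:
    """Matrix factorization updated with one SGD step per incoming interaction."""

    def __init__(self, dim=8, lr=0.05, reg=0.01, seed=0):
        self.dim, self.lr, self.reg = dim, lr, reg
        self.rng = random.Random(seed)
        self.users, self.items = {}, {}  # id -> latent factor vector

    def _factors(self, table, key):
        # Unseen users/items get small random factors, so no full retrain is needed.
        if key not in table:
            table[key] = [self.rng.gauss(0.0, 0.1) for _ in range(self.dim)]
        return table[key]

    def predict(self, user, item):
        p = self._factors(self.users, user)
        q = self._factors(self.items, item)
        return sum(a * b for a, b in zip(p, q))

    def update(self, user, item, target):
        """One SGD step on squared error with L2 regularization; returns the pre-update error."""
        p = self._factors(self.users, user)
        q = self._factors(self.items, item)
        err = target - self.predict(user, item)
        for f in range(self.dim):
            pf, qf = p[f], q[f]
            p[f] += self.lr * (err * qf - self.reg * pf)
            q[f] += self.lr * (err * pf - self.reg * qf)
        return err


model = OnlineMF()
for user, item, target in [("u1", "i1", 1.0), ("u2", "i1", 0.0), ("u1", "i2", 1.0)]:
    model.update(user, item, target)  # e.g., events arriving from a stream
print(round(model.predict("u1", "i1"), 3))
```

Each event touches only two vectors, so update cost is independent of the number of users and items. That is what makes this style of model attractive for streaming updates.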

Data Pipelines and Infrastructure:

Real-time data ingestion frameworks: Apache Kafka, Apache Flink, and Flume handle high-volume, low-latency data streams like user actions and clickstream data.

Distributed computing platforms: Spark and Ray enable parallel processing and model training on large datasets, which is crucial for real-time scalability.

Low-latency storage solutions: Redis and Memcached store frequently accessed data (e.g., user profiles, item features) in-memory for lightning-fast retrieval and personalization.

Evaluating and Testing:

Track the effectiveness of your real-time recommendations using relevant metrics:

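NDCG@K: Measures ranking quality within the top K recommendations. Each item's relevance is discounted by its rank position, so highly relevant items placed near the top score higher, and the result is normalized by the score of the ideal ordering.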

Recall@K: Measures the proportion of relevant items retrieved within the top K recommendations.

Conversion Rate: Ultimately, the impact on business goals like purchase rate or engagement duration matters most.
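Both ranking metrics are short to implement. This sketch assumes graded relevance labels given as a dict, where items missing from the dict count as irrelevant. It uses the common linear-gain form of DCG with a `log2(rank + 1)` discount. Some implementations use an exponential gain `2**rel - 1` instead. The function names are illustrative.

```python
import math


def dcg_at_k(gains, k):
    """Discounted cumulative gain: the item at 1-based rank r is discounted by log2(r + 1)."""
    return sum(g / math.log2(i + 2) for i, g in enumerate(gains[:k]))


def ndcg_at_k(ranked_items, relevance, k):
    """DCG of the actual ranking divided by the DCG of the ideal ranking."""
    gains = [relevance.get(item, 0.0) for item in ranked_items]
    ideal = sorted(relevance.values(), reverse=True)
    idcg = dcg_at_k(ideal, k)
    return dcg_at_k(gains, k) / idcg if idcg > 0 else 0.0


def recall_at_k(ranked_items, relevant, k):
    """Fraction of all relevant items that appear in the top k."""
    relevant = set(relevant)
    if not relevant:
        return 0.0
    return len(set(ranked_items[:k]) & relevant) / len(relevant)


ranked = ["a", "b", "c", "d"]      # model output, best first
relevance = {"b": 1.0, "d": 1.0}   # hypothetical ground truth
print(round(ndcg_at_k(ranked, relevance, k=2), 3), recall_at_k(ranked, relevance, k=2))  # 0.387 0.5
```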

A/B testing is crucial for comparing different recommendation strategies and measuring real-world impact:

Randomly split users into groups receiving different recommendation strategies.

Track key metrics across groups to identify statistically significant improvements.

Continuously iterate and refine based on A/B testing results.
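A common way to implement the random split is to hash each user ID together with an experiment name. This gives a stable, effectively random assignment, so a returning user always sees the same variant. A two-proportion z-test is one standard way to check a difference in conversion rates for significance. The sketch below assumes two variants and raw conversion counts as input. The function names and example numbers are hypothetical.

```python
import hashlib
import math


def assign_variant(user_id, experiment, variants=("control", "treatment")):
    """Stable pseudo-random split: hash(experiment, user) picks the group."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    return variants[int(digest, 16) % len(variants)]


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates; returns (z, p_value)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


print(assign_variant("user-42", "recs-v2"))
# Hypothetical results: 500/10,000 conversions in control vs 580/10,000 in treatment.
z, p = two_proportion_z_test(500, 10_000, 580, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A p-value below a threshold chosen in advance (often 0.05) suggests the difference is unlikely to be noise. Fixing the sample size up front avoids the inflated false-positive rate that comes from repeatedly peeking at results.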

The article also includes a good list of references for further reading on the subject.

FlashInfer is a library I shared in last week's newsletter. This week it published a blog post that goes into much more detail about the library and the key technical innovations it brings to LLM serving.

The computational demands of large language models make them challenging to serve efficiently. FlashInfer emerges as a powerful open-source library focused on optimizing attention kernels, the lifeblood of LLM performance.

Attention and its Bottlenecks:

At the heart of LLMs lies attention, a mechanism that allows the model to focus on relevant parts of an input sequence. Three crucial stages exist in LLM serving:

Prefill: Computes attention between all queries and the Key-Value (KV) cache.

Decode: Computes attention between a single query (the newest generated token) and the KV cache.

Append: Computes attention between the queries of newly appended tokens and the KV cache.

Each stage has its own operational intensity, reflecting the balance between compute and memory access. Understanding these intensities is crucial for optimization. However, traditional implementations often suffer from several bottlenecks:

Inefficient Memory Utilization: Attention kernels can underutilize GPU memory bandwidth, limiting performance.

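Small-Query Scenarios: When the query length is small, as in decode, attention has low operational intensity and is memory-bound. With few requests or heads, a conventional kernel may also launch too few thread blocks to keep all GPU cores busy.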

Compressed/Quantized KV-Cache: Techniques like pruning and quantization reduce memory footprint but require specialized attention kernels.
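The difference in operational intensity between the stages can be estimated with a back-of-the-envelope calculation. This is a rough model, not FlashInfer's exact accounting. It assumes one attention head, FP16 storage (2 bytes per element), and a head dimension of 128. It also assumes that Q, K, and V are each read once and the output is written once, and it ignores softmax cost and causal masking.

```python
def attention_intensity(q_len, kv_len, head_dim=128, bytes_per_elem=2):
    """Rough FLOPs per byte of memory traffic for one attention head."""
    # Q @ K^T and P @ V each cost 2 * q_len * kv_len * head_dim FLOPs (multiply + add).
    flops = 4 * q_len * kv_len * head_dim
    # Read Q, K, V once and write the output once (softmax scratch kept on-chip).
    bytes_moved = bytes_per_elem * head_dim * (2 * q_len + 2 * kv_len)
    return flops / bytes_moved


decode = attention_intensity(q_len=1, kv_len=4096)      # one new token
append = attention_intensity(q_len=128, kv_len=4096)    # 128 appended tokens
prefill = attention_intensity(q_len=4096, kv_len=4096)  # whole prompt
print(f"decode {decode:.2f}, append {append:.1f}, prefill {prefill:.0f} FLOPs/byte")
```

Decode lands at roughly 1 FLOP per byte, far below what a modern GPU can sustain, so it is memory-bound. Prefill grows with sequence length and becomes compute-bound. Append sits in between and scales with the number of appended tokens.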

FlashInfer tackles these challenges with several clever optimizations:

Split-K Trick: This innovative technique splits the KV cache on the sequence dimension, increasing parallelism and utilizing more GPU cores.

Fused-RoPE Attention: FlashInfer incorporates Rotary Positional Embeddings (RoPE) directly into the attention kernel, eliminating pre-computation overhead.

Low-precision Attention: Lower-precision formats like 8-bit (FP8) are leveraged for significant speedups with minimal accuracy loss.

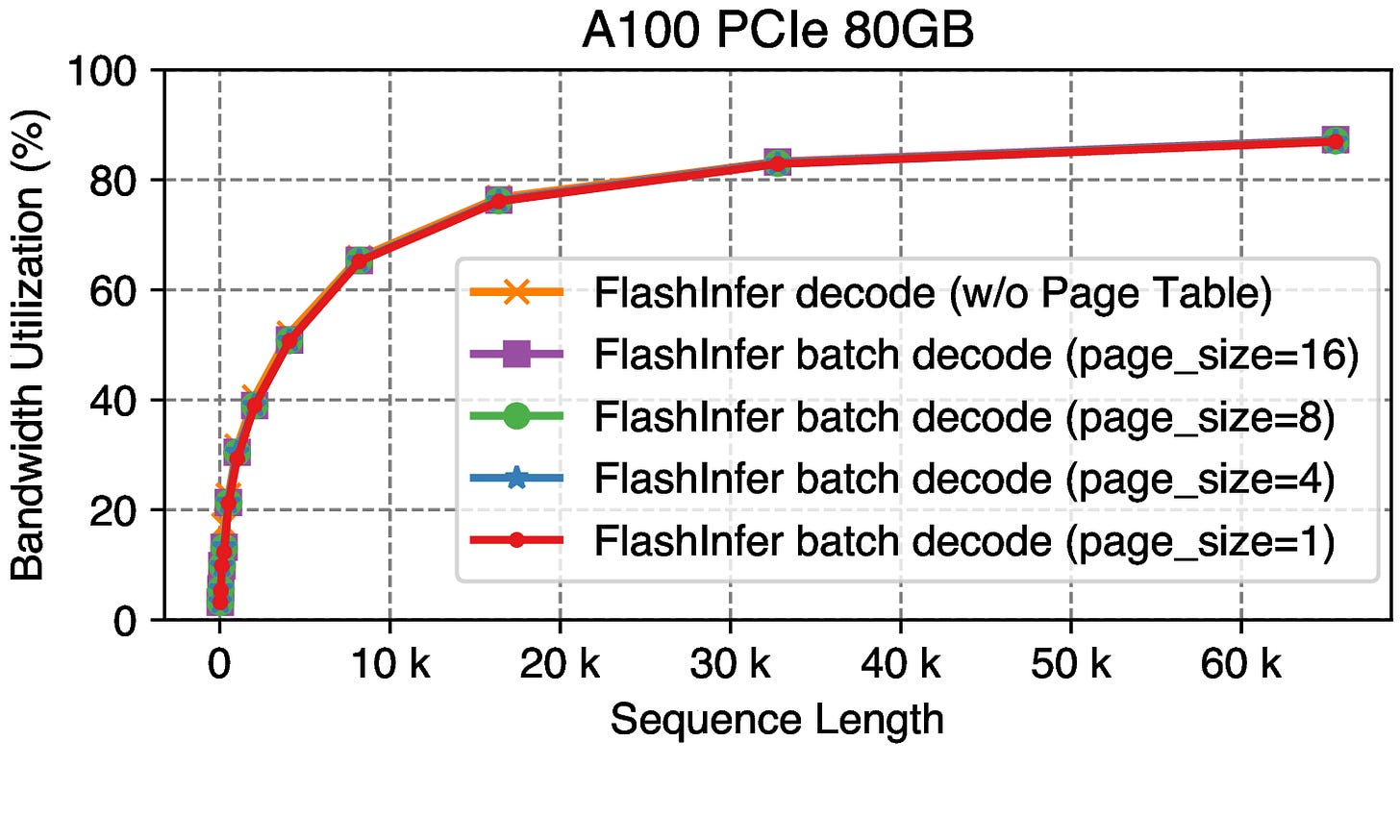

PageAttention Optimizations: Pre-fetching page indices minimizes the performance impact of page size in Paged KV-Cache scenarios.
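The Split-K idea can be shown in plain Python for a single query and a single head. The KV cache is split along the sequence dimension, and each chunk's partial attention is computed with its own maximum score and softmax denominator. The partial results are then merged with the log-sum-exp trick, so the output matches unsplit attention up to floating-point rounding. This is a minimal sketch of the general technique, not FlashInfer's CUDA implementation, and all function names are hypothetical.

```python
import math


def attend_chunk(q, keys, values):
    """Partial attention of one query over one KV chunk.

    Returns (unnormalized output, chunk max score m, sum of exp(score - m)).
    """
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
    return out, m, sum(weights)


def merge_chunks(partials):
    """Rescale each chunk to the global max (log-sum-exp trick) and normalize once."""
    global_max = max(m for _, m, _ in partials)
    dim = len(partials[0][0])
    out, denom = [0.0] * dim, 0.0
    for o, m, s in partials:
        c = math.exp(m - global_max)
        denom += c * s
        for j in range(dim):
            out[j] += c * o[j]
    return [x / denom for x in out]


def split_k_attention(q, keys, values, num_chunks):
    """Split the KV cache along the sequence dimension; chunks are independent work items."""
    size = math.ceil(len(keys) / num_chunks)
    partials = [attend_chunk(q, keys[i:i + size], values[i:i + size])
                for i in range(0, len(keys), size)]
    return merge_chunks(partials)


q = [0.1, 0.2, -0.3, 0.4]
keys = [[((i * 7 + j * 3) % 5) / 5 - 0.4 for j in range(4)] for i in range(10)]
values = [[float((i * 2 + j) % 3) - 1.0 for j in range(4)] for i in range(10)]
print(split_k_attention(q, keys, values, num_chunks=1))
print(split_k_attention(q, keys, values, num_chunks=3))  # same up to rounding
```

On a GPU, each chunk would be processed by a separate thread block, which is where the extra parallelism comes from. The merge step adds a small amount of extra work.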

Benefits and Performance Gains:

Comprehensive Attention Kernels: FlashInfer offers state-of-the-art kernels for various LLM serving use cases, including single-request and batching, covering all common KV-Cache formats.

Boosted Shared-Prefix Batch Decoding: For long prompts and large batch sizes, FlashInfer achieves remarkable speedups of up to 31x compared to baseline implementations.

Accelerated Attention for Compressed/Quantized KV-Cache: FlashInfer supports Grouped-Query Attention, Fused-RoPE Attention, and Quantized Attention, enabling efficient serving with compressed caches.
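For reference, the rotation that a fused-RoPE kernel applies on the fly to queries and keys can be written as below. This sketch assumes the interleaved pairing convention `(x[0], x[1]), (x[2], x[3]), ...` and the common base of 10000. Some models instead pair the first and second halves of the vector. `apply_rope` is a hypothetical helper.

```python
import math


def apply_rope(x, pos, base=10000.0):
    """Rotate each pair (x[2i], x[2i+1]) by angle pos * base**(-2i/d)."""
    d = len(x)
    out = list(x)
    for i in range(d // 2):
        theta = pos * base ** (-2 * i / d)
        c, s = math.cos(theta), math.sin(theta)
        a, b = x[2 * i], x[2 * i + 1]
        out[2 * i] = a * c - b * s
        out[2 * i + 1] = a * s + b * c
    return out


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


q = [0.3, -1.2, 0.5, 0.8]
k = [1.0, 0.4, -0.7, 0.2]
# Same relative offset (query 2 positions after key) gives the same score.
print(dot(apply_rope(q, 5), apply_rope(k, 3)))
print(dot(apply_rope(q, 12), apply_rope(k, 10)))
```

Each pair is rotated by an angle proportional to its position. As a result, the dot product between a rotated query and a rotated key depends only on their relative offset, which is what lets attention scores encode relative position.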

Benchmarks:

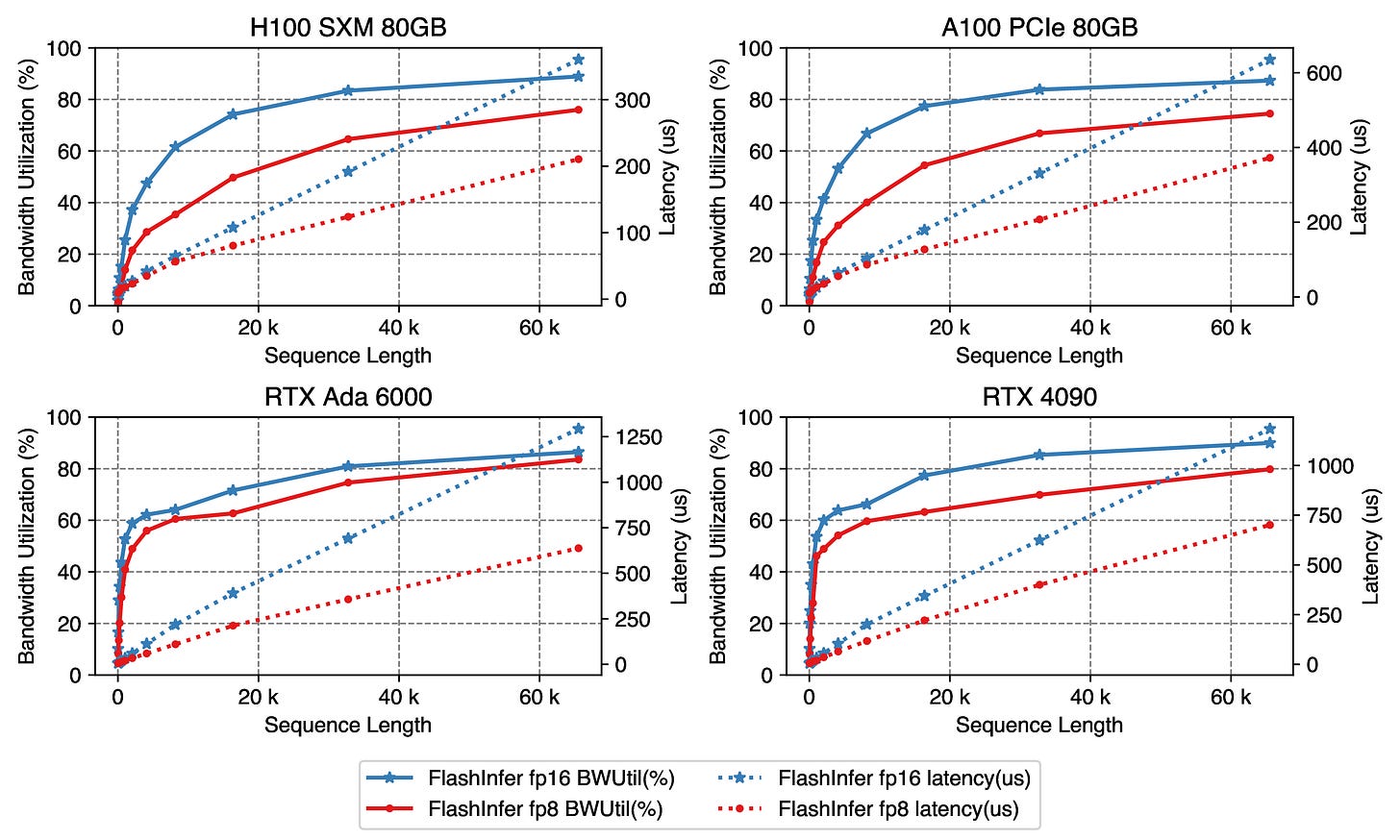

Hardware Variety: Tested on diverse GPUs including H100, A100, RTX 6000 Ada, and RTX 4090, capturing performance across different architectures.

Software Comparisons: Benchmarks against FlashAttention 2.4.2 and vLLM v0.2.6 showcase the improvements achieved by FlashInfer.

Detailed Metrics: Throughput in TFLOP/s for prefill and append kernels, and GPU memory bandwidth utilization for decode and append kernels, provide a comprehensive performance assessment.

Key Benchmark Results:

Prefill kernels: FlashInfer outperforms competitors on all GPUs, with further gains on RTX 4090 using `allow_fp16_qk_reduction`.

Decode & Append optimizations: Split-K significantly improves performance, especially in low-parallelism cases such as small batches with long KV caches. FlashInfer PageAttention outshines vLLM PageAttention across various batch sizes and sequence lengths.

Grouped-Query Attention: FlashInfer (Tensor Cores) demonstrates superior performance compared to FlashAttention 2.4.2 on A100 and H100.

Fused-RoPE Attention: RoPE incurs negligible overhead on all GPUs, maintaining performance.

Low-precision Attention: FP8 kernels achieve up to 2x speedup compared to FP16, demonstrating the benefits of reduced precision.

Page size impact on PageAttention: FlashInfer's prefetching mitigates the performance impact of varying page sizes.
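To see why page size matters, it helps to look at the data structure. The sketch below is a toy paged KV cache in the spirit of vLLM-style paging. It assumes a shared pool of fixed-size pages and a per-sequence page table. The `PagedKVCache` class is hypothetical and is not FlashInfer's API. Every token lookup goes through the page table, and these index lookups are what the page-index prefetching described above targets.

```python
class PagedKVCache:
    """Toy paged KV cache: sequences draw fixed-size pages from a shared pool."""

    def __init__(self, num_pages, page_size):
        self.page_size = page_size
        self.free_pages = list(range(num_pages))
        self.storage = [[None] * page_size for _ in range(num_pages)]  # physical pages
        self.page_table = {}  # seq_id -> list of physical page indices, in order
        self.lengths = {}     # seq_id -> number of tokens stored

    def append(self, seq_id, kv):
        pages = self.page_table.setdefault(seq_id, [])
        n = self.lengths.get(seq_id, 0)
        if n % self.page_size == 0:  # first token or last page full: allocate a page
            if not self.free_pages:
                raise MemoryError("KV cache pool exhausted")
            pages.append(self.free_pages.pop())
        page, slot = pages[n // self.page_size], n % self.page_size
        self.storage[page][slot] = kv
        self.lengths[seq_id] = n + 1

    def gather(self, seq_id):
        """Read a sequence's entries in logical order via its page table."""
        pages = self.page_table.get(seq_id, [])
        n = self.lengths.get(seq_id, 0)
        return [self.storage[pages[i // self.page_size]][i % self.page_size] for i in range(n)]

    def free(self, seq_id):
        """Return a finished sequence's pages to the pool."""
        self.free_pages.extend(self.page_table.pop(seq_id, []))
        self.lengths.pop(seq_id, None)


cache = PagedKVCache(num_pages=4, page_size=2)
for seq_id, kv in [("a", "a0"), ("a", "a1"), ("b", "b0"), ("a", "a2"), ("b", "b1")]:
    cache.append(seq_id, kv)  # strings stand in for (key, value) tensors
print(cache.page_table)   # pages of "a" and "b" are interleaved in the pool
print(cache.gather("a"))  # ['a0', 'a1', 'a2']
```

Smaller pages waste less memory on partially filled pages but require more page-table lookups per sequence. Larger pages reverse that trade-off.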

Future Roadmap:

AMD and Apple GPU Support: Expanding beyond NVIDIA GPUs to cover a wider hardware landscape, making FlashInfer accessible to a broader community.

4-bit Fused Dequantize+Attention Operators and LoRA Operators: Integrating cutting-edge techniques for further performance gains and efficiency improvements.

Performance Optimization on New GPU Architectures: Keeping pace with the rapid evolution of hardware by optimizing FlashInfer for the latest and upcoming GPUs.

New Operators from Emerging LLM Architectures: Adapting and incorporating operators relevant to new and innovative LLM designs as they emerge.

Libraries

DeepSparse is a CPU inference runtime that takes advantage of sparsity to accelerate neural network inference. Coupled with SparseML, Neural Magic's optimization library for pruning and quantizing models, DeepSparse delivers exceptional inference performance on CPU hardware.

It has the following key technical advantages:

Sparse kernels for speedups and memory savings from unstructured sparse weights.

8-bit weight and activation quantization support.

Efficient usage of cached attention keys and values for minimal memory movement.
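To illustrate why unstructured sparsity can save both compute and memory, the sketch below prunes the smallest-magnitude weights and stores the result in compressed sparse row (CSR) format. A matrix-vector product then touches only the nonzero weights. This shows the general idea only and makes no claim about how DeepSparse's kernels are implemented. The helper names are hypothetical.

```python
def prune_by_magnitude(dense, sparsity):
    """Unstructured pruning: zero out roughly the `sparsity` fraction of smallest-|w| weights.

    Ties at the threshold are pruned too, so the result can be slightly sparser.
    """
    flat = sorted(abs(w) for row in dense for w in row)
    cutoff = int(len(flat) * sparsity)
    threshold = flat[cutoff - 1] if cutoff > 0 else -1.0
    return [[w if abs(w) > threshold else 0.0 for w in row] for row in dense]


def to_csr(dense):
    """Compressed sparse row: keep only nonzero values plus their column indices."""
    values, col_idx, row_ptr = [], [], [0]
    for row in dense:
        for j, w in enumerate(row):
            if w != 0.0:
                values.append(w)
                col_idx.append(j)
        row_ptr.append(len(values))
    return values, col_idx, row_ptr


def csr_matvec(csr, x):
    """y = W @ x touching only stored nonzeros, so work scales with nnz, not rows * cols."""
    values, col_idx, row_ptr = csr
    return [sum(values[p] * x[col_idx[p]] for p in range(row_ptr[i], row_ptr[i + 1]))
            for i in range(len(row_ptr) - 1)]


W = [[0.5, -0.1, 0.0, 2.0], [0.05, 0.0, -1.5, 0.2]]
x = [1.0, 2.0, 3.0, 4.0]
csr = to_csr(prune_by_magnitude(W, sparsity=0.5))
print(len(csr[0]), "nonzeros:", csr_matvec(csr, x))
```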

promptbase is an evolving collection of resources, best practices, and example scripts for eliciting the best performance from foundation models like `GPT-4`.

GlotLID is an open-source language identification model that supports more than 1600 languages.