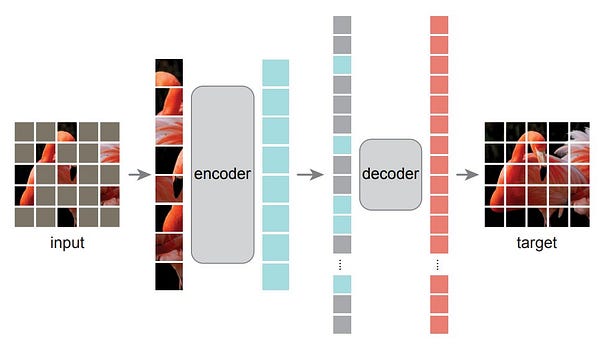

An excellent thread on patch-based self-supervision:

Articles

Reddit wrote about the inference/serving layer of their ML infrastructure. The post covers their previous stack and how they rewrote it from the ground up as a new service called Gazette.

Some of the goals of the rewrite:

Improve the scalability of the system

Deploy more complex models

Have the ability to better optimize individual model performance

Improve reliability and observability of the system and model deployments

Improve the developer experience by distinguishing model deployment code from ML platform code

Google published a blog post accompanying a paper on underspecification as a failure mode in ML pipelines. The TL;DR on underspecification is the following:

… while ML models are validated on held-out data, this validation is often insufficient to guarantee that the models will have well-defined behavior when they are used in a new setting.

The blog post also walks through several examples of underspecification:

Natural Language Processing: They showed that on a variety of NLP tasks, underspecification affected how models derived from BERT process sentences. For example, depending on the random seed, a pipeline could produce a model that depends more or less on correlations involving gender (e.g., between gender and occupation) when making predictions.

Acute Kidney Injury (AKI) prediction: They showed that underspecification affects reliance on operational versus physiological signals in AKI prediction models based on electronic health records.

Polygenic Risk Scores (PRS): They showed that underspecification influences the ability of PRS models, which predict clinical outcomes based on patient genomic data, to generalize across different patient populations.

Torchmetrics published a new version, along with a good blog post that explains all of the new features.

AISummer wrote about Transformers in computer vision. It is a great overview of various transformer architectures, grouped by research direction:

Looking for new “self-attention” blocks (XCiT)

Looking for new combinations of existing blocks and ideas from NLP (PVT, Swin)

Adapting the ViT architecture to a new domain/task (e.g. SegFormer, UNETR)

Forming architectures based on CNN design choices (MViT)

Studying scaling up and down ViTs for optimal transfer learning performance.

Searching for suitable pretext tasks for deep unsupervised/self-supervised learning (DINO)

Pinterest wrote about their search architecture in this blog post. Their hypothesis was that a model that explicitly produces query embeddings from raw text, compatible with the existing Pin embedding space, would perform better than their former approach. Online experiments validated this, showing increases in search engagement and relevance.

PyTorch Lightning wrote about how to implement more complex, customized training loops to enable various training methods.
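To make the idea of "compatible" embeddings concrete, here is a minimal sketch of retrieval in a shared embedding space. It is not Pinterest's implementation: the vectors, the Pin ids, the `rank_pins` helper, and the choice of cosine similarity are all assumptions made for illustration. The point is only that once queries and Pins live in the same space, ranking reduces to a similarity lookup.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def rank_pins(query_embedding, pin_embeddings):
    """Return Pin ids sorted by decreasing similarity to the query.

    Hypothetical helper: assumes the query encoder already maps raw text
    into the same space as the Pin embeddings.
    """
    scored = [(cosine(query_embedding, vec), pin_id)
              for pin_id, vec in pin_embeddings.items()]
    return [pin_id for _, pin_id in sorted(scored, reverse=True)]


# Toy 3-dimensional embeddings, invented for this example.
pins = {
    "pin_sofa": [0.9, 0.1, 0.0],
    "pin_recipe": [0.0, 0.2, 0.9],
    "pin_lamp": [0.7, 0.6, 0.1],
}
query = [1.0, 0.2, 0.0]  # stand-in for an encoded text query

ranking = rank_pins(query, pins)
print(ranking)
```

In a production system the exhaustive scan would typically be replaced by an approximate nearest-neighbor index, but the ranking principle is the same.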

Libraries

PyTorch-Model-Compare is a library to compare two models in PyTorch.

There are many ways to compare two neural networks, but one robust and scalable way is using the Centered Kernel Alignment (CKA) metric, where the features of the networks are compared.

Centered Kernel Alignment (CKA) is a representation similarity metric that is widely used for understanding the representations learned by neural networks. Specifically, CKA takes two feature maps / representations X and Y as input and computes their normalized similarity (in terms of the Hilbert-Schmidt Independence Criterion (HSIC)) as

$$\mathrm{CKA}(K, L) = \frac{\mathrm{HSIC}(K, L)}{\sqrt{\mathrm{HSIC}(K, K)\,\mathrm{HSIC}(L, L)}}$$

where K and L are the similarity (Gram) matrices of X and Y, respectively.

Einshape is a Python library for reshaping tensors. Just as einsum provides a DSL-based unified interface to matmul and tensordot ops, einshape is designed to offer a similar DSL-based approach to unifying reshape, squeeze, expand_dims, and transpose operations.
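The normalized HSIC similarity described above can be sketched in a few lines. This is a dependency-free illustration of linear CKA with the simple biased HSIC estimator, not the PyTorch-Model-Compare API. The function names (`gram`, `center`, `hsic`, `linear_cka`) and the toy data are assumptions made for this example, and a real library would work on batched tensors and may use a different estimator.

```python
import math


def gram(X):
    """Linear-kernel Gram matrix K = X X^T; rows of X are examples."""
    return [[sum(a * b for a, b in zip(xi, xj)) for xj in X] for xi in X]


def center(K):
    """Double-center K, i.e. H K H with H = I - (1/n) * ones."""
    n = len(K)
    row_means = [sum(row) / n for row in K]
    col_means = [sum(K[i][j] for i in range(n)) / n for j in range(n)]
    total_mean = sum(row_means) / n
    return [[K[i][j] - row_means[i] - col_means[j] + total_mean
             for j in range(n)] for i in range(n)]


def hsic(K, L):
    """Biased HSIC estimate: tr(Kc Lc) / (n - 1)^2 for symmetric K, L."""
    n = len(K)
    Kc, Lc = center(K), center(L)
    trace = sum(Kc[i][j] * Lc[i][j] for i in range(n) for j in range(n))
    return trace / (n - 1) ** 2


def linear_cka(X, Y):
    """CKA between two representations of the same n examples."""
    K, L = gram(X), gram(Y)
    return hsic(K, L) / math.sqrt(hsic(K, K) * hsic(L, L))


# Toy features: 4 examples, 2 features each (invented for illustration).
X = [[1.0, 2.0], [3.0, 4.0], [5.0, 7.0], [0.0, 1.0]]
X_rotated = [[-y, x] for x, y in X]  # same features rotated by 90 degrees
Y = [[1.0], [0.0], [0.0], [1.0]]     # an unrelated 1-d representation

print(linear_cka(X, X_rotated))
print(linear_cka(X, Y))
```

Because the Gram matrix is unchanged by rotations of the feature space, the rotated copy scores the same as the original, which is one reason CKA is convenient for comparing layers whose features are not aligned neuron by neuron.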

Notebooks

HuggingFace has a very good notebook on audio classification using transformers.

Tutorials

Getting Started with JAX is a good introductory tutorial.