Libraries

Cachew is a multi-tenant service for efficient input data processing in machine learning jobs.

To minimize end-to-end training time and cost, Cachew jointly optimizes:

elastic, distributed resource allocation for input data processing and

input data caching and materialization of preprocessed data within and across jobs.

Cachew builds on top of the tf.data data loading framework in TensorFlow, extending tf.data service with autoscaling and autocaching policies.

The paper is in here.

Deepseed is a simple library that compares seeds for experimentations results. The report is here and tries to follow the following questions:

What is the distribution of scores with respect to the choice of seed?

Are there black swans, i.e., seeds that produce radically different results?

Does pretraining on larger datasets mitigate variability induced by the choice of seed?

Neighborhood Attention Transformer (NAT), an efficient, accurate and scalable hierarchical transformer that works well on both image classification and downstream vision tasks. It is built upon Neighborhood Attention (NA), a simple and flexible attention mechanism that localizes the receptive field for each query to its nearest neighboring pixels. NA is a localization of self-attention, and approaches it as the receptive field size increases. It is also equivalent in FLOPs and memory usage to Swin Transformer's shifted window attention given the same receptive field size, while being less constrained.

Articles

Apple wrote about the Privacy-Preserving Machine Learning Workshop 2022 in a blog post.

They covered the following topics in the post(some of the subsections are videos from workshop and some of them are papers):

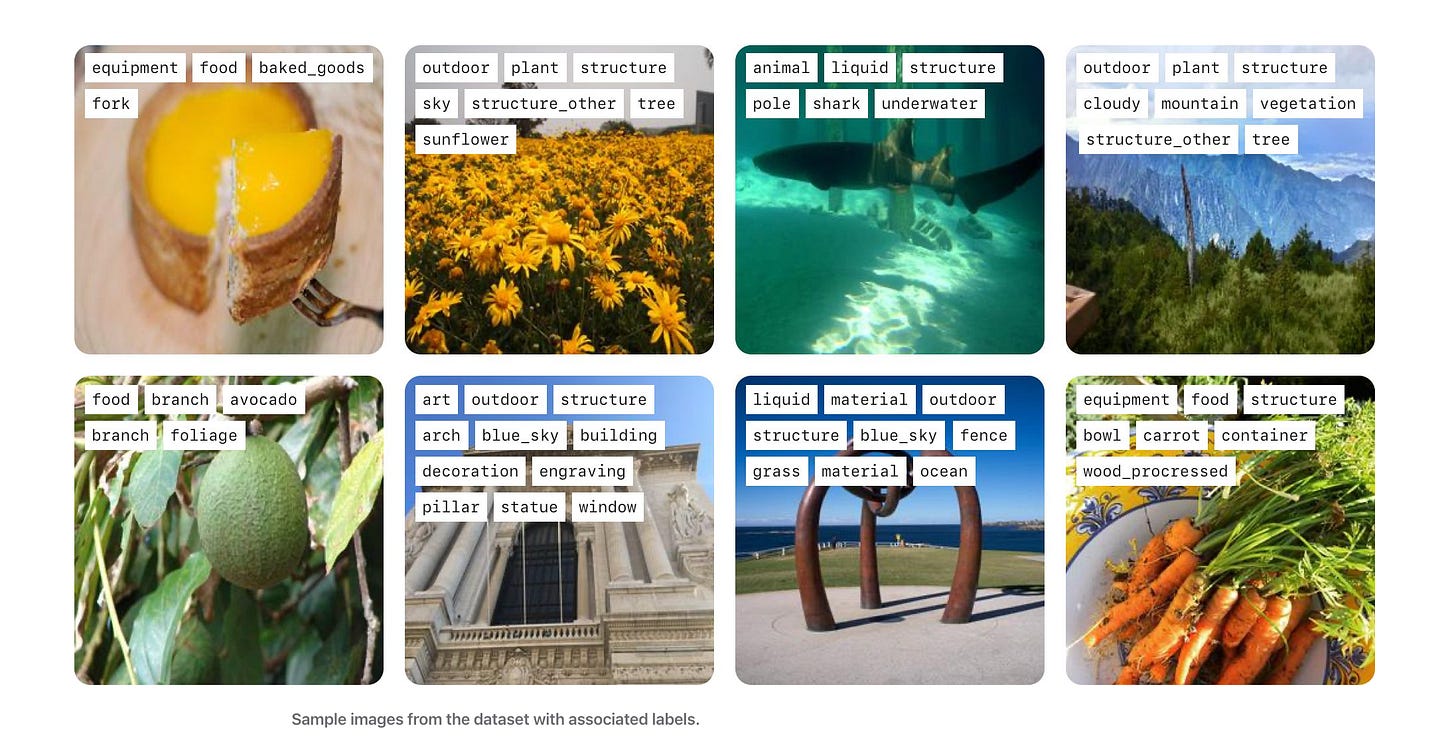

Federated Learning Annotated Image Repository (FLAIR) Dataset for PPML Benchmarking

FLAIR Dataset can be found in GitHub.

ML with Privacy

Learning Statistics with Privacy (Differential Privacy)

Trust Models, Attacks, and Accounting

Cryptographic Primitives

OpenAI wrote about what type of mitigation strategies that they adopted in pre-training of the Dall-E.

They described how we filtered out violent and sexual images from DALL·E 2’s training dataset. Without this mitigation, the model would learn to produce graphic or explicit images when prompted for them, and might even return such images unintentionally in response to seemingly innocuous prompts.

They find that filtering training data can amplify biases, and describe their technique to mitigate this effect. For example, without this mitigation, they noticed that models trained on filtered data sometimes generated more images depicting men and fewer images depicting women compared to models trained on the original dataset.

They turned to the issue of memorization, finding that models like DALL·E 2 can sometimes reproduce images they were trained on rather than creating novel images. In practice, they found that this image regurgitation is caused by images that are replicated many times in the dataset, and mitigate the issue by removing images that are visually similar to other images in the dataset.

Classes

MIT released a new class about Deep Learning for Art, Aesthetics, and Creativity in here.