Pinterest launches a new Transformer + DCNv2 recommender model through GPU Inference

Jax Deployment, HiveMind, DiscoArt

I enjoyed the following interview with Demis Hassabis last week:

Articles

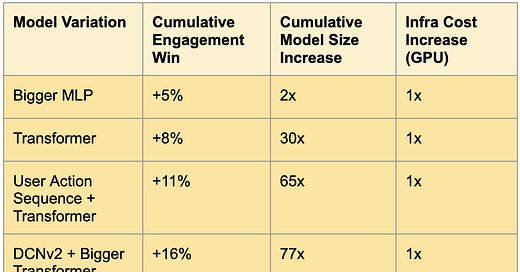

Pinterest launched a new recommender model served with GPU inference and described the techniques behind it in a blog post.

They report good results from using a Transformer model together with DCNv2 and sequential modeling, including large engagement wins.
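For readers unfamiliar with DCNv2, its core building block is the cross layer, which computes x_{l+1} = x_0 ⊙ (W x_l + b) + x_l so that each layer adds explicit feature interactions with the original input. The following is a minimal pure-Python sketch of that formula only. The function name, the toy weights, and the two-dimensional input are illustrative assumptions, and nothing here reflects how Pinterest actually wires the layer into its model.

```python
def cross_layer(x0, xl, weight, bias):
    """One DCN-V2 style cross layer: x0 * (W @ xl + b) + xl, elementwise product."""
    d = len(x0)
    out = []
    for i in range(d):
        # Row i of the linear map W @ xl + b.
        linear = sum(weight[i][j] * xl[j] for j in range(d)) + bias[i]
        # Multiply by the original input and add the residual connection.
        out.append(x0[i] * linear + xl[i])
    return out


# Toy values chosen for illustration only.
x0 = [1.0, 2.0]
W = [[0.5, 0.0], [0.0, 0.5]]
b = [0.0, 1.0]

x1 = cross_layer(x0, x0, W, b)  # first cross layer takes x0 as both inputs
x2 = cross_layer(x0, x1, W, b)  # stacking raises the interaction order
print(x1, x2)
```

Stacking the layer raises the polynomial degree of the feature interactions by one each time, which is why a few cross layers are usually combined with a regular deep network.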

Google built a new Transformer-based code completion model and explained it in detail in this blog post. You have probably seen Copilot from GitHub or the Codex/Jigsaw models that we have covered in:

In this blog post, they talk about how they trained the model on their monorepo, how it is deployed (TPUs serve the model), and which programming languages the code completion supports.

The TensorFlow team published a series of nice videos on how to deploy production models with TensorFlow Serving. TensorFlow Serving also has experimental support for JAX models, and you can watch that video here, too.

Libraries

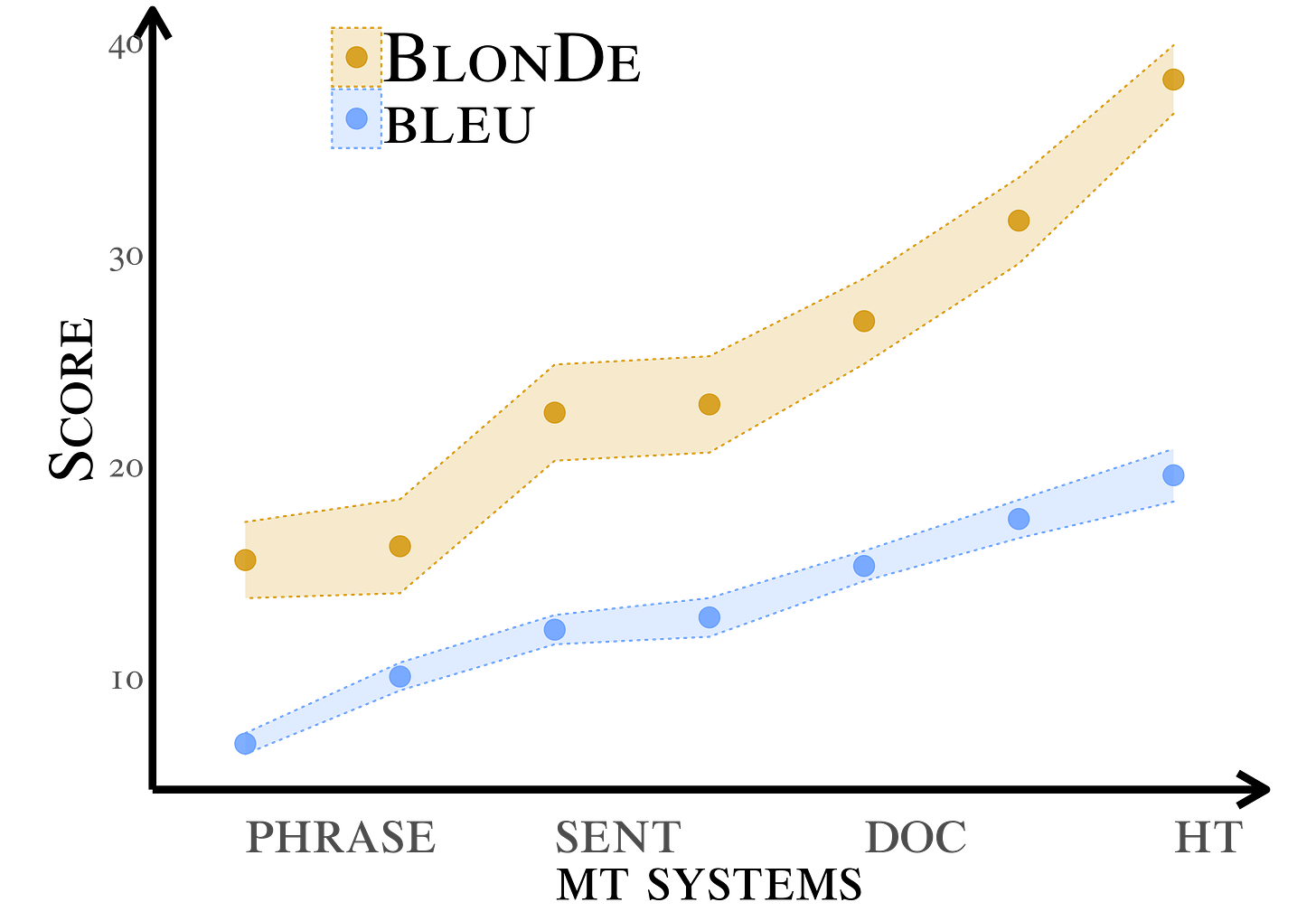

BlonDe and BWB are developed for document-level machine translation. BlonDe is an automatic evaluation metric that explicitly tracks discourse phenomena. BWB is a large-scale bilingual parallel corpus that consists of web novels.

Standard automatic metrics, e.g. BLEU, are not reliable for document-level MT evaluation. They can neither distinguish document-level improvements in translation quality from sentence-level ones, nor identify the discourse phenomena that cause context-agnostic translations.

BlonDe is proposed to widen the scope of automatic MT evaluation from sentence to the document level. It takes discourse coherence into consideration by categorizing discourse-related spans and calculating the similarity-based F1 measure of categorized spans.
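To make the idea of an F1 measure over categorized spans concrete, here is a simplified sketch that scores a hypothesis against a reference using clipped counts of (category, span) pairs. It is not the BlonDe package's API or its exact scoring. The category names, the pre-extracted spans, and the exact-match comparison are all assumptions made for illustration.

```python
from collections import Counter


def span_f1(hyp_spans, ref_spans):
    """F1 over multisets of (category, span) pairs using clipped match counts."""
    hyp, ref = Counter(hyp_spans), Counter(ref_spans)
    # Counter intersection keeps the minimum count of each shared pair.
    overlap = sum((hyp & ref).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(hyp.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


# Hypothetical spans, assumed to be extracted from a whole document.
ref = [("pronoun", "she"), ("pronoun", "she"), ("entity", "Lin"), ("tense", "past")]
hyp = [("pronoun", "she"), ("pronoun", "he"), ("entity", "Lin"), ("tense", "past")]

score = span_f1(hyp, ref)
print(round(score, 2))
```

In this toy case the wrong pronoun in the hypothesis lowers the score, which is the kind of discourse-level error that a sentence-level n-gram metric can easily miss.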

Hivemind is a PyTorch library for decentralized deep learning across the Internet. Its intended usage is training one large model on hundreds of computers from different universities, companies, and volunteers.

Distributed training without a master node: Distributed Hash Table allows connecting computers in a decentralized network.

Fault-tolerant backpropagation: forward and backward passes succeed even if some nodes are unresponsive or take too long to respond.

Decentralized parameter averaging: iteratively aggregate updates from multiple workers without the need to synchronize across the entire network (paper).

Train neural networks of arbitrary size: parts of their layers are distributed across the participants with the Decentralized Mixture-of-Experts (paper).
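The decentralized averaging idea in the list above can be illustrated with a toy simulation: workers repeatedly average within small random groups, and every worker drifts toward the global mean without any step that involves the whole network. This sketch does not use Hivemind's API and involves no networking or DHT. The function name, the group size, and the scalar "parameters" are assumptions for illustration.

```python
import random


def gossip_round(values, group_size, rng):
    """Shuffle workers into small groups and replace each value by its group mean."""
    order = list(range(len(values)))
    rng.shuffle(order)
    new_values = list(values)
    for start in range(0, len(order), group_size):
        group = order[start:start + group_size]
        mean = sum(values[i] for i in group) / len(group)
        for i in group:
            new_values[i] = mean
    return new_values


rng = random.Random(0)
params = [float(i) for i in range(8)]       # one scalar "parameter" per worker
global_mean = sum(params) / len(params)     # what a full all-reduce would give

for _ in range(20):
    params = gossip_round(params, group_size=2, rng=rng)

spread = max(params) - min(params)
print(global_mean, spread)
```

Each round preserves the overall mean, so the workers converge toward the value a global all-reduce would have produced, while each worker only ever communicates with its current group.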

DiscoArt is an elegant way of creating compelling Disco Diffusion artworks for generative artists, AI enthusiasts and hard-core developers. DiscoArt has a modern & professional API with a beautiful codebase, ensuring high usability and maintainability. It introduces handy features such as result recovery and persistence, gRPC/HTTP serving w/o TLS, post-analysis, easing the integration to larger cross-modal or multi-modal applications.

Classes

CS25: Transformers United is a graduate-level class that covers the Transformer model architecture in detail. The schedule lists a number of good readings, and several class sessions are run as paper reading sessions. The videos of the classes are available on YouTube.

MIT has Deep Learning for Art, Aesthetics, and Creativity, which covers a variety of deep learning models and how they can be used to create various forms of art.

Harvard has an introduction to data science class that goes over deep learning methods and the basic mathematical foundations.

Conferences

Stanford has the AI+Health online conference, where the Stanford Institute for Human-Centered Artificial Intelligence (HAI), Artificial Intelligence in Medicine and Imaging (AIMI), and Center for Continuing Medical Education (CME) are convening experts and leaders from academia, industry, government, and clinical practice to explore critical and emerging issues related to AI's impact across the spectrum of healthcare. Content will be relevant to practitioners, researchers, executives, policymakers, and professionals, with and without technical expertise.

Apply Conf has a number of videos available on demand. If you are interested in ML and data engineering, it is definitely worth checking out!

Interpretable Machine Learning in Natural and Social Sciences made its videos available on its website.

The workshop’s aim is:

This workshop will convene an interdisciplinary group of scholars to inspire clear foundational formulations of interpretability in a variety of domains where questions of interpretability arise in the application of machine learning, statistics, and data science more broadly. The attendees will include scholars from both the natural sciences — including precision medicine and the physical, biological and neuroscience sciences, and the social sciences — including political science, economics, and law, together with machine learners, statisticians, and data scientists. Across these domains, the term "interpretability" is often overloaded to speak to such disparate concerns as assisting in model checking, comparing extracted patterns against domain knowledge, extracting insights and generating hypotheses, anticipating failures on out-of-domain data, and providing accountability and contestability to individuals subject to data-driven decision-making.

Papers

Machine Learning Operations (MLOps): Overview, Definition, and Architecture talks about what MLOps is in broad terms.

The authors conduct mixed-method research, including a literature review, a tool review, and expert interviews. As a result of these investigations, the paper provides an aggregated overview of the necessary principles, components, and roles, as well as the associated architecture and workflows.

Deep Learning Interviews: Hundreds of fully solved job interview questions from a wide range of key topics in AI has a number of deep learning interview questions that range from theory to system design.