Hi,

Last week I covered the ML infrastructure component of Pathways; this week Google published the modeling paper as well. It is the first article below.

OpenAI also released a new version of DALL-E this week, so make sure to check that out as well.

Articles

Google published the Pathways modeling paper and announced it in a blog post. As a reminder, the previous newsletter covered the ML-infrastructure-oriented paper.

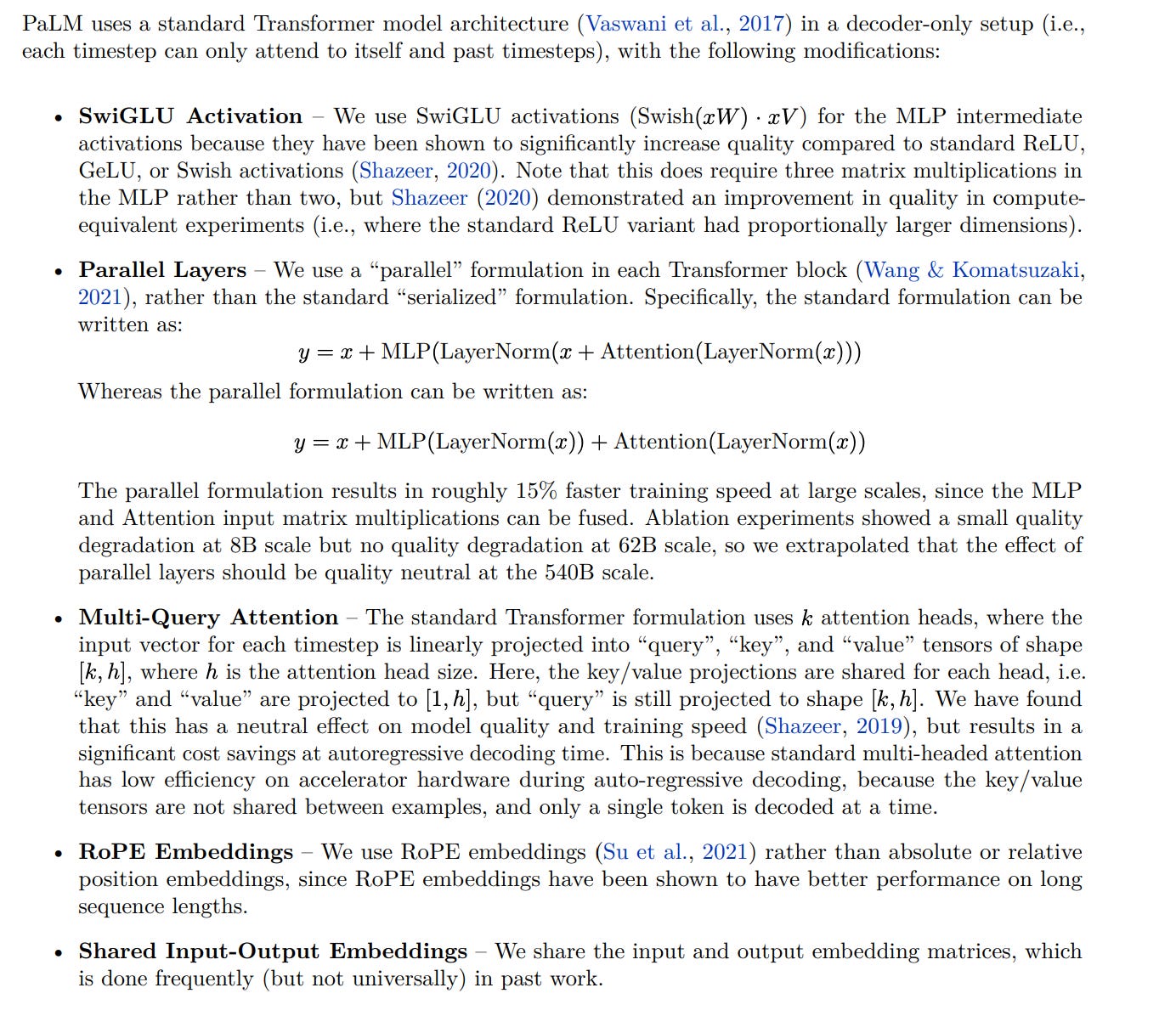

The architecture closely follows the standard Transformer, with a number of modifications such as the SwiGLU activation function, no bias terms, and RoPE embeddings. A PyTorch implementation from another group is available here.
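
To make the SwiGLU and no-bias modifications concrete, here is a minimal pure-Python sketch. It assumes the commonly used formulation FFN(x) = (Swish(x W_gate) * x W_up) W_down; the function and weight names (`swiglu_ffn`, `w_gate`, `w_up`, `w_down`) are hypothetical, and this is an illustration rather than the paper's or the linked repository's implementation.

```python
import math


def swish(z):
    """Swish (SiLU) activation: z * sigmoid(z)."""
    return z / (1.0 + math.exp(-z))


def matvec(x, w):
    """Multiply a row vector x (length n) by a matrix w (n rows, m columns).

    There is deliberately no bias term, mirroring the "no bias" modification.
    """
    return [sum(x[i] * w[i][j] for i in range(len(x))) for j in range(len(w[0]))]


def swiglu_ffn(x, w_gate, w_up, w_down):
    """Feed-forward block with a SwiGLU activation (assumed formulation)."""
    gate = [swish(g) for g in matvec(x, w_gate)]  # gated branch
    up = matvec(x, w_up)                          # linear branch
    hidden = [g * u for g, u in zip(gate, up)]    # element-wise product
    return matvec(hidden, w_down)                 # project back to model width


# Toy usage with 2x2 identity weights (values chosen only for illustration).
identity = [[1.0, 0.0], [0.0, 1.0]]
output = swiglu_ffn([1.0, 2.0], identity, identity, identity)
```

With identity weights, each output element reduces to `swish(x_i) * x_i`, which makes the gating easy to check by hand.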

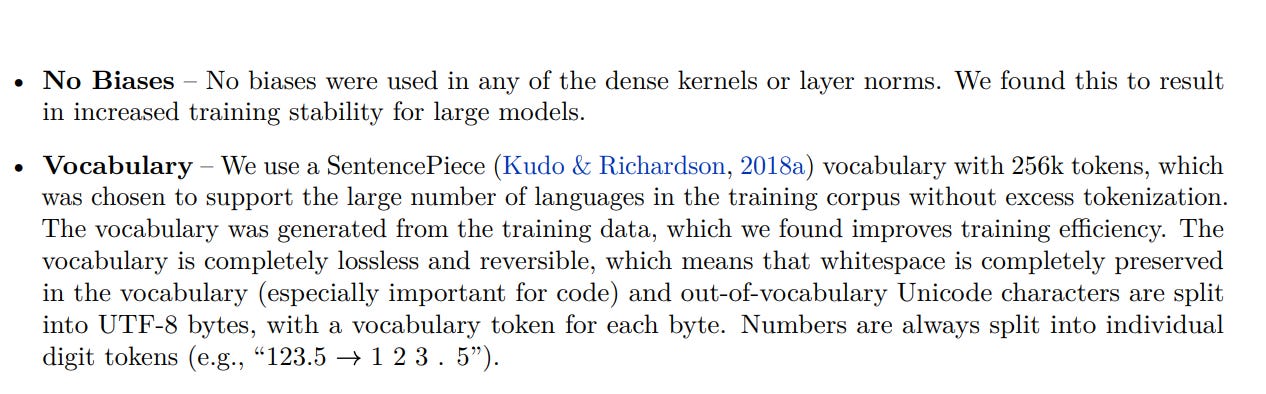

OpenAI released the second version of DALL-E, DALL-E 2. This version is even better and creates much more realistic images from text prompts.

The paper is here, and the research has its own Instagram page as well.

LAION released a very large-scale multimodal dataset and announced it in a blog post. The dataset has 5.85B image-text pairs, but the pairs have not all been manually validated. They also released a kNN index for the image data here.
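
For readers unfamiliar with what a kNN index over image embeddings is used for, the following is a small brute-force sketch of nearest-neighbor lookup by cosine similarity. It is only an illustration: the embeddings and names (`knn`, `embeddings`) are made up, and an index over billions of items would rely on approximate search rather than this exhaustive scan.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def knn(query, embeddings, k):
    """Return the names of the k embeddings most similar to the query."""
    ranked = sorted(
        embeddings.items(),
        key=lambda item: cosine(query, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:k]]


# Hypothetical 2-dimensional "image embeddings" for illustration only.
embeddings = {
    "cat": [1.0, 0.0],
    "dog": [0.9, 0.1],
    "car": [0.0, 1.0],
}
neighbors = knn([1.0, 0.0], embeddings, k=2)
```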

Stanford researchers published a blog post announcing a very good book on algorithms for decision making. The book is available here.

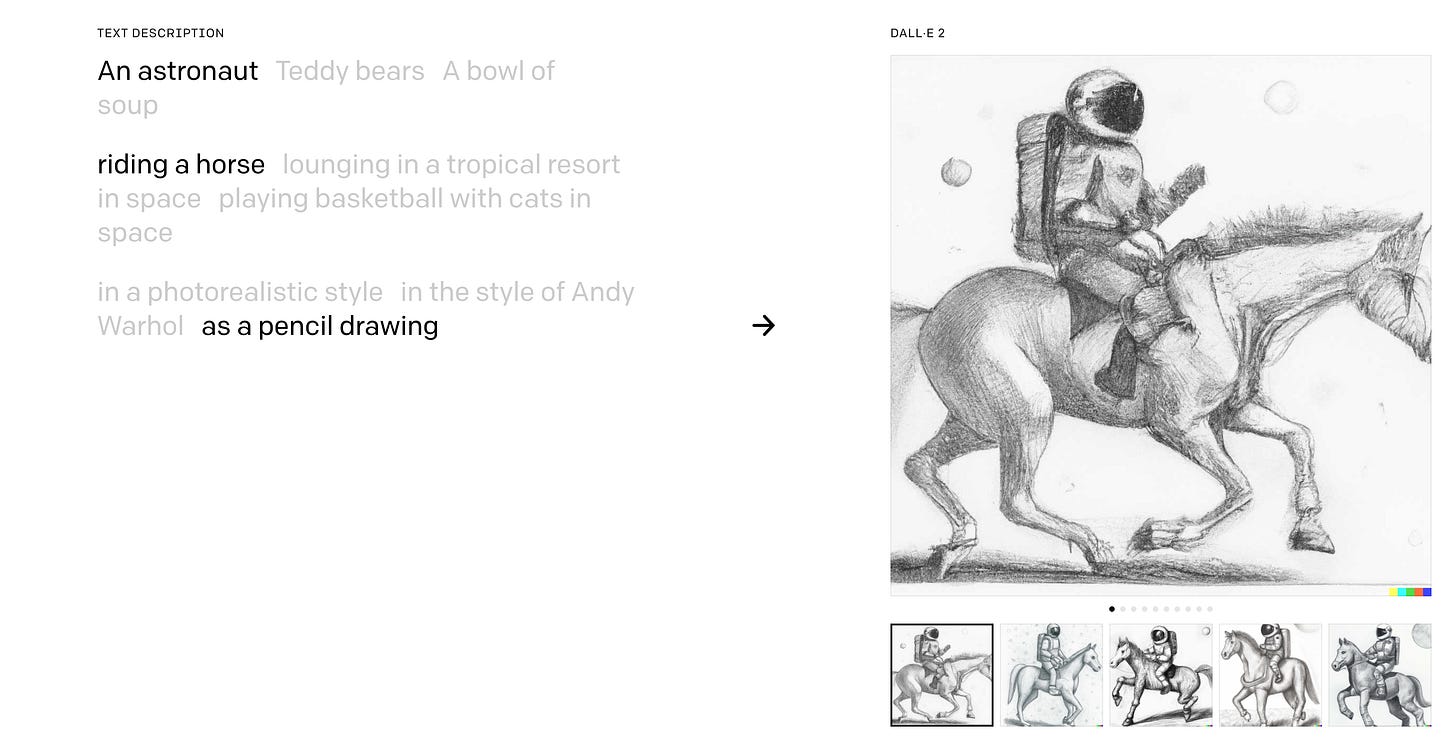

PyTorch Lightning released a couple of new APIs, renamed its previous Plugin API to Strategy, and wrote a comprehensive blog post about the changes.

Libraries

ML Foundations has a library called OpenCLIP, an open-source implementation of CLIP, the well-known model released by OpenAI.

Salesforce released the CodeGen library, which lets you do program synthesis. The models were trained on TPU-v4 and are described as competitive with OpenAI Codex.

Videos

Deepak Narayanan talks about resource-efficient execution of deep learning computations:

He covers:

Heterogeneous computing

Various scheduling mechanisms

Some of the work that is being done in data and model parallelism

Ellie Pavlick discusses implementing rules and symbols with deep learning models and tries to answer the following question: Can neural networks (without any explicit symbolic components) nonetheless implement symbolic reasoning at the computational level?

She goes through multiple tests of symbolic and rule-governed behavior and asks whether neural networks can actually be tested for these properties.

In the following video, she concludes that in certain cases neural models appear to encode symbol-like concepts (e.g., conceptual representations that are abstract, systematic, and modular):