LLM Inference Made Easy, TSMixer for Time Series

Which inference framework to use to host your LLM?

Articles

Databricks wrote a rather long article on optimizing LLM inference to make it more efficient and reduce latency.

Some of the important points from this article are that memory bandwidth is key for LLM inference and that batching is critical for achieving high throughput.

Some of the challenges the post covers in detail:

Memory bandwidth: LLMs are very large models. Generating each token requires reading the model weights from memory, so decoding is usually limited by memory bandwidth rather than by compute, and the largest models may not even fit in the memory of a single device.

Latency: LLMs can also be slow to run, especially for long inputs and outputs. This is a challenge for applications that require real-time responses.
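To see why memory bandwidth and batching matter so much, here is a back-of-the-envelope sketch. It assumes decoding is purely bandwidth-bound: each step streams all weights from memory once and produces one token per request in the batch. The model size (7B parameters) and bandwidth (about 2 TB/s) are illustrative assumptions, not figures from the Databricks post, and KV-cache reads and compute limits are ignored.

```python
# Back-of-the-envelope decoding cost for a memory-bandwidth-bound LLM.
# Assumed, illustrative numbers: a 7B-parameter model on a GPU with ~2 TB/s of bandwidth.
# KV-cache reads and compute limits are ignored.


def decode_step_seconds(n_params: float, bytes_per_param: float, bandwidth_bytes_per_s: float) -> float:
    """Lower bound on one decoding step: all weights must be streamed from memory once."""
    return n_params * bytes_per_param / bandwidth_bytes_per_s


def tokens_per_second(n_params: float, bytes_per_param: float,
                      bandwidth_bytes_per_s: float, batch_size: int) -> float:
    """One pass over the weights yields one new token for every request in the batch."""
    return batch_size / decode_step_seconds(n_params, bytes_per_param, bandwidth_bytes_per_s)


N_PARAMS = 7e9
BANDWIDTH = 2e12  # bytes per second

for label, bytes_per_param in [("fp16", 2), ("int8", 1)]:
    step_ms = 1000 * decode_step_seconds(N_PARAMS, bytes_per_param, BANDWIDTH)
    for batch in (1, 32):
        tps = tokens_per_second(N_PARAMS, bytes_per_param, BANDWIDTH, batch)
        print(f"{label}: {step_ms:.1f} ms/step, batch {batch:>2}: ~{tps:,.0f} tokens/s")
```

Under these assumptions an fp16 step takes about 7 ms (roughly 143 tokens/s for a single request), halving the bytes per weight halves that time, and a batch of 32 gets 32 tokens for nearly the same memory traffic. The linear scaling with batch size is an idealization: at large enough batches decoding becomes compute-bound.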

Some of the best practices the post describes in detail:

Batching: Batching is a technique where multiple inputs are processed together. Because decoding is memory-bound, the cost of reading the model weights at each step is shared across every request in the batch, which greatly improves throughput.

Quantization: Quantization is a technique where the weights of a model are reduced in precision. This can help to reduce the memory footprint of the model and improve performance on some hardware platforms.

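Model parallelism: Model parallelism is a technique where the model is split up and processed across multiple devices. This makes it possible to serve models that do not fit on a single device and can reduce latency by spreading the computation and memory traffic across devices.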

The article also discusses a number of other best practices, such as using the right hardware and software stack and optimizing the model architecture.
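Quantization, from the list above, can be illustrated with a minimal sketch of symmetric per-tensor int8 quantization. This is one common scheme chosen for illustration, not necessarily the one the Databricks post benchmarks, and the function names are hypothetical. Each float weight is mapped to an integer in [-127, 127] using a single shared scale.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map [-max|w|, max|w|] linearly onto [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # round() keeps each value within half a quantization step; clamp defensively.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q, scale):
    """Recover approximate float weights from int8 codes and the shared scale."""
    return [v * scale for v in q]


weights = [0.12, -0.5, 0.33, 0.01, -0.27]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
max_err = max(abs(w - r) for w, r in zip(weights, restored))
print(q)
print(f"scale={scale:.5f}, max abs error={max_err:.5f}")
# Storage per weight drops from 4 bytes (fp32) or 2 bytes (fp16) to 1 byte, plus one shared scale.
```

The reconstruction error per weight is at most half the scale. Production schemes often use per-channel or per-group scales so that a single large weight does not coarsen the grid for the whole tensor.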

Google wrote a blog post on the advantages of univariate linear models for time series forecasting and proposed a new multivariate model, TSMixer, that builds on these advantages.

In contrast to univariate time series forecasting, where the goal is to predict the future values of a single time series, multivariate forecasting requires predicting the future values of multiple interrelated time series. This is difficult because the relationships between the series can be complex and can change over time.

After motivating the problem, the post discusses the advantages of univariate linear models for time series forecasting. They are simple and easy to train, and they capture the linear trends and seasonality often present in time series data. Additionally, univariate linear models are robust to noise and outliers.

They then propose TSMixer, a multivariate forecasting model that builds on univariate linear models. TSMixer is an all-MLP architecture consisting of a stack of mixing layers that alternate between time-mixing MLPs (applied along the time axis and shared across features) and feature-mixing MLPs (applied across features and shared across time steps). Each MLP is followed by a residual connection and a layer normalization: the residual connections help mitigate the vanishing gradient problem, while the normalization helps stabilize training.
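The mixing idea can be sketched in pure Python. This minimal, untrained sketch makes several assumptions: a one-layer time-mixing MLP, a two-layer feature-mixing MLP, a residual connection followed by normalization over the whole (time, feature) slab of each sample, and a final linear temporal projection to the forecast horizon. Biases, dropout, and training are omitted, and all names are hypothetical. This is not Google's implementation, whose normalization type and placement may differ.

```python
import math
import random


def rand_matrix(rows, cols, rng):
    """Small random weights; a trained model would learn these."""
    bound = 1.0 / math.sqrt(cols)
    return [[rng.uniform(-bound, bound) for _ in range(cols)] for _ in range(rows)]


def matvec(W, v):
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def relu(v):
    return [max(0.0, x) for x in v]


def transpose(X):
    return [list(col) for col in zip(*X)]


def add(X, Y):
    return [[x + y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]


def norm2d(X, eps=1e-5):
    """Normalize all T*C values of one sample to zero mean, unit variance (no learned affine)."""
    vals = [x for row in X for x in row]
    mean = sum(vals) / len(vals)
    var = sum((x - mean) ** 2 for x in vals) / len(vals)
    return [[(x - mean) / math.sqrt(var + eps) for x in row] for row in X]


class MixerLayer:
    def __init__(self, T, C, hidden, rng):
        self.W_time = rand_matrix(T, T, rng)        # shared by every feature
        self.W_feat1 = rand_matrix(hidden, C, rng)  # shared by every time step
        self.W_feat2 = rand_matrix(C, hidden, rng)

    def __call__(self, X):
        """X has T rows (time steps) and C columns (features)."""
        # Time mixing: the same MLP maps each feature's length-T series.
        time_out = transpose([relu(matvec(self.W_time, s)) for s in transpose(X)])
        X = norm2d(add(X, time_out))  # residual connection, then normalization
        # Feature mixing: the same two-layer MLP maps each time step's C features.
        feat_out = [matvec(self.W_feat2, relu(matvec(self.W_feat1, row))) for row in X]
        return norm2d(add(X, feat_out))


class TSMixer:
    def __init__(self, T, C, horizon, n_layers=2, hidden=8, seed=0):
        rng = random.Random(seed)
        self.layers = [MixerLayer(T, C, hidden, rng) for _ in range(n_layers)]
        self.W_proj = rand_matrix(horizon, T, rng)  # temporal projection: T -> horizon

    def forecast(self, X):
        for layer in self.layers:
            X = layer(X)
        # Linear map from the T input steps to the forecast horizon, per feature.
        return transpose([matvec(self.W_proj, s) for s in transpose(X)])


history = [[math.sin(0.3 * t + c) for c in range(3)] for t in range(16)]  # T=16, C=3
model = TSMixer(T=16, C=3, horizon=4)
prediction = model.forecast(history)
print(len(prediction), "steps x", len(prediction[0]), "features")
```

Because the weights are random, the forecast values are meaningless until the model is trained. The point is the structure: the time-mixing weights are shared across features and the feature-mixing weights are shared across time steps, which keeps the parameter count small.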

They evaluate TSMixer on a variety of long-term forecasting benchmarks and show that it achieves state-of-the-art results, even when compared to more complex multivariate models that incorporate cross-variate information.

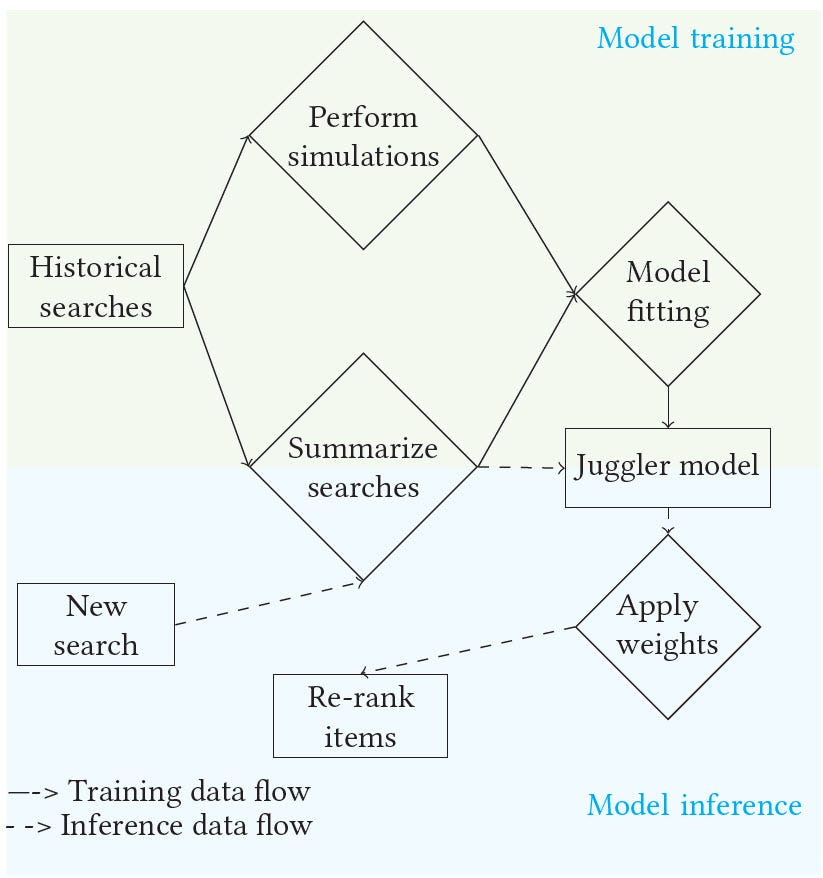

Expedia wrote an article on their search ranking system called Juggler. It is used to rank hotels based on the needs of both travelers and the business. Juggler takes into account many factors, such as the price of the hotel, the traveler's preferences, and the overall health of the marketplace. The goal is to find a balance between satisfying everyone involved.

It uses the following features:

The price of the hotel

The traveler's preferences

The overall health of the marketplace

It also relies on several machine learning models, including:

Linear regression: Linear regression is a statistical method that models the relationship between a target variable and one or more input variables. In the context of the Juggler model, linear regression is used to model the relationship between the price of the hotel and the traveler's satisfaction.

Random forests: Random forests are a type of ensemble learning method that combines the predictions of multiple decision trees. In the context of the Juggler model, random forests are used to predict the traveler's satisfaction with a hotel given the hotel's price and the traveler's preferences.

Gradient boosting machines: Gradient boosting machines are another type of ensemble learning method that combines the predictions of multiple decision trees. Unlike random forests, which train their trees independently, gradient boosting builds trees sequentially, with each new tree correcting the errors of the ensemble so far, and it is often more accurate on tabular data. In the context of the Juggler model, gradient boosting machines are used to predict the traveler's satisfaction with a hotel given the hotel's price, the traveler's preferences, and the overall health of the marketplace.
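Balancing traveler and business needs can be pictured as blending several model outputs into one ranking score. The sketch below is a toy illustration only: the `Hotel` fields, the two scores, and the linear weighting are hypothetical assumptions, not a description of how Juggler actually combines its signals.

```python
from dataclasses import dataclass


@dataclass
class Hotel:
    name: str
    traveler_score: float     # hypothetical: predicted traveler satisfaction in [0, 1]
    marketplace_score: float  # hypothetical: value to the business / marketplace health in [0, 1]


def blended_rank(hotels, traveler_weight=0.7):
    """Sort hotels by a weighted blend of the traveler and marketplace objectives."""
    def score(h):
        return traveler_weight * h.traveler_score + (1 - traveler_weight) * h.marketplace_score
    return sorted(hotels, key=score, reverse=True)


hotels = [Hotel("A", 0.9, 0.2), Hotel("B", 0.7, 0.9), Hotel("C", 0.5, 0.5)]
print([h.name for h in blended_rank(hotels)])                        # balanced weighting
print([h.name for h in blended_rank(hotels, traveler_weight=1.0)])   # traveler-only
```

Changing the single weight reorders the results (B first when marketplace health counts, A first when only traveler satisfaction does), which is the kind of trade-off the article describes.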

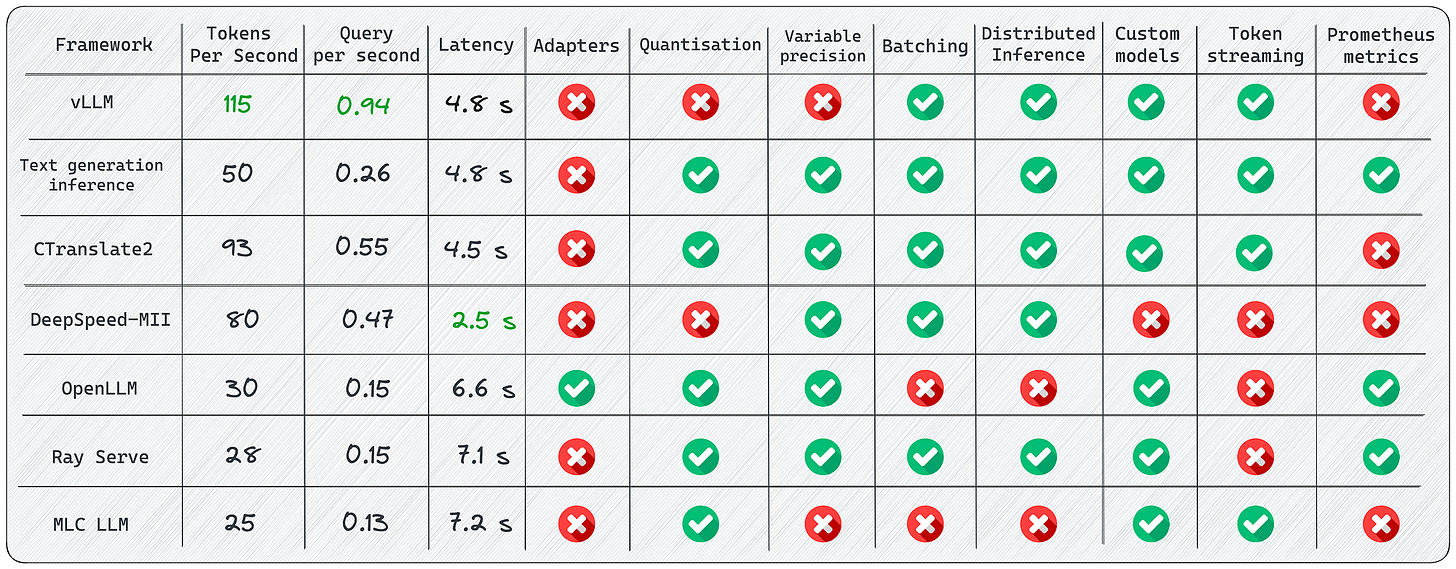

Sergei Savvov wrote an article that provides a comprehensive comparison of various open-source libraries and frameworks for serving large language models (LLMs) in MLOps. The author evaluates the pros and cons of each framework based on real-world deployment examples. Here's a summary of the key points for each framework:

vLLM:

Fast and easy-to-use library for LLM inference and serving.

Achieves significantly higher throughput than HuggingFace Transformers and Text Generation Inference.

Notable features include continuous batching and PagedAttention.

Limitations include complexity in adding custom models and lack of support for adapters.

Text Generation Inference:

A Rust, Python, and gRPC server for text generation inference.

Used in production at HuggingFace.

Features built-in Prometheus metrics and optimized transformers code for inference.

Native support for HuggingFace models.

Limitations include the absence of official adapter support and the need to compile from source.

CTranslate2:

A C++ and Python library for efficient inference with Transformer models.

Offers fast and efficient execution on CPU and GPU.

Dynamic memory usage and support for multiple CPU architectures.

Advantages include parallel and asynchronous execution and prompt caching.

Limitations include the absence of a built-in REST server and of adapter support.

DeepSpeed-MII:

Enables low-latency and high-throughput inference powered by DeepSpeed.

Features load balancing over multiple replicas and non-persistent deployment.

Supports various model repositories and delivers measurable latency and cost reductions.

Limitations include the lack of official releases and support for a limited number of models.

OpenLLM:

An open platform for operating large language models in production.

Offers adapter support, multiple runtime implementations, and HuggingFace Agents.

Good community support and integration with different model implementations.

Limitations include the lack of batching support and built-in distributed inference.

Ray Serve:

A scalable model-serving library for building online inference APIs.

Features a monitoring dashboard, autoscaling, and dynamic request batching.

Extensive documentation and production-ready capabilities.

Limitations include the need for manual model optimization and a high entry barrier.

MLC LLM:

A universal deployment solution that enables LLMs to run efficiently on consumer devices.

Offers platform-native runtimes and memory optimization.

Suitable for deploying models on iOS and Android devices.

Limitations include limited functionality for working with LLMs and a complicated installation process.

The article concludes by highlighting that there is no single "best" framework, as each has its unique advantages and limitations. The choice of framework should depend on specific project requirements and goals.

Note that the article was written in July and may not reflect developments in the LLM-serving space since then.
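Continuous batching, listed above as one of vLLM's key features, can be illustrated with a toy simulation. The sketch counts decoding steps under two policies: static batching, where a batch runs until its longest request finishes, and continuous batching, where finished requests free their slot after every step and queued requests join immediately. The function names and request lengths are hypothetical, and prefill, memory limits, and PagedAttention are ignored.

```python
from collections import deque


def static_batching_steps(lengths, batch_size):
    """Each batch runs until its longest request finishes; the next batch waits."""
    steps = 0
    for i in range(0, len(lengths), batch_size):
        steps += max(lengths[i:i + batch_size])
    return steps


def continuous_batching_steps(lengths, batch_size):
    """Finished requests leave after every step and queued requests take their slots."""
    queue = deque(lengths)
    active = []
    steps = 0
    while queue or active:
        while queue and len(active) < batch_size:
            active.append(queue.popleft())
        steps += 1  # one decoding step emits one token for every active request
        active = [r - 1 for r in active if r > 1]
    return steps


# Tokens each request still has to generate: a mix of long and short answers.
lengths = [100, 5, 5, 5, 100, 5, 5, 5]
print("static:", static_batching_steps(lengths, batch_size=4))
print("continuous:", continuous_batching_steps(lengths, batch_size=4))
```

With this mix, static batching needs 200 steps because the short requests in each batch wait for the 100-token one, while continuous batching finishes in 105 steps by letting the second long request start as soon as a slot frees up.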

Libraries

LLM as Chatbot lets people use many open-source, instruction-following fine-tuned LLMs as a chatbot service. Because different models behave differently and require differently formatted prompts, the project includes a simple library, Ping Pong, for model-agnostic conversation and context management.

Outlines 〰 is a library for neural text generation. You can think of it as a more flexible replacement for the generate method in the transformers library. Outlines 〰 helps developers guide text generation to build robust interfaces with external systems. It provides generation methods that guarantee that the output will match a regular expression or follow a JSON schema.

Outlines 〰 provides robust prompting primitives that separate prompting from execution logic and lead to simple implementations of few-shot generation, ReAct, meta-prompting, agents, etc.

Outlines 〰 is designed to be compatible with the broader ecosystem, not to replace it. It uses as few abstractions as possible, and generation can be interleaved with control flow, conditionals, custom Python functions, and calls to other libraries.

Outlines 〰 is compatible with all models. It only interfaces with models via the next-token logits. It can be used with API-based models as well.
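Since Outlines works on next-token logits, the core idea of regex-guided generation can be sketched as masking every token that would make the output violate the pattern. This sketch makes explicit assumptions: a hand-written automaton for the toy pattern [0-9]{1,3}, a five-token made-up vocabulary, and fake logits from a hypothetical model. The function names are illustrative and are not Outlines' API; according to its authors, Outlines compiles regular expressions into finite-state machines and indexes them against the real tokenizer vocabulary.

```python
import math
import re

EOS = "<eos>"
MAX_DIGITS = 3  # toy pattern: [0-9]{1,3}


def advance(state, token):
    """Return the automaton state after `token`, or None if the token breaks the pattern.
    The state is simply the number of digits emitted so far."""
    if token == EOS:
        return state if state >= 1 else None  # may only stop after at least one digit
    for ch in token:
        if ch not in "0123456789" or state >= MAX_DIGITS:
            return None
        state += 1
    return state


def mask_logits(state, vocab, logits):
    """Set the logit of every token that would violate the pattern to -inf."""
    return [lg if advance(state, tok) is not None else -math.inf
            for tok, lg in zip(vocab, logits)]


def guided_greedy_decode(vocab, logits_fn, max_steps=10):
    state, output = 0, []
    for _ in range(max_steps):
        masked = mask_logits(state, vocab, logits_fn(output))
        tok = vocab[max(range(len(vocab)), key=lambda i: masked[i])]
        if tok == EOS:
            break
        state = advance(state, tok)
        output.append(tok)
    return "".join(output)


VOCAB = ["hello", "42", "7", "123", EOS]


def toy_logits(prefix):
    # Made-up scores from a hypothetical model that would rather chat than answer with a number.
    return [5.0, 3.0, 2.0, 1.0, 0.5 if prefix else -1.0]


result = guided_greedy_decode(VOCAB, toy_logits)
print(result)  # prints 427
assert re.fullmatch(r"[0-9]{1,3}", result)
```

Unconstrained greedy decoding would pick "hello" first; with the mask, only tokens that keep the output a valid prefix of the pattern survive, so the result is guaranteed to match.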

Octopus is a novel vision-language model (VLM) designed to interpret an agent’s visual input and textual task objectives, formulate intricate action sequences, and generate executable code.

This repository provides:

A training data collection pipeline in the octogibson environment

An evaluation pipeline in the octogibson environment

An evaluation pipeline in the octogta environment

A training pipeline for the octopus model

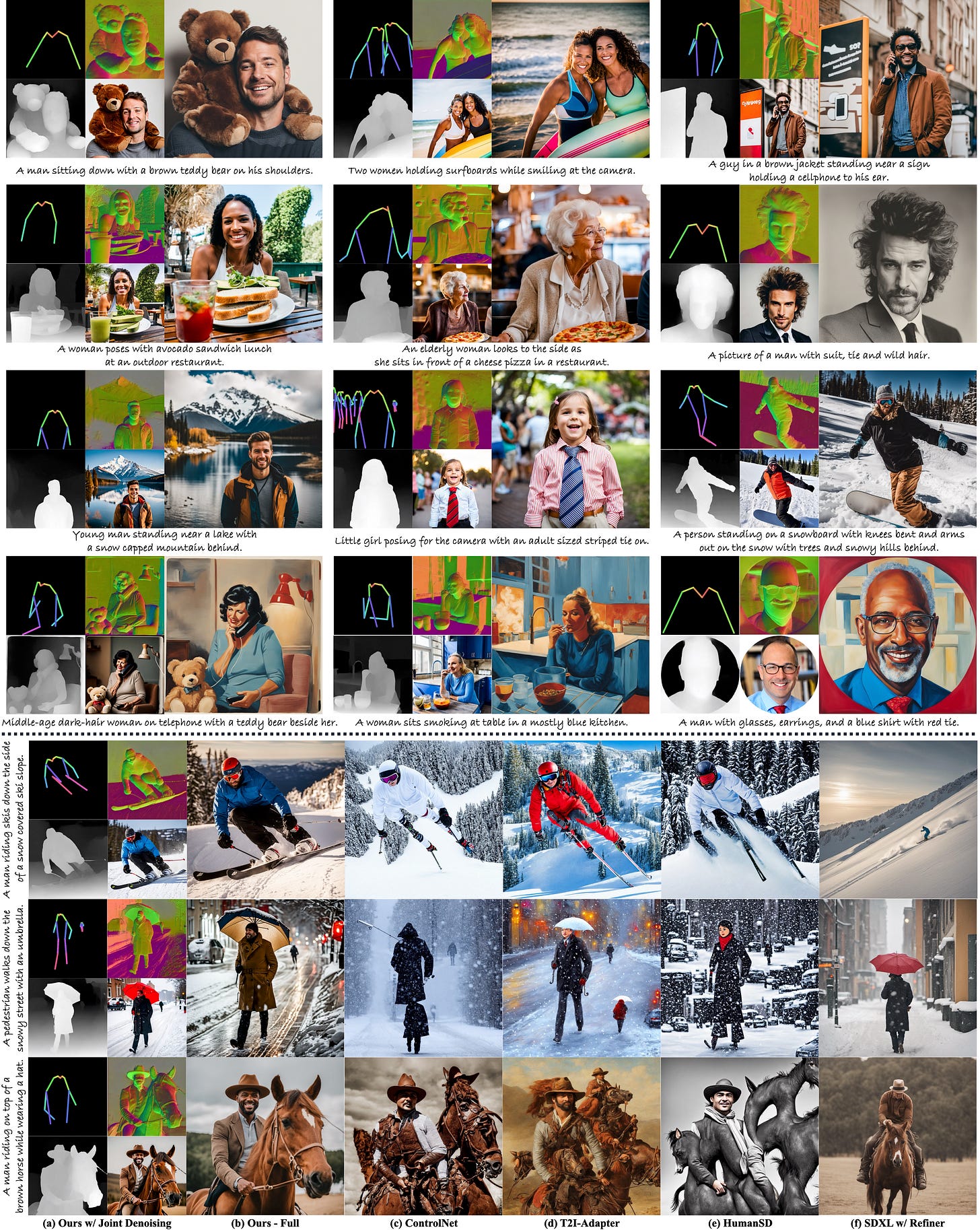

Despite significant advances in large-scale text-to-image models, generating hyper-realistic human images remains a desirable yet unsolved task. Existing models like Stable Diffusion and DALL·E 2 tend to generate human images with incoherent parts or unnatural poses. Snap Research’s key insight is that human images are inherently structured at multiple granularities, from the coarse body skeleton to fine-grained spatial geometry, so capturing the correlations between explicit appearance and latent structure in one model is essential for generating coherent and natural human images. To this end, they propose HyperHuman, a unified framework that generates in-the-wild human images with high realism and diverse layouts. Specifically:

They first build a large-scale human-centric dataset, named HumanVerse, which consists of 340M images with comprehensive annotations such as human pose, depth, and surface normals.

Next, they propose a Latent Structural Diffusion Model that simultaneously denoises the depth and surface normal maps along with the synthesized RGB image. The model enforces joint learning of image appearance, spatial relationships, and geometry in a unified network, where the branches complement each other with both structural awareness and textural richness.

Finally, to further boost visual quality, they propose a Structure-Guided Refiner that composes the predicted conditions for more detailed, higher-resolution generation. Extensive experiments show that the framework achieves state-of-the-art performance, generating hyper-realistic human images across diverse scenarios.

Conferences & Workshops

There was an excellent talk from OpenAI on LLMs:

The slides are also available here: https://docs.google.com/presentation/d/1636wKStYdT_yRPbJNrf8MLKpQghuWGDmyHinHhAKeXY/edit#slide=id.g2885e521b53_0_0

There was a six-hour workshop on Responsible and Foundation Models, which I highly recommend watching if you want to learn how to build these models following best practices around open source and explainability for different types of policies. The agenda of the workshop is here. The whole conference can be watched in the following video: