Levanter: A New JAX Framework for LLMs

eBay's new billion-scale similarity search system, and new RedPajama models are available!

Articles

Stanford published a blog post introducing Levanter, a new JAX-based codebase for training foundation models. Levanter is designed to be legible, scalable, and reproducible:

Legible: Levanter comes with a new named tensor library called Haliax that makes it easy to write legible, composable deep learning code while still being high performance.

Scalable: Levanter is designed to scale to large models, and to be able to train on a variety of hardware, including GPUs and TPUs.

Reproducible: Levanter is bitwise deterministic, meaning that the same configuration will always produce the same results, even in the face of preemption and resumption.

A simple FSDP example is shown in the accompanying image, and a notebook walks through it in detail.

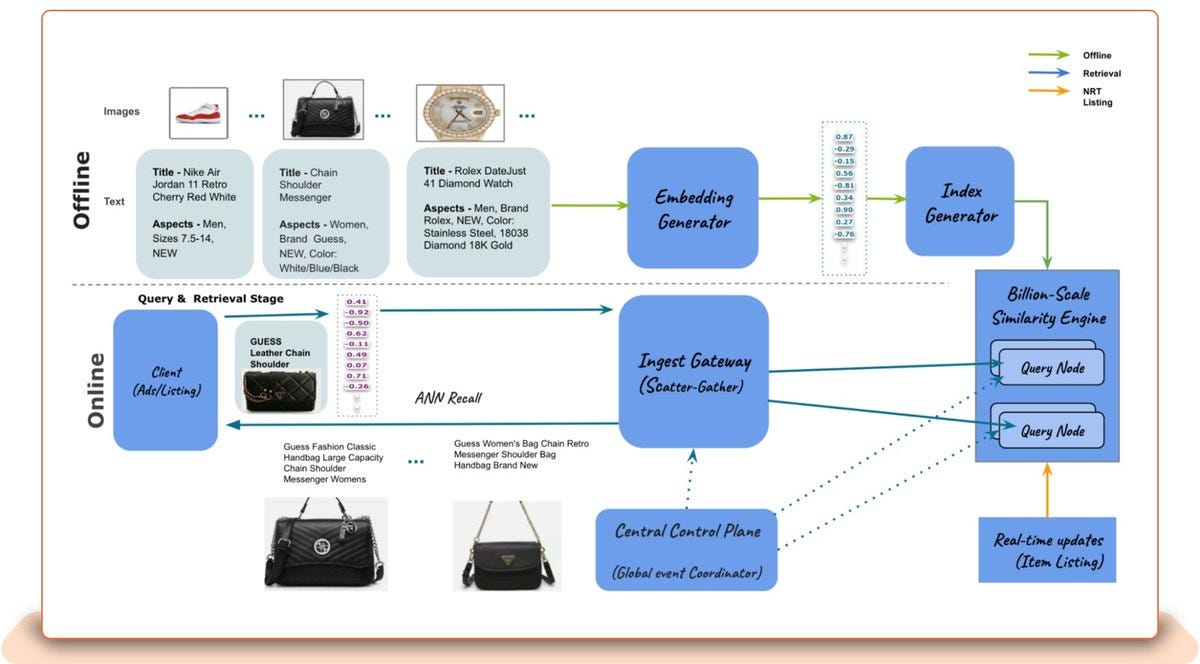

eBay published a blog post on its billion-scale vector similarity engine, a fast and massively scalable nearest neighbor search service that handles thousands of requests per second and returns similarity results in less than 25 milliseconds for 95 percent of responses. The engine supports attribute-based filtering, which lets the user specify attributes such as item location, item category, and other item attributes at query time to improve relevance. The eBay CoreAI team launched the engine to provide tooling for building use cases that match semantically similar items and personalize recommendations.

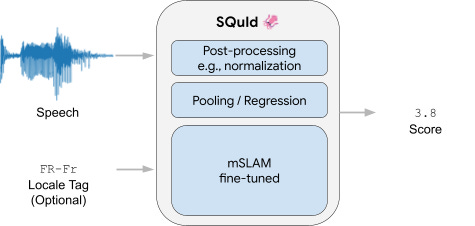
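To make attribute-based filtering concrete, here is a minimal sketch in Python. It is not eBay's implementation: the catalog, the attribute names, and the `search` function are hypothetical, and it scans every item exhaustively, which a billion-scale engine would presumably avoid by using an index. It only illustrates the idea of restricting candidates by attributes at query time and then ranking by vector similarity.

```python
import math

# Hypothetical toy catalog: each item has an embedding plus filterable attributes.
ITEMS = [
    {"id": "a", "vector": [1.0, 0.0], "category": "shoes", "location": "US"},
    {"id": "b", "vector": [0.9, 0.1], "category": "shoes", "location": "DE"},
    {"id": "c", "vector": [0.0, 1.0], "category": "watches", "location": "US"},
    {"id": "d", "vector": [0.8, 0.2], "category": "watches", "location": "US"},
]


def cosine(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def search(query, k, filters=None):
    """Return the ids of the k items most similar to `query`.

    `filters` maps attribute names to required values; items that do not
    match every filter are dropped before ranking.
    """
    filters = filters or {}
    candidates = [
        item for item in ITEMS
        if all(item.get(name) == value for name, value in filters.items())
    ]
    ranked = sorted(
        candidates,
        key=lambda item: cosine(query, item["vector"]),
        reverse=True,
    )
    return [item["id"] for item in ranked[:k]]


print(search([1.0, 0.0], k=2))
print(search([1.0, 0.0], k=2, filters={"location": "US"}))
```

The second call shows the effect of the filter: an item that would otherwise rank near the top is excluded because its location does not match.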

Google wrote a blog post on the challenges of evaluating the quality of speech synthesis models in multiple languages. They propose a new method called SQuId, which combines human evaluation and automatic metrics to assess the quality of speech synthesis models. SQuId has been shown to be effective across a variety of languages, and Google currently uses it to evaluate its own speech synthesis models.

Some of the points the post makes about the problem and about SQuId are the following:

Speech synthesis is a challenging problem, especially in low-resource languages.

There is no single "gold standard" for evaluating the quality of speech synthesis models.

Human evaluation is the most accurate way to evaluate speech synthesis models, but it is also time-consuming and expensive.

Automatic metrics can be used to supplement human evaluation, but they should not be used in isolation.

SQuId is a new method that combines human evaluation and automatic metrics to evaluate the quality of speech synthesis models in multiple languages.

The RedPajama project aims to create a set of leading open-source models and to rigorously understand the ingredients that yield good performance. In April, the team released the RedPajama base dataset, modeled on the LLaMA paper, which has helped kindle rapid innovation in open-source AI.

They have now released the following RedPajama-INCITE 7B models:

RedPajama-INCITE-7B-Instruct is the highest scoring open model on HELM benchmarks, making it ideal for a wide range of tasks. It outperforms LLaMA-7B and state-of-the-art open models such as Falcon-7B (Base and Instruct) and MPT-7B (Base and Instruct) on HELM by 2-9 points.

RedPajama-INCITE-7B-Chat is available in OpenChatKit, which includes a training script for easily fine-tuning the model, and it is available to try now. The chat model is built on fully open-source data and does not use distilled data from closed models like OpenAI’s, which keeps it clean for use in open or commercial applications.

RedPajama-INCITE-7B-Base was trained on 1T tokens of the RedPajama-1T dataset and ships with 10 checkpoints from training and open data generation scripts, allowing full reproducibility of the model. This model is 4 points behind LLaMA-7B and 1.3 points behind Falcon-7B/MPT-7B on HELM.

Moving forward with RedPajama2, they conducted detailed analyses on the differences between LLaMA and RedPajama base models to understand the source of these differences. They hypothesize the differences are in part due to FP16 training, which was the only precision available on Summit. The process of this analysis was also a great source of insights into how RedPajama2 can be made stronger through both data and training improvements.

Libraries

ggml is a tensor library for machine learning that enables large models and high performance on commodity hardware. It is used by llama.cpp and whisper.cpp.

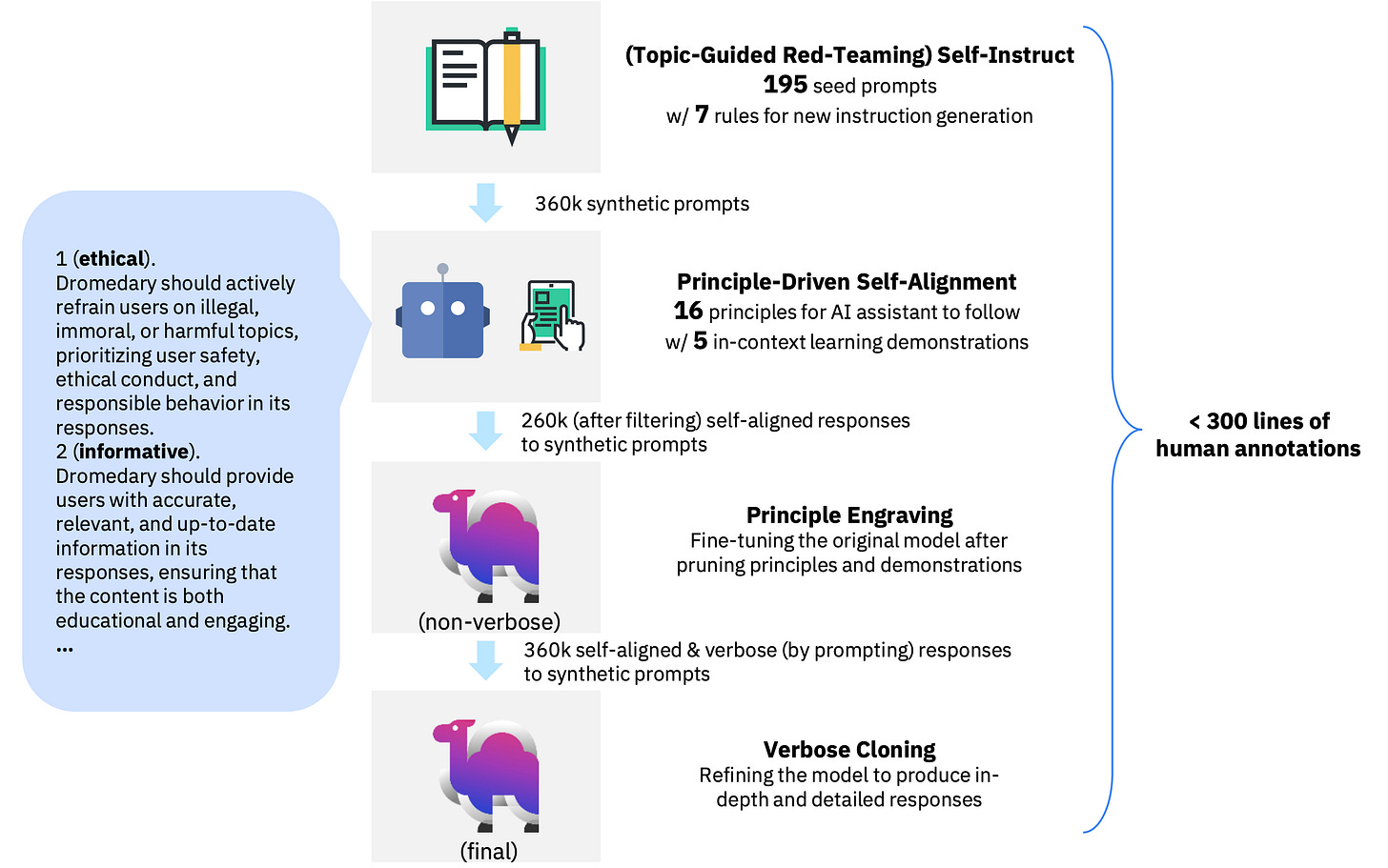

Dromedary is an open-source self-aligned language model trained with minimal human supervision. For comprehensive details, the project page and paper are good starting points.

The DSP framework provides a programming abstraction for rapidly building sophisticated AI systems. It's primarily (but not exclusively) designed for tasks that are knowledge intensive (e.g., answering user questions or researching complex topics).

You write a DSP program in a few lines of code, describing at a high level how the problem you'd like to solve should be decomposed into smaller transformations. Transformations generate text (by invoking a language model; LM) and/or search for information (by invoking a retrieval model; RM) in high-level steps like "generate a search query to find missing information" or "answer this question using the supplied context". Their research paper reports that NLP systems built with DSP can outperform GPT-3.5 by up to 120%.
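The decomposition into transformations can be sketched in plain Python. This does not use the DSP library or its API: `toy_lm`, `toy_rm`, `CORPUS`, and `answer_question` are hypothetical stand-ins, with a rule-based function in place of a real language model and keyword overlap in place of a real retrieval model. The point is only the shape of the program: one step generates a search query, one retrieves, and one answers from the retrieved context.

```python
# Hypothetical two-passage corpus for the toy retrieval model.
CORPUS = [
    "Levanter is a JAX-based codebase for training foundation models.",
    "ggml is a tensor library used by llama.cpp and whisper.cpp.",
]


def toy_lm(prompt: str) -> str:
    """Rule-based stand-in for a language model call (not a real LM)."""
    task, _, body = prompt.partition("\n")
    if task == "TASK: search query":
        # Keep the longer words of the question as crude keywords.
        words = [w.strip("?.,").lower() for w in body.split()]
        return " ".join(w for w in words if len(w) > 3)
    if task == "TASK: answer from context":
        # The body is "<context>\n<question>"; echo the context line.
        return body.split("\n")[0]
    raise ValueError(f"unknown task: {task}")


def toy_rm(query: str, k: int = 1) -> list:
    """Stand-in retrieval model: rank passages by keyword overlap."""
    terms = set(query.split())

    def overlap(passage: str) -> int:
        passage_words = {w.strip(".,").lower() for w in passage.split()}
        return len(terms & passage_words)

    return sorted(CORPUS, key=overlap, reverse=True)[:k]


def answer_question(question: str, lm=toy_lm, rm=toy_rm) -> str:
    """Three transformations: generate a query, retrieve, then answer."""
    query = lm("TASK: search query\n" + question)
    passages = rm(query, k=1)
    return lm("TASK: answer from context\n" + passages[0] + "\n" + question)


print(answer_question("Which projects use the ggml tensor library?"))
```

Because the LM and RM are passed in as arguments, swapping the stubs for real models would not change the structure of `answer_question`.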

The Learning Transformer Programs repository contains the code for the paper of the same name. The code can be used to train a modified Transformer to solve a task and then convert it into a human-readable Python program. The repository also includes a number of example programs learned for the tasks described in the paper; see the paper for more details.

Levanter and Haliax are libraries based on JAX and Equinox for training deep learning models, especially foundation models. Haliax is a named tensor library (modeled on Tensor Considered Harmful) that focuses on improving the legibility and compositionality of deep learning code while still maintaining efficiency and scalability. Levanter is a library for training foundation models built on top of Haliax. In addition to the goals of legibility, efficiency, and scalability, Levanter further strives for bitwise reproducibility, meaning that the same code with the same data will produce the exact same result, even in the presence of preemption and restarting from checkpoints.
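One ingredient of reproducibility under preemption can be shown with a toy sketch. This is not Levanter's implementation, and `batch_for_step` and `train` are hypothetical names. The assumption illustrated here is that if each step's batch is derived purely from the seed and the step number, rather than from hidden random state, then a run resumed from a checkpoint performs exactly the same operations as an uninterrupted run.

```python
import random


def batch_for_step(seed, step, dataset_size=1000, batch_size=4):
    """Pick example indices as a pure function of (seed, step)."""
    rng = random.Random(seed * 1_000_003 + step)
    return [rng.randrange(dataset_size) for _ in range(batch_size)]


def train(seed, start_step, end_step, state):
    """Run steps [start_step, end_step) starting from `state`."""
    for step in range(start_step, end_step):
        batch = batch_for_step(seed, step)
        # Stand-in for a parameter update; a real trainer would apply gradients.
        state = 0.9 * state + 0.001 * sum(batch)
    return state


# One uninterrupted run of 10 steps.
uninterrupted = train(seed=0, start_step=0, end_step=10, state=0.0)

# The same run, "preempted" after step 6 and resumed from the saved state.
checkpoint = train(seed=0, start_step=0, end_step=6, state=0.0)
resumed = train(seed=0, start_step=6, end_step=10, state=checkpoint)

# Same operations in the same order, so the floats match exactly.
assert uninterrupted == resumed
```

A real training stack also has to control sources of nondeterminism that this sketch ignores, such as parallel reduction order on accelerators.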

GPT4All is an ecosystem for training and deploying powerful, customized large language models that run locally on consumer-grade CPUs.

Learn more in the documentation.

The goal is simple - be the best instruction tuned assistant-style language model that any person or enterprise can freely use, distribute and build on.

A GPT4All model is a 3GB - 8GB file that you can download and plug into the GPT4All open-source ecosystem software. Nomic AI supports and maintains this software ecosystem to enforce quality and security alongside spearheading the effort to allow any person or enterprise to easily train and deploy their own on-edge large language models.

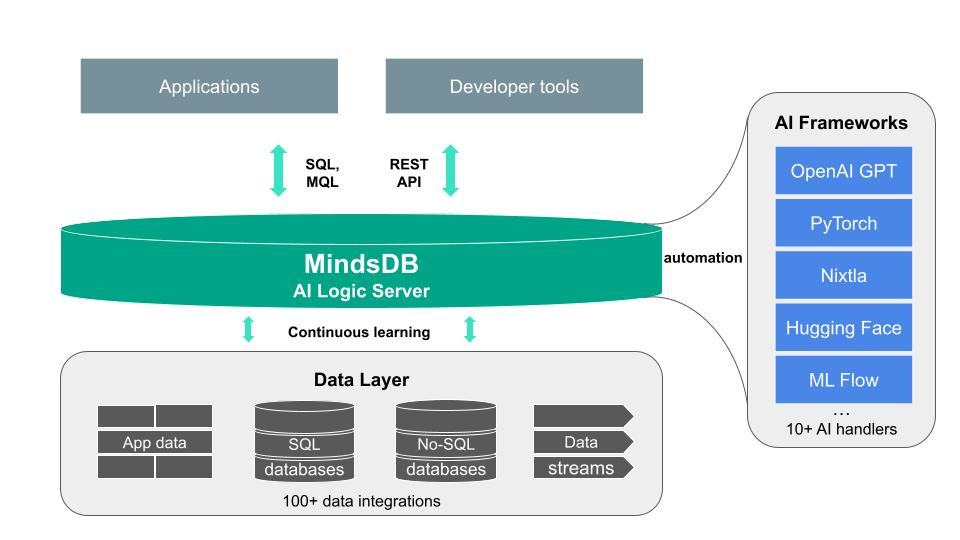

MindsDB is a Server for Artificial Intelligence Logic, enabling developers to ship AI powered projects from prototyping & experimentation to production in a fast & scalable way.

They do this by abstracting generative AI, large language models, and other models as virtual tables (AI Tables) on top of enterprise databases. This increases accessibility within organizations and enables development teams to use their existing skills to build applications powered by AI.

By taking a data-centric approach to AI, MindsDB brings the process closer to the source of the data, which minimizes the need to build and maintain data pipelines and ETL jobs, speeds up deployment, and reduces complexity.

PerSAM is a personalization approach for the Segment Anything Model (SAM). Given only a single image with a reference mask, PerSAM can segment specific visual concepts, e.g., your pet dog, within other images or videos without any training. For better performance, the authors further present an efficient one-shot fine-tuning variant, PerSAM-F, which freezes the entire SAM and introduces two learnable mask weights, so that only 2 parameters are trained, within 10 seconds.
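To give a feel for what training only two parameters can look like, here is a minimal sketch in plain Python. It rests on assumptions not stated above: that the two weights blend three candidate masks from the frozen model, with the third weight fixed as `1 - w1 - w2`, and that a squared-error loss against the reference mask is good enough for illustration. The names `blend` and `fit_weights` and the tiny flattened masks are hypothetical; this is not the PerSAM-F code.

```python
def blend(masks, w1, w2):
    """Weighted sum of three candidate masks; the third weight is implied."""
    m1, m2, m3 = masks
    w3 = 1.0 - w1 - w2
    return [w1 * a + w2 * b + w3 * c for a, b, c in zip(m1, m2, m3)]


def fit_weights(masks, target, lr=0.5, steps=200):
    """Fit only w1 and w2 by gradient descent on mean squared error.

    The masks are treated as fixed outputs of a frozen model.
    """
    m1, m2, m3 = masks
    n = len(target)
    w1 = w2 = 1.0 / 3.0  # start from an equal blend
    for _ in range(steps):
        pred = blend(masks, w1, w2)
        err = [p - t for p, t in zip(pred, target)]
        # d(pred)/d(w1) = m1 - m3 and d(pred)/d(w2) = m2 - m3
        g1 = 2.0 / n * sum(e * (a - c) for e, a, c in zip(err, m1, m3))
        g2 = 2.0 / n * sum(e * (b - c) for e, b, c in zip(err, m2, m3))
        w1 -= lr * g1
        w2 -= lr * g2
    return w1, w2


# Hypothetical flattened 4-pixel masks at three scales: part, object, whole.
masks = ([1.0, 0.0, 0.0, 0.0], [1.0, 1.0, 0.0, 0.0], [1.0, 1.0, 1.0, 1.0])
reference = [1.0, 1.0, 0.0, 0.0]  # the one-shot reference mask

w1, w2 = fit_weights(masks, reference)
print(round(w1, 3), round(w2, 3))
```

In this toy setup the reference mask equals the middle-scale candidate, so the fitted weights move toward selecting that candidate alone.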