How to train your decision making agents

MiniTorch, TensorRT's new release, fine-tune your GPT-3 model

Happy New Year!

I changed the order of the sections: based on feedback, libraries are now the top-most section.

I will also bring back the papers section, which was cut around October this year, under the title “Paper of the Week”.

If you have any other ideas or suggestions, feel free to respond to this email.

I am also hiring for a number of different positions (ML engineers) in Seattle and Menlo Park at Meta. Shoot me an email at vbugra@fb.com if you are interested.

Without further ado, let’s dive in!

Bugra

Libraries

HuggingFace released the Data Measurements Tool, an interactive interface and open-source library that lets dataset creators and users automatically calculate metrics that are meaningful and useful for responsible data development. A good example is here.

Minitorch is a library for teaching PyTorch. It is a DIY teaching library for machine learning engineers who wish to learn about the internal concepts underlying deep learning systems. It is a pure Python re-implementation of the Torch API, designed to be simple, easy to read, tested, and incremental.
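To give a flavor of the kind of internal concept such a library teaches, here is a minimal sketch of reverse-mode automatic differentiation on scalars. This is my own toy illustration, not Minitorch's code or API; the `Scalar` class and its methods are hypothetical names.

```python
class Scalar:
    """A number that remembers how it was computed so gradients can flow back.

    Hypothetical teaching class, not part of Minitorch or PyTorch.
    """

    def __init__(self, value, parents=()):
        self.value = float(value)
        self.grad = 0.0
        # Each entry is (parent_scalar, derivative of this node w.r.t. that parent).
        self.parents = parents

    def __add__(self, other):
        other = other if isinstance(other, Scalar) else Scalar(other)
        return Scalar(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        other = other if isinstance(other, Scalar) else Scalar(other)
        return Scalar(self.value * other.value,
                      ((self, other.value), (other, self.value)))

    def backward(self):
        # Post-order DFS; reversing it visits every node before its inputs.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent, _ in node.parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            for parent, local in node.parents:
                # Chain rule: accumulate the contribution from this consumer.
                parent.grad += local * node.grad


x = Scalar(3.0)
y = Scalar(4.0)
z = x * y + x      # z = x*y + x
z.backward()
print(z.value, x.grad, y.grad)
```

Here `x.grad` holds dz/dx = y + 1 and `y.grad` holds dz/dy = x. A real tensor library adds broadcasting, many more operators, and efficient storage on top of the same idea.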

PandasTutor is a visualization library/tool that allows you to visualize operations executed against Pandas dataframes.

Training BERT on an academic budget is a library that allows you to train a masked-language BERT model in 24 hours on a single machine.

Articles

The Gradient published an article on how to choose among various training approaches for building intelligent agents in this blog post.

TL;DR: they come up with five different methods to build rewarding mechanisms. The researchers break these rewarding mechanisms down more granularly in order to distinguish different types of feedback.

Evaluation

Feedback is instantaneous and frequent.

The human trainer needs to be good at judging skill on the task rather than being an expert at the task.
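As a rough sketch of what learning from evaluative feedback can look like, the toy loop below has an agent try actions, receive a scalar score from a (simulated) human, and keep a running average of that score per action. The function names, the three-action setup, and the epsilon-greedy exploration are my own assumptions for illustration, not the method from the article.

```python
import random


def train_from_evaluations(actions, human_feedback, steps=200, epsilon=0.1, seed=0):
    """Estimate how much the trainer likes each action from scalar feedback."""
    rng = random.Random(seed)
    estimate = {a: 0.0 for a in actions}
    count = {a: 0 for a in actions}
    for _ in range(steps):
        # Mostly act greedily on current estimates, occasionally explore.
        if rng.random() < epsilon:
            action = rng.choice(actions)
        else:
            action = max(actions, key=lambda a: estimate[a])
        signal = human_feedback(action)          # instantaneous evaluation
        count[action] += 1
        # Incremental mean of the feedback seen for this action.
        estimate[action] += (signal - estimate[action]) / count[action]
    return estimate


def simulated_trainer(action):
    # Hypothetical stand-in for a human who only judges, never demonstrates.
    return 1.0 if action == "right" else -1.0


learned = train_from_evaluations(["left", "right", "stay"], simulated_trainer)
best = max(learned, key=learned.get)
print(best, learned)
```

Note that the trainer here never shows the agent what to do; it only scores what the agent did, which is why judging skill matters more than task expertise.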

Preference

The learning agent presents two of its learned behavior trajectories to the human trainer, and the human tells the agent which trajectory is preferable.

Goals

In hierarchical imitation, the idea is to have the human trainer specify high-level goals. The agent will then try to accomplish each goal by performing a sequence of low-level actions.

Attention

Learning to attend can help select important state features in high-dimensional state space, and help the agent infer the target or goal of an observed action by the human teachers.

Demonstrations without action labels

The setting is very much like standard imitation learning, except the agent does not have access to labels for the actions demonstrated by the human trainer.
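Of the five feedback types above, the preference setting is probably the easiest to sketch in a few lines. One common way to turn pairwise preferences into a reward function is a Bradley-Terry style logistic model: the probability that the trainer prefers trajectory A over B is a sigmoid of the reward difference. The sketch below assumes each trajectory is summarized by a small feature vector and that the reward is linear in those features; both are my simplifying assumptions, and the feature names are made up.

```python
import math


def fit_reward_from_preferences(preferences, n_features, lr=0.5, epochs=200):
    """Fit linear reward weights from (preferred, rejected) feature pairs."""
    w = [0.0] * n_features
    for _ in range(epochs):
        for better, worse in preferences:
            diff = [b - c for b, c in zip(better, worse)]
            margin = sum(wi * di for wi, di in zip(w, diff))
            # Modeled probability that the trainer picks `better`.
            p_better = 1.0 / (1.0 + math.exp(-margin))
            # Gradient ascent on the log-likelihood of the observed choice.
            for i in range(n_features):
                w[i] += lr * (1.0 - p_better) * diff[i]
    return w


def reward(w, features):
    return sum(wi * fi for wi, fi in zip(w, features))


# Hypothetical features per trajectory: (progress toward goal, number of collisions).
preferences = [
    ((0.9, 0.0), (0.4, 0.0)),   # more progress preferred
    ((0.8, 1.0), (0.8, 3.0)),   # fewer collisions preferred
    ((0.7, 0.0), (0.9, 2.0)),   # slightly less progress but no collisions preferred
]
weights = fit_reward_from_preferences(preferences, n_features=2)
print(weights)
```

The learned weights can then score new trajectories via `reward`, and the agent can be trained against that learned reward instead of asking the human every time.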

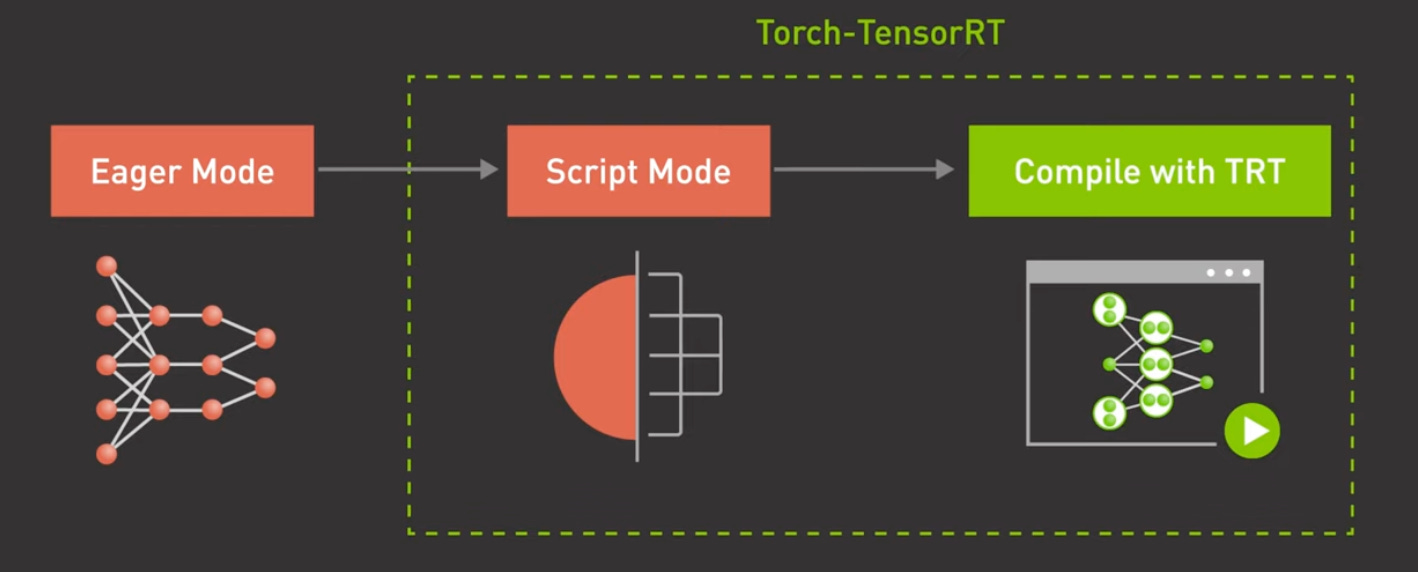

NVIDIA launched TensorRT’s new version through this blog post.

TensorRT provides a compilation path for TensorFlow and PyTorch models, aimed especially at production use cases and at customers who want to make their serving infrastructure more efficient. This assumes that you use NVIDIA GPUs in your serving layer, as TensorRT’s lowered models can only be deployed to NVIDIA GPUs as of now.

This blog post is an informative step-by-step walkthrough of how to debug performance problems in machine learning workflows. If you are using an NVIDIA GPU, it also explains how to use NVIDIA’s Nsight tool to find these bottlenecks.
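Before reaching for a full profiler, a cheap first step is often to time the coarse stages of the training loop and see which one dominates. The sketch below is my own illustration of that idea using only the standard library; `load_batch` and `train_step` are hypothetical stand-ins, and it is not taken from the blog post.

```python
import time
from collections import defaultdict
from contextlib import contextmanager

stage_seconds = defaultdict(float)


@contextmanager
def timed(stage):
    """Accumulate wall-clock time spent inside the `with` block under `stage`."""
    start = time.perf_counter()
    try:
        yield
    finally:
        stage_seconds[stage] += time.perf_counter() - start


def load_batch():
    time.sleep(0.02)            # stand-in for disk reads and preprocessing
    return list(range(1000))


def train_step(batch):
    return sum(x * x for x in batch)   # stand-in for the model update


for _ in range(5):
    with timed("data_loading"):
        batch = load_batch()
    with timed("train_step"):
        loss = train_step(batch)

slowest = max(stage_seconds, key=stage_seconds.get)
for stage, seconds in sorted(stage_seconds.items(), key=lambda kv: -kv[1]):
    print(f"{stage:>12}: {seconds:.3f}s")
print("slowest stage:", slowest)
```

One caveat: GPU kernels are launched asynchronously, so wall-clock timers like this can misattribute GPU time unless you synchronize first. That is exactly the kind of case where a tool such as Nsight is the better fit.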

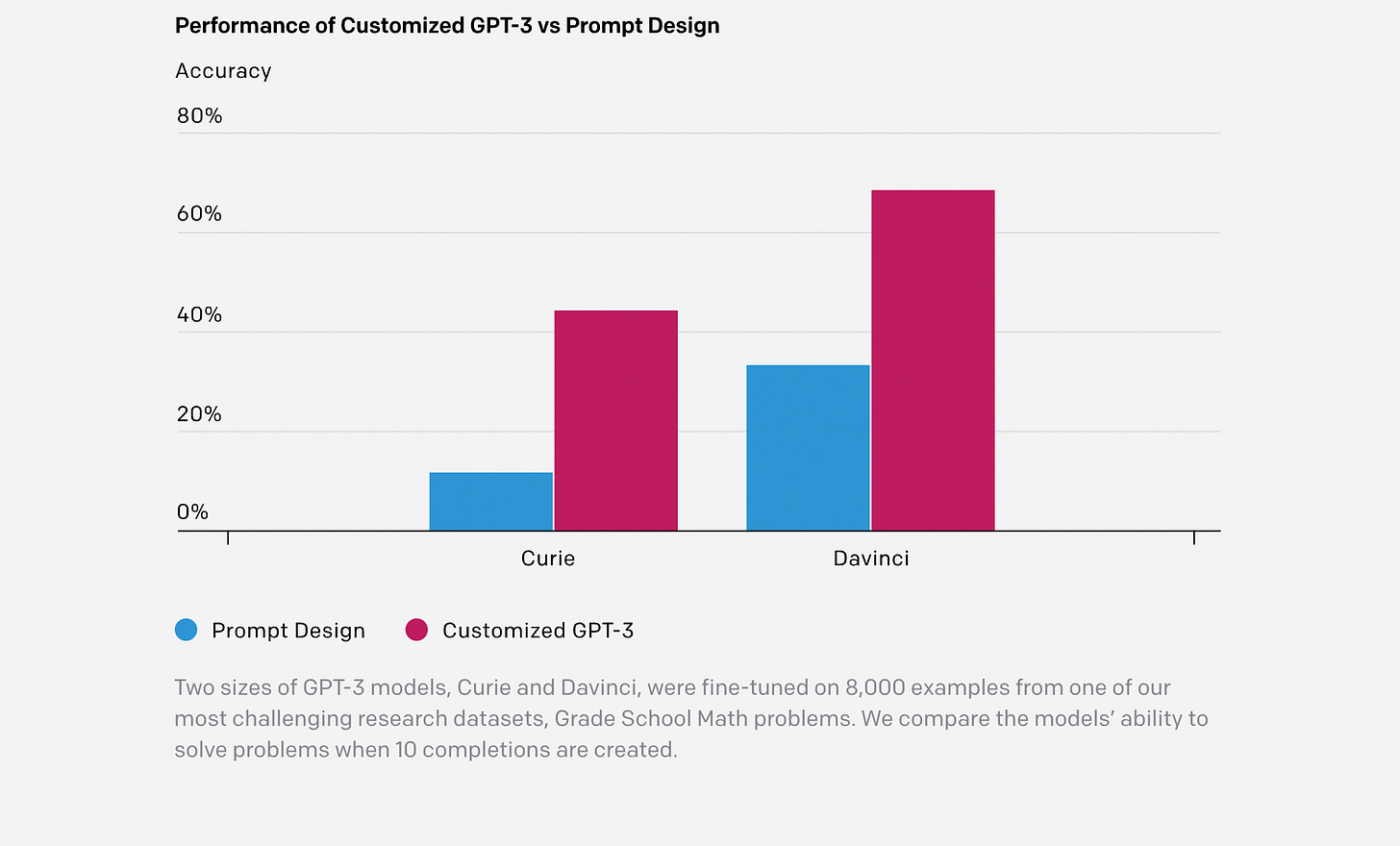

OpenAI released a Python package for their GPT-3 model that allows fine-tuning, as described in this post.
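As far as I know, fine-tuning at the time of this release expected training data as a JSONL file with one `prompt`/`completion` pair per line. The sketch below only prepares such a file with the standard library; the example records, separator, and file name are made up, and you should check OpenAI's current documentation for the exact format and upload steps rather than rely on this.

```python
import json
import os
import tempfile

# Hypothetical training examples; the " ->" separator and trailing newline
# are illustrative conventions, not requirements confirmed by the post.
examples = [
    {"prompt": "Review: Great battery life ->", "completion": " positive\n"},
    {"prompt": "Review: Stopped working in a week ->", "completion": " negative\n"},
]


def write_jsonl(records, path):
    """Write one JSON object per line."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record) + "\n")


path = os.path.join(tempfile.gettempdir(), "fine_tune_train.jsonl")
write_jsonl(examples, path)

# Read the file back to sanity-check that every line parses.
with open(path, encoding="utf-8") as f:
    loaded = [json.loads(line) for line in f]
print(len(loaded), "examples written to", path)
```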

Conferences

Cross-Model Compatibility aims to introduce the problem of cross-model compatibility in computer vision to the community. Compatibility becomes a concern when multiple models are used to solve a similar problem, which is common in machine learning services and systems.

Most of the videos in this tutorial are available on YouTube.