Google Research, 2022 & Beyond Series

Google's understand how to build better UIs through Spotlight, Arxiv Integrates HuggingFace for demos section

We are heavy Google Research posts this week, enjoy specifically the 2022 & Beyond series!

Articles

Google Research, 2022 & Beyond Series has a number of amazing blog posts:

Natural Sciences: https://ai.googleblog.com/2023/02/google-research-2022-beyond-natural.html

Language, vision and generative models: https://ai.googleblog.com/2023/01/google-research-2022-beyond-language.html

Algorithms for efficient deep learning: https://ai.googleblog.com/2023/02/google-research-2022-beyond-algorithms.html

Responsible AI: https://ai.googleblog.com/2023/01/google-research-2022-beyond-responsible.html

Algorithmic advances: https://ai.googleblog.com/2023/02/google-research-2022-beyond-algorithmic.html

Health: https://ai.googleblog.com/2023/02/google-research-2022-beyond-health.html

ML & computer systems: https://ai.googleblog.com/2023/02/google-research-2022-beyond-ml-computer.html

Robotics: https://ai.googleblog.com/2023/02/google-research-2022-beyond-robotics.html

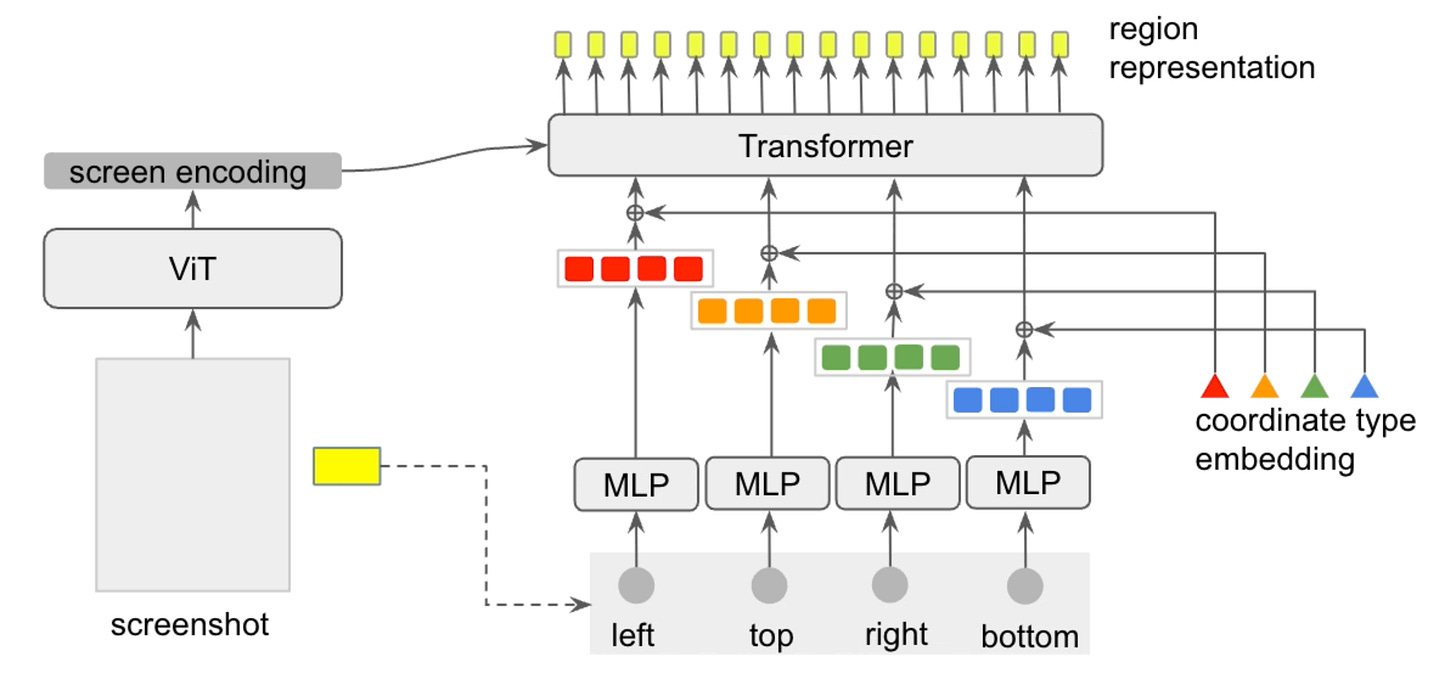

Google presents a vision-only approach that aims to achieve general UI understanding completely from raw pixels. They introduce a unified approach to represent diverse UI tasks, the information for which can be universally represented by two core modalities: vision and language. The vision modality captures what a person would see from a UI screen, and the language modality can be natural language or any token sequences related to the task. They demonstrate Spotlight model does well for tasks including widget captioning, screen summarization, command grounding and tappability prediction.

Arxiv integrates Hugging Face Spaces through a Demo tab that includes links to demos created by the community or the authors themselves. By going to the Demos tab of the paper in the arXiv categories of computer science, statistics, or electrical engineering and systems science, open source demos can be observed from the HF Spaces. Bert paper has demos from HF spaces and Replicate.

Libraries

MLCommons Algorithmic Efficiency is a benchmark and competition measuring neural network training speedups due to algorithmic improvements in both training algorithms and models. The GitHub repository holds the competition rules and the benchmark code to run it.

Paella, a novel text-to-image model requiring less than 10 steps to sample high-fidelity images, using a speed-optimized architecture allowing to sample a single image in less than 500 ms, while having 573M parameters. The model operates on a compressed & quantized latent space, it is conditioned on CLIP embeddings and uses an improved sampling function over previous works. Aside from text-conditional image generation, our model is able to do latent space interpolation and image manipulations such as inpainting, outpainting, and structural editing.

genv lets you easily control, configure and monitor the GPU resources that you are using.

The merlin-dataloader lets you quickly train recommender models for TensorFlow, PyTorch and JAX. It eliminates the biggest bottleneck in training recommender models, by providing GPU optimized dataloaders that read data directly into the GPU, and then do a 0-copy transfer to TensorFlow and PyTorch using dlpack.

While deep learning models have replaced hand-designed features across many domains, these models are still trained with hand-designed optimizers. In this work, we leverage the same scaling approach behind the success of deep learning to learn versatile optimizers. We train an optimizer for deep learning which is itself a small neural network that ingests gradients and outputs parameter updates. Meta-trained with approximately four thousand TPU-months of compute on a wide variety of optimization tasks, our optimizer not only exhibits compelling performance, but optimizes in interesting and unexpected ways. It requires no hyperparameter tuning, instead automatically adapting to the specifics of the problem being optimized. VeLO is a learned optimizer: instead of updating parameters with SGD or Adam, it was meta-learned on thousands of deep learning tasks.

tsai is an open-source deep learning package built on top of Pytorch & fastai focused on state-of-the-art techniques for time series tasks like classification, regression, forecasting, imputation