Libraries

Stanza is an NLP library from Stanford that provides a collection of accurate and efficient tools for the linguistic analysis of many human languages.

Circuit Training is an open-source framework for generating chip floor plans with distributed deep reinforcement learning.

Mistral is a framework for transparent and accessible large-scale language model training, built with Hugging Face 🤗. It also includes tools and helpful scripts for incorporating new pre-training datasets, various schemes for single-node and distributed training and, importantly, scripts for evaluation.

Articles

OpenAI developed another model (a neural theorem prover) that can solve Math Olympiad problems. This blog post shows examples of theorems that it can prove.

DeepMind built a transformer-based sequence-to-sequence model to solve competitive programming problems. Note that this differs from most other models in that it tries to build performant and efficient solutions rather than just “a solution”. It uses multi-query attention and a number of neat tricks to build a 41B-parameter transformer, explained in much more detail in the post and the paper.

You can play with the model here on a number of different problems.
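To make the multi-query attention mentioned above a bit more concrete, here is a minimal sketch in plain Python. It assumes the generic formulation, in which every query head attends over one shared set of keys and values, and it leaves out the learned projections, masking and everything else a real model needs. The function names and toy numbers are illustrative and are not taken from DeepMind's code.

```python
import math


def softmax(xs):
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def multi_query_attention(queries, keys, values):
    """Scaled dot-product attention with one key/value set shared by all heads.

    queries: [num_heads][seq_len][d]   (one set of queries per head)
    keys:    [seq_len][d]              (shared across heads)
    values:  [seq_len][d_v]            (shared across heads)
    returns: [num_heads][seq_len][d_v]
    """
    scale = 1.0 / math.sqrt(len(keys[0]))
    d_v = len(values[0])
    outputs = []
    for head_queries in queries:
        head_out = []
        for q in head_queries:
            scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
            weights = softmax(scores)
            head_out.append(
                [sum(w * v[j] for w, v in zip(weights, values)) for j in range(d_v)]
            )
        outputs.append(head_out)
    return outputs


# Toy inputs (hypothetical values): 2 heads, 2 positions, dimension 2.
keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[1.0, 2.0], [3.0, 4.0]]
queries = [
    [[0.0, 0.0], [0.0, 0.0]],    # head 0: zero queries give uniform attention
    [[10.0, 0.0], [0.0, 10.0]],  # head 1: each position focuses on one key
]
out = multi_query_attention(queries, keys, values)
```

Since the keys and values are stored once instead of once per head, the cache kept around during sampling is smaller than in standard multi-head attention, which is the usual motivation for this variant.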

OpenAI published a post introducing a number of model APIs for embedding generation. There are three families of embedding models for different functionalities: text search, text similarity and code search. Each family includes up to four models on a spectrum of capability:

Ada (1024 dimensions),

Babbage (2048 dimensions),

Curie (4096 dimensions),

Davinci (12288 dimensions).

Davinci is the most advanced and the most accurate on a number of evaluation tasks; the downside is that it is more expensive and slower to compute.
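As a rough illustration of how such embeddings are typically used for text search or similarity, the sketch below ranks documents by cosine similarity to a query vector and estimates the storage cost per vector for each model size. The toy 3-dimensional vectors, the helper names and the assumption of 4-byte floats are all made up for the example; no API is called.

```python
import math

# Embedding sizes as listed in the post.
EMBEDDING_DIMS = {"ada": 1024, "babbage": 2048, "curie": 4096, "davinci": 12288}


def cosine_similarity(a, b):
    # Dot product divided by the product of the vector norms.
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_documents(query_vec, doc_vecs):
    """Return document ids sorted by descending similarity to the query."""
    scores = {doc_id: cosine_similarity(query_vec, vec) for doc_id, vec in doc_vecs.items()}
    return sorted(scores, key=scores.get, reverse=True)


def bytes_per_vector(dims, bytes_per_float=4):
    # Assumes each component is stored as a 32-bit float.
    return dims * bytes_per_float


# Toy vectors standing in for real embeddings (hypothetical values).
docs = {
    "cats": [0.9, 0.1, 0.0],
    "dogs": [0.7, 0.3, 0.1],
    "stocks": [0.0, 0.2, 0.9],
}
query = [1.0, 0.0, 0.0]

ranking = rank_documents(query, docs)
storage = {name: bytes_per_vector(dims) for name, dims in EMBEDDING_DIMS.items()}
```

Under the 4-byte assumption, a Davinci vector takes twelve times the space of an Ada vector, which is one concrete way the larger models cost more downstream.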

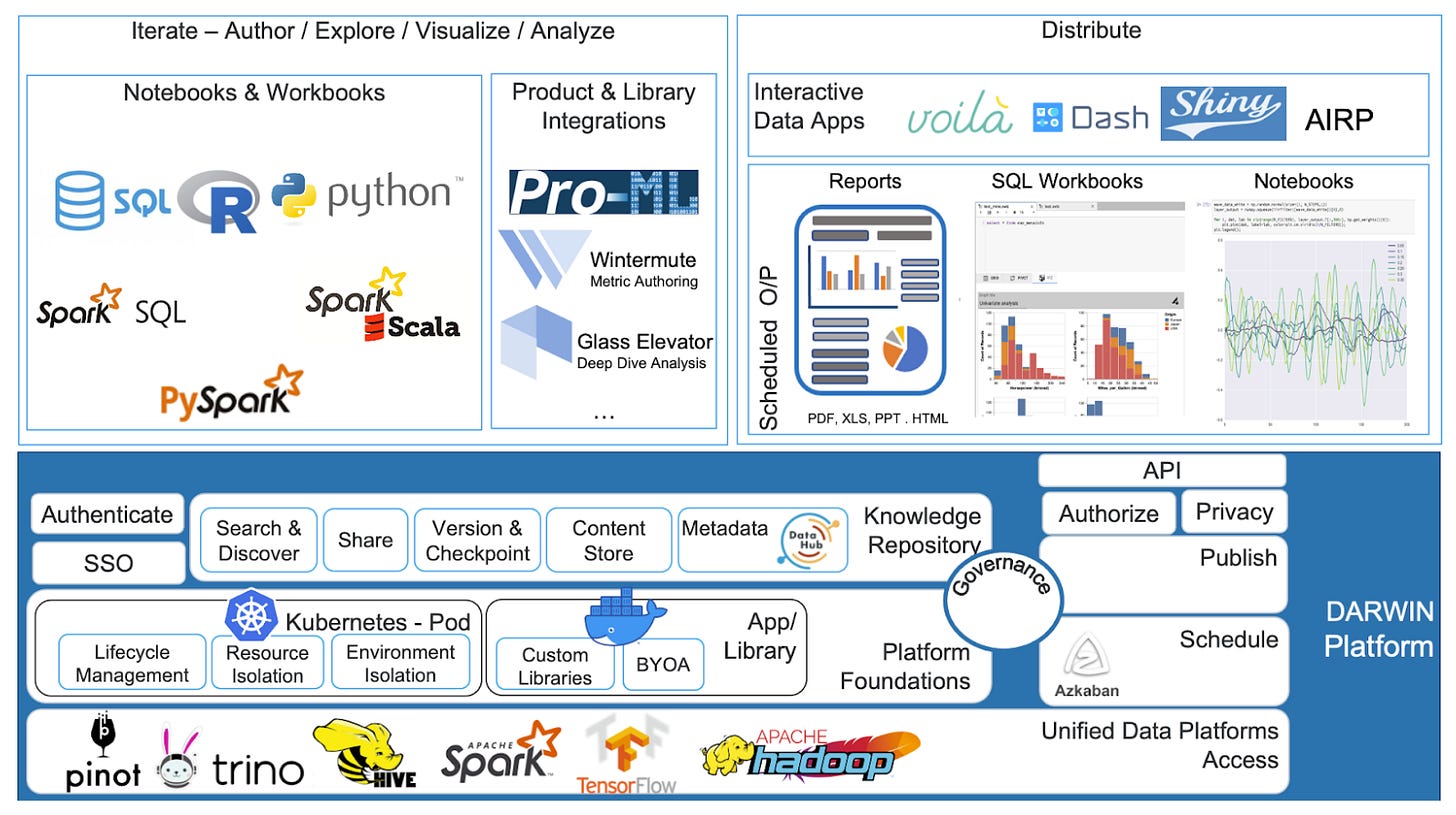

LinkedIn wrote about their ML platform stack in this blog post. They use a number of different open-source tools for their platform: Docker, Kubernetes, Pinot and Azkaban.

Sebastian Ruder wrote an excellent writeup of ML and NLP research in 2021 in this blog post.

It covers the following sections in detail:

I especially liked Beyond the Transformer and Efficient Methods.