CVPR and ICLR Keynote videos

AugLy and Kats are amazing libraries, and be sure to check out the HuBERT paper!

CVPR took place last week, and we have a number of talks and tutorials from it in the Tutorials & Workshops section below. If you are interested in deep learning and machine learning applications in the vision domain, do check them out.

At ICLR, there was a keynote on Geometric Deep Learning, which is the must-watch video this week. If you watch only one video, make it that one. Karpathy's talk on how Tesla is building its self-driving capabilities is a great watch, too.

Substack is telling me I am getting close to the word limit, so without further ado, enjoy this week's newsletter!

Bugra

Articles

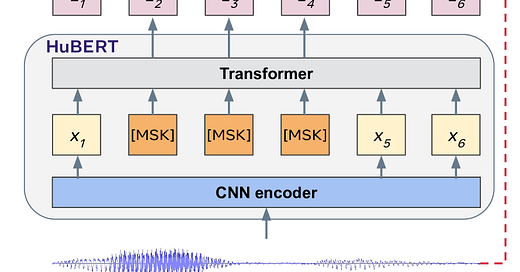

Facebook published a new article on HuBERT, a self-supervised model for speech recognition. As shown above, one branch encodes the speech with a CNN encoder followed by a Transformer; on the other side, K-means clustering turns the speech into discrete targets, and the Transformer learns to predict those targets for masked frames. All of the models are available on the HuggingFace Hub.
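To make the "discrete targets" idea concrete, here is a toy sketch of the offline clustering step in plain Python. It assumes tiny 2-D "frame features" and a deterministic farthest-point initialization; the paper clusters MFCC features in the first iteration (and hidden Transformer features in later ones) with many more clusters. The helper name `hubert_style_targets` is hypothetical, and the Transformer itself is not shown.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest(f, centroids):
    """Index of the centroid closest to feature vector f."""
    return min(range(len(centroids)), key=lambda c: sq_dist(f, centroids[c]))


def kmeans(features, k, iters=20):
    # Deterministic farthest-point initialization (a simplification of k-means++).
    centroids = [list(features[0])]
    while len(centroids) < k:
        far = max(features, key=lambda f: min(sq_dist(f, c) for c in centroids))
        centroids.append(list(far))
    for _ in range(iters):
        # Assignment step: group frames by nearest centroid.
        clusters = [[] for _ in range(k)]
        for f in features:
            clusters[nearest(f, centroids)].append(f)
        # Update step: move each centroid to the mean of its members.
        for c, members in enumerate(clusters):
            if members:
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return centroids


def hubert_style_targets(frames, k, masked):
    """Cluster every frame into a discrete unit; the model would be trained
    to predict the units at the masked positions (hypothetical helper)."""
    centroids = kmeans(frames, k)
    units = [nearest(f, centroids) for f in frames]
    return units, {t: units[t] for t in masked}


frames = [[0.0, 0.1], [0.2, 0.0], [0.1, 0.1], [5.0, 5.1], [5.2, 4.9], [4.9, 5.0]]
units, targets = hubert_style_targets(frames, k=2, masked=[1, 4])
print(units, targets)
```

In the real model, the masked frames are hidden from the Transformer's input, and the loss is computed on predicting their cluster IDs.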

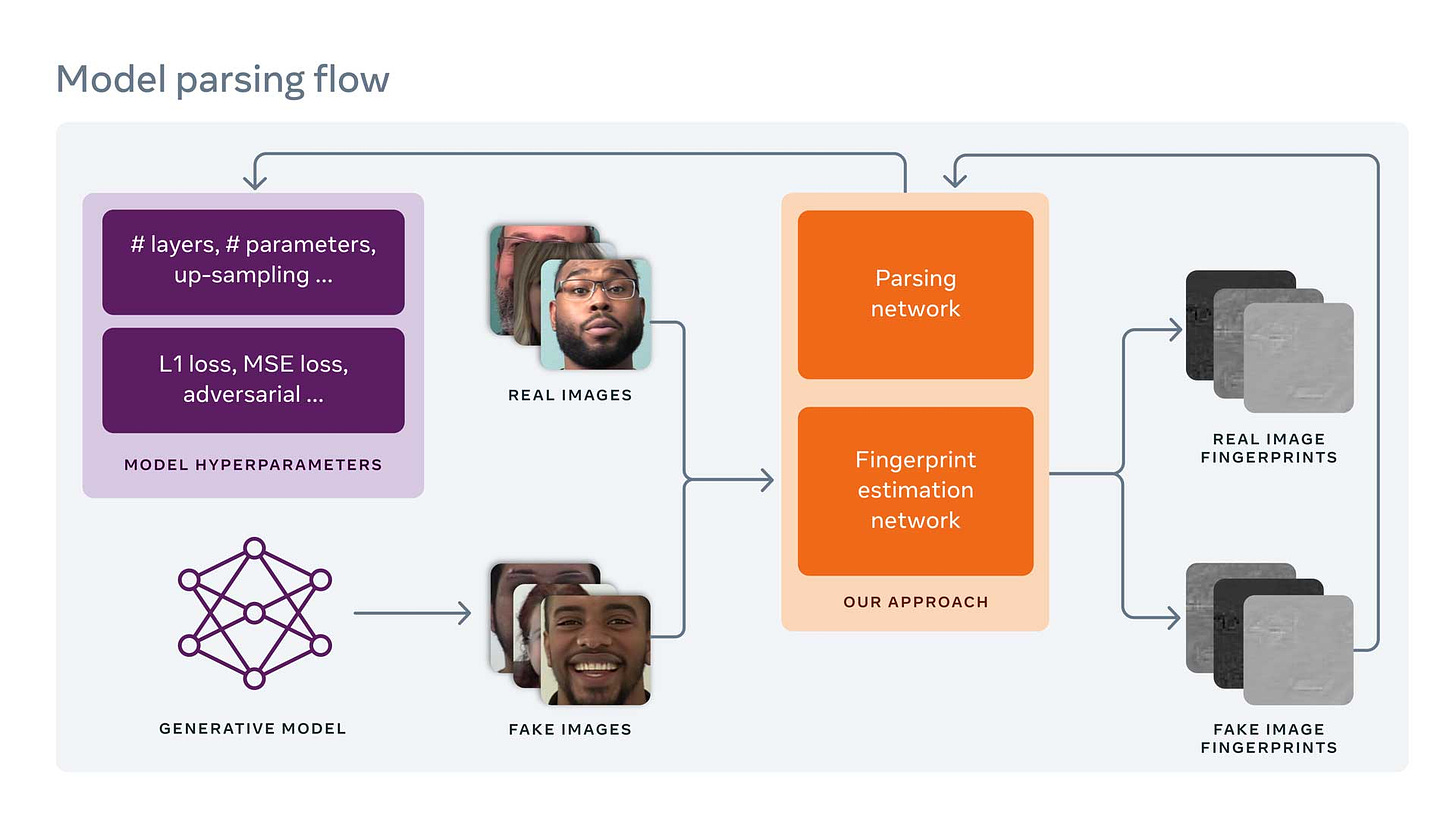

Facebook wrote a post on creating fingerprints for images and detecting fake images by comparing these fingerprints across images.

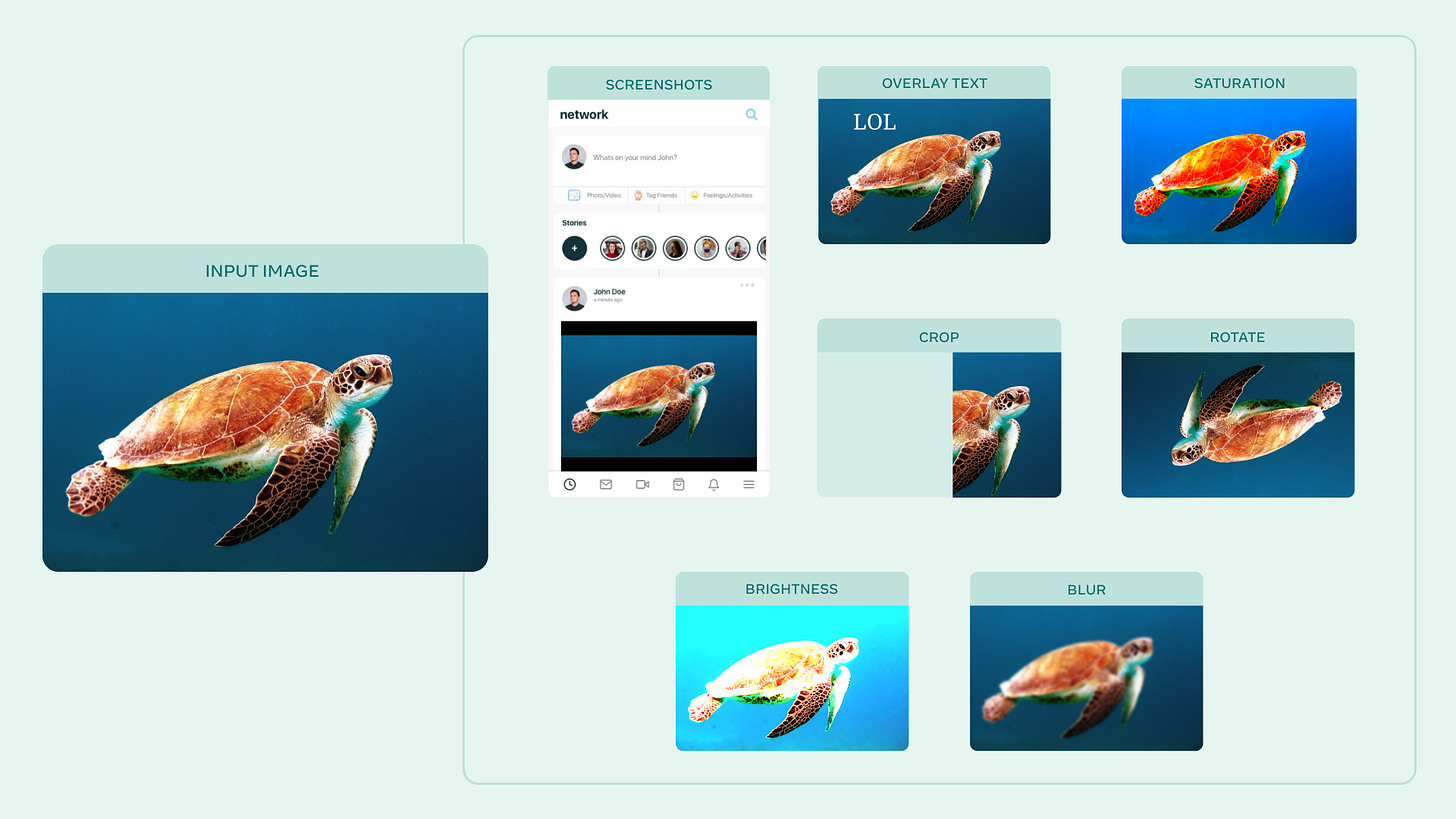

Facebook open-sourced AugLy, a Python library for data augmentation across audio, image, text, and video. It is available here.

Romain Tavenard wrote an introduction to Dynamic Time Warping. It is an introductory post that first gives the mathematical background and then moves on to the implementation.
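The core of DTW is a short dynamic program. The sketch below is a minimal version for 1-D sequences, assuming a squared-difference local cost with a square root at the end (one common convention) and no window constraint; it is not taken from the post.

```python
import math


def dtw(x, y):
    """Dynamic Time Warping distance between two 1-D sequences."""
    n, m = len(x), len(y)
    # cost[i][j]: best accumulated cost aligning x[:i] with y[:j].
    cost = [[math.inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = (x[i - 1] - y[j - 1]) ** 2
            # Extend the cheapest of: insertion, deletion, or match.
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return math.sqrt(cost[n][m])


print(dtw([1, 2, 3], [1, 2, 2, 3]))  # time-shifted copy of the same shape
```

Because the warping path may repeat elements, `[1, 2, 3]` and `[1, 2, 2, 3]` align perfectly, which a plain Euclidean distance could not even compute for sequences of different lengths.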

Jakub Tomczak wrote an introduction to neural compression. It starts with basic compression schemes like JPEG and then introduces neural network-based compression.
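A common way to train learned codecs (not necessarily the exact formulation used in the post) is to minimize a rate-distortion objective: the expected number of bits needed to code the quantized latent $\hat{y}$ under a learned entropy model $p$, plus a weighted distortion $d$ between the input $x$ and its reconstruction $\hat{x}$. Here $f$ is the encoder, $g$ the decoder, $Q$ the quantizer, and $\lambda$ trades bitrate against quality:

```latex
\mathcal{L} = \mathbb{E}_{x}\left[-\log_2 p(\hat{y})\right] + \lambda \, \mathbb{E}_{x}\left[d(x, \hat{x})\right],
\qquad \hat{y} = Q\big(f(x)\big), \quad \hat{x} = g(\hat{y})
```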

Facebook AI wrote about its five pillars of Responsible AI.

Papers

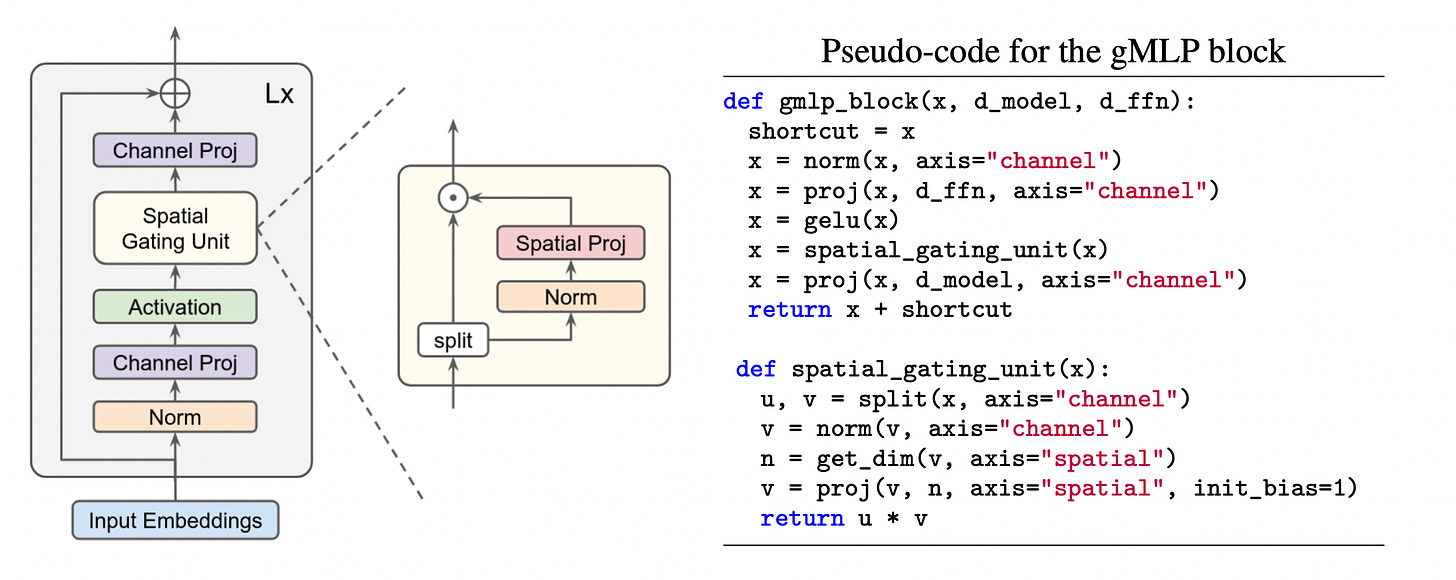

Pay Attention to MLPs is yet another paper that tries to rival attention-based Transformers, this time with simple MLPs (multi-layer perceptrons). The authors propose a spatial gating unit and claim that positional encodings are not as important.
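The spatial gating unit can be sketched in a few lines: split the channels in half, normalize the second half, project it across the token (sequence) dimension with a learned matrix, and use the result to gate the first half. This pure-Python version is a minimal sketch, assuming a layer norm without learnable scale and shift; following the paper's description, `w` is initialized near zero and `b` to ones, so the unit starts out close to passing the first half through unchanged.

```python
import math


def layer_norm(row, eps=1e-5):
    """Normalize one token's channels to zero mean and unit variance (no learnable affine)."""
    mean = sum(row) / len(row)
    var = sum((v - mean) ** 2 for v in row) / len(row)
    return [(v - mean) / math.sqrt(var + eps) for v in row]


def spatial_gating_unit(z, w, b):
    """z: n tokens x d channels (d even); w: n x n spatial weights; b: n biases."""
    n, half = len(z), len(z[0]) // 2
    z1 = [row[:half] for row in z]
    z2 = [layer_norm(row[half:]) for row in z]
    # Spatial projection: token i's gate mixes z2 over all tokens j, channel by channel.
    gate = [[sum(w[i][j] * z2[j][c] for j in range(n)) + b[i] for c in range(half)]
            for i in range(n)]
    # Element-wise gating of the first half of the channels.
    return [[z1[i][c] * gate[i][c] for c in range(half)] for i in range(n)]


z = [[1.0, 2.0, 3.0, 5.0], [4.0, 5.0, 6.0, 7.0], [0.5, -1.0, 2.0, 2.0]]
zeros = [[0.0] * 3 for _ in range(3)]
print(spatial_gating_unit(z, zeros, [1.0, 1.0, 1.0]))  # near-identity at initialization
```

Since all token mixing happens through `w`, whose entries depend on absolute positions, this is one intuition for why explicit positional encodings matter less here.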

Labml has a nice section for machine learning papers; it also collects tweets about each paper on a single page, which makes it easy to skim and then drill down into the ones you are interested in. The paper mentioned above is here.

Courses

Kaggle has a newly updated courses page that covers a lot of introductory topics.

HuggingFace created a new course on its set of libraries and how they can be used to solve various machine learning problems. To nobody's surprise, the main theme of the course is Transformers. It supports both PyTorch and TensorFlow.

Tutorials & Workshops

The CVPR 2021 tutorial Leave Those Nets Alone (self-supervised learning) is available and covers a number of advancements in self-supervised learning. There is an accompanying website with different sections as well.

CVPR 2021 Computational Photography has a great set of talks and tutorials on computational photography and its research advancements. The slides for one of the talks, on denoising, are available here.

Energy-based learning from Yann LeCun is a good tutorial on energy-based learning in the context of self-supervised learning. The slides are also available here. There is a similar session here as well.

TensorFlow published a good introductory tutorial on graph neural networks here.

Interpretable Vision has a great set of tutorials available here. It covers both model explainability and vision use cases.

Open World Vision covers how vision datasets and algorithms can be made to work for real-world use cases.

Sight and Sound at CVPR 2021 covers both audio and vision applications as well as their intersection. The main website is here.

Learning from Unlabeled Videos covers self-supervised learning methods for video applications (action recognition, detection, etc.).

All about Self-Driving at CVPR 2021 covers everything related to self-driving. Both the first part and the second part are available.

Books

Principles of Deep Learning Theory is a free book that explains the mathematics behind neural networks. The accompanying blog post is here.

Libraries

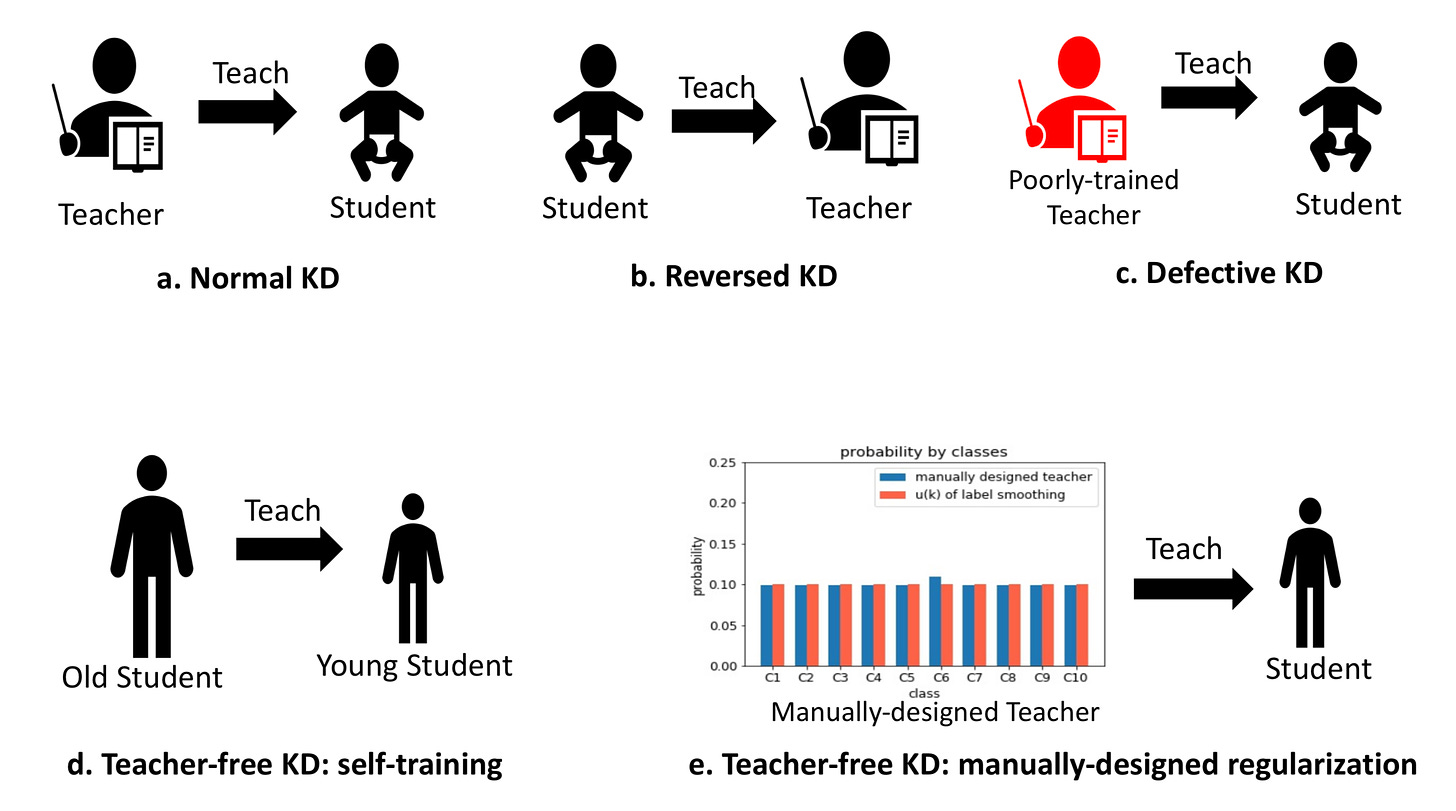

Teacher-free Knowledge Distillation shows that when a teacher network is unavailable or too expensive to train, a regularization term (a hand-designed target distribution similar to label smoothing) can play a similar role and accomplish a similar result.
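A minimal sketch of the "virtual teacher" idea: instead of a trained teacher's soft labels, the student is pulled toward a hand-designed distribution that puts probability `a` on the true class and spreads the rest uniformly. This is a simplified version, assuming a temperature of 1 and illustrative values `alpha=0.5`, `a=0.9`; the paper's exact loss (including temperature scaling) may differ, and the function names are hypothetical.

```python
import math


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    s = sum(exps)
    return [e / s for e in exps]


def virtual_teacher(num_classes, label, a=0.9):
    """Hand-designed teacher: probability a on the true class, the rest uniform."""
    rest = (1.0 - a) / (num_classes - 1)
    return [a if k == label else rest for k in range(num_classes)]


def teacher_free_kd_loss(logits, label, alpha=0.5, a=0.9):
    """(1 - alpha) * cross-entropy + alpha * KL(virtual teacher || student)."""
    p = softmax(logits)
    ce = -math.log(p[label])
    q = virtual_teacher(len(logits), label, a)
    kl = sum(qk * math.log(qk / pk) for qk, pk in zip(q, p) if qk > 0)
    return (1 - alpha) * ce + alpha * kl


print(teacher_free_kd_loss([2.0, 0.5, -1.0], label=0))
```

When the student's softmax already matches the virtual teacher, the KL term vanishes and only the usual cross-entropy remains, which is why the method behaves like a tunable form of label smoothing.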

AutoGL, from Tsinghua University, is an AutoML solution on PyTorch for graph neural networks and for graphs more broadly.

WebDataset is a dataset implementation for PyTorch that enables efficient data consumption in training loops.
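WebDataset stores samples as files inside tar shards, where files sharing a basename (for example `000.txt` and `000.cls`) form one sample, so a training loop can read each shard sequentially instead of doing random file access. The sketch below reproduces that layout with only the standard library's `tarfile`; it is not WebDataset's API, `write_shard`/`read_shard` are hypothetical helpers, and it assumes keys contain no dots.

```python
import io
import tarfile


def write_shard(fileobj, samples):
    """samples: list of (key, {extension: bytes}); each field becomes a key.ext member."""
    with tarfile.open(fileobj=fileobj, mode="w") as tar:
        for key, fields in samples:
            for ext, data in fields.items():
                info = tarfile.TarInfo(name=f"{key}.{ext}")
                info.size = len(data)
                tar.addfile(info, io.BytesIO(data))


def read_shard(fileobj):
    """Stream members in order and regroup consecutive files that share a basename."""
    key, sample = None, {}
    with tarfile.open(fileobj=fileobj, mode="r") as tar:
        for member in tar:
            if not member.isfile():
                continue
            base, ext = member.name.split(".", 1)
            if key is not None and base != key:
                yield key, sample  # previous sample is complete
                sample = {}
            key = base
            sample[ext] = tar.extractfile(member).read()
    if key is not None:
        yield key, sample


buf = io.BytesIO()
write_shard(buf, [("000", {"txt": b"hello", "cls": b"1"}),
                  ("001", {"txt": b"world", "cls": b"0"})])
buf.seek(0)
for key, sample in read_shard(buf):
    print(key, sample)
```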

Qdrant is a vector similarity search library with filtering support. Along with new companies like Jina.ai and other libraries like FAISS, this area is still seeing new entrants, which is great. Vector similarity search over embeddings is far from a solved problem, and it will take many years to reach the maturity that current databases enjoy.
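"Similarity search with filtering" boils down to ranking vectors by similarity to a query while only considering points whose metadata (payload) satisfies some conditions. The brute-force sketch below shows the semantics; it is not Qdrant's API, `filtered_search` is a hypothetical helper, and real systems replace the linear scan with approximate nearest-neighbour indexes (Qdrant, for instance, builds on HNSW).

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def filtered_search(query, points, top_k=2, **conditions):
    """points: list of (id, vector, payload). Keep only payloads matching every condition,
    then return the ids of the top_k most cosine-similar vectors."""
    candidates = [(pid, vec) for pid, vec, payload in points
                  if all(payload.get(k) == v for k, v in conditions.items())]
    scored = sorted(((cosine(query, vec), pid) for pid, vec in candidates), reverse=True)
    return [pid for _, pid in scored[:top_k]]


points = [
    (1, [1.0, 0.0], {"lang": "en"}),
    (2, [0.9, 0.1], {"lang": "de"}),
    (3, [0.0, 1.0], {"lang": "en"}),
    (4, [0.7, 0.7], {"lang": "en"}),
]
print(filtered_search([1.0, 0.0], points, top_k=2, lang="en"))
```

Part of what makes this area hard is that filtering and approximate indexes interact: a strict filter can remove most of the graph neighbours an index relies on.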

Facebook open-sourced Kats, which targets everything time-series related: forecasting, outlier/anomaly detection, and regression detection. It has a number of good notebooks that show some of this functionality.
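To illustrate what an outlier detector does at its simplest, here is a global z-score rule in plain Python. This is not Kats' API and is far cruder than its detectors; `zscore_outliers` and the threshold value are illustrative assumptions.

```python
import statistics


def zscore_outliers(series, threshold=3.0):
    """Indices of points more than `threshold` population standard deviations from the mean."""
    mean = statistics.mean(series)
    stdev = statistics.pstdev(series)
    if stdev == 0:
        return []  # a constant series has no outliers under this rule
    return [i for i, v in enumerate(series) if abs(v - mean) / stdev > threshold]


series = [10, 11, 9, 10, 10, 11, 9, 10, 50, 10]
print(zscore_outliers(series, threshold=2.5))
```

Note that the spike at index 8 inflates the standard deviation itself, so a threshold of 3 would miss it in a series this short; that masking effect is one reason practical libraries use more robust or windowed methods.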

Fairness Indicators is an open-source library from Google that can be part of TensorFlow Extended (TFX) to report various fairness metrics during model evaluation for binary and multiclass classification tasks.
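The central idea behind such fairness reporting is sliced evaluation: compute the same metric separately for each group and compare. The sketch below computes false positive and false negative rates per group for a binary classifier in plain Python; it is not the Fairness Indicators API, and `rates_by_group` is a hypothetical helper.

```python
from collections import defaultdict


def rates_by_group(y_true, y_pred, groups):
    """Per-group false positive rate and false negative rate for binary labels."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        key = ("tp" if p else "fn") if t else ("fp" if p else "tn")
        counts[g][key] += 1
    result = {}
    for g, c in counts.items():
        neg, pos = c["fp"] + c["tn"], c["tp"] + c["fn"]
        result[g] = {
            "fpr": c["fp"] / neg if neg else None,  # None when a group has no negatives
            "fnr": c["fn"] / pos if pos else None,  # None when a group has no positives
        }
    return result


print(rates_by_group([1, 0, 0, 1, 0, 0], [1, 1, 0, 0, 0, 0], ["a", "a", "a", "b", "b", "b"]))
```

A large gap between groups (here group `a` gets all the false positives and group `b` all the false negatives) is exactly the kind of disparity these reports are meant to surface.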

Notebooks

HuggingFace has a notebook that shows how you can train a Transformer using JAX on GCP with TPUs (Tensor Processing Units).

Gradient Centralization for Better Training Performance is a nice tutorial/notebook that shows how to apply gradient centralization. It is based on a paper showing that centralizing gradients improves training performance.
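Gradient centralization subtracts, for each output neuron, the mean of its weight-gradient vector, so each neuron's gradient has zero mean before the optimizer step: $\Phi(\nabla w_i) = \nabla w_i - \mu_{\nabla w_i}$. The sketch below assumes a fully connected layer whose gradient is stored as one row per output neuron; for convolutions, the mean would be taken over all input-channel and kernel dimensions of each filter. `centralize_gradient` is a hypothetical helper, not the notebook's code.

```python
def centralize_gradient(grad):
    """grad: weight gradient as a list of rows, one row per output neuron.
    Returns a copy where every row has zero mean."""
    centered = []
    for row in grad:
        mean = sum(row) / len(row)
        centered.append([g - mean for g in row])
    return centered


grad = [[1.0, 2.0, 3.0], [4.0, 4.0, 4.0]]
print(centralize_gradient(grad))  # the optimizer would then use these values
```

The operation is cheap (one mean per neuron) and needs no extra hyperparameters, which is why it can be dropped into an existing optimizer with a few lines of code.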

DETR (Detection Transformer) has a nice notebook on how to apply a pretrained model.

Fine-tuning Vision Transformers has a notebook that explains how to fine-tune a pretrained Vision Transformer.

A good notebook that shows Graph Neural Networks in PyTorch is available here.
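Most GNN layers follow the same message-passing pattern: each node aggregates its neighbours' features, then applies a learned transformation and a nonlinearity. Below is a plain-Python sketch of one mean-aggregation layer with self-loops and ReLU, a simplified variant in the spirit of GCN/GraphSAGE rather than the notebook's model; `gnn_layer` and its inputs are hypothetical.

```python
def gnn_layer(features, edges, weight):
    """features: {node: vector}; edges: undirected (u, v) pairs; weight: out_dim x in_dim."""
    neighbors = {n: [n] for n in features}  # self-loop keeps each node's own features
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)
    out = {}
    for node, nbrs in neighbors.items():
        dim = len(features[node])
        # Aggregate: element-wise mean over the node and its neighbours.
        agg = [sum(features[n][k] for n in nbrs) / len(nbrs) for k in range(dim)]
        # Update: linear transform followed by ReLU.
        out[node] = [max(0.0, sum(w * a for w, a in zip(row, agg))) for row in weight]
    return out


features = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
print(gnn_layer(features, edges=[(0, 1)], weight=[[1.0, 0.0], [0.0, 1.0]]))
```

Stacking k such layers lets information flow k hops across the graph.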

Videos

The ICLR 2021 keynote on Geometric Deep Learning is a must-watch.

The paper is available here, and there is also an accompanying post here. The website with all of this information and these links is here.

Andrej Karpathy gave a very interesting talk on self-driving.

Mark Riedl also gave a talk on creativity at CVPR.

All of the ICLR 2021 videos became available: https://slideslive.com/iclr-2021