Apple releases a new deep learning library: MLX

Google releases StyleDrop, Terence Tao suggests we should embrace GenAI's imperfections

Finally, Apple also releases a deep learning neural network library called MLX, the API resembles quite a bit to PyTorch and Keras and almost you can use drop in modules to rewrite your code against MLX if you have a PyTorch model. The advantage of this library over others, it can optimize the neural network model against Apple silicon separately and able to optimize the low level details for HW. They have a number of different examples and I strongly recommend you to check it out:

A sample Transformer model looks like in the following:

class TransformerLM(nn.Module):

def __init__(self,

vocab_size: int,

num_layers: int,

dims: int,

num_heads: int):

super().__init__()

self.embedding = nn.Embedding(vocab_size, dims)

self.transformer = nn.TransformerEncoder(num_layers, dims, num_heads)

self.out_proj = nn.Linear(dims, vocab_size)

def __call__(self, x):

mask = nn.MultiHeadAttention.create_additive_causal_mask(x.shape[1])

x = self.embedding(x)

x = self.transformer(x, mask)

return self.out_proj(x)

def loss(self, x, y, reduce=True):

logits = self(x)

losses = nn.losses.cross_entropy(logits, y)

mx.simplify(losses)

return mx.mean(losses) if reduce else mx.mean(losses, axis=(-1, -2))

The imports are also very similar to PyTorch:

import mlx.core as mx

import mlx.nn as nn

import mlx.optimizers as optim

It is available in pip and you can install the library through `pip install mlx`.

Articles

Google published a blog post on how they are using ML to advance ML technologies mostly in the ML system design.

They cover mainly 3 areas:

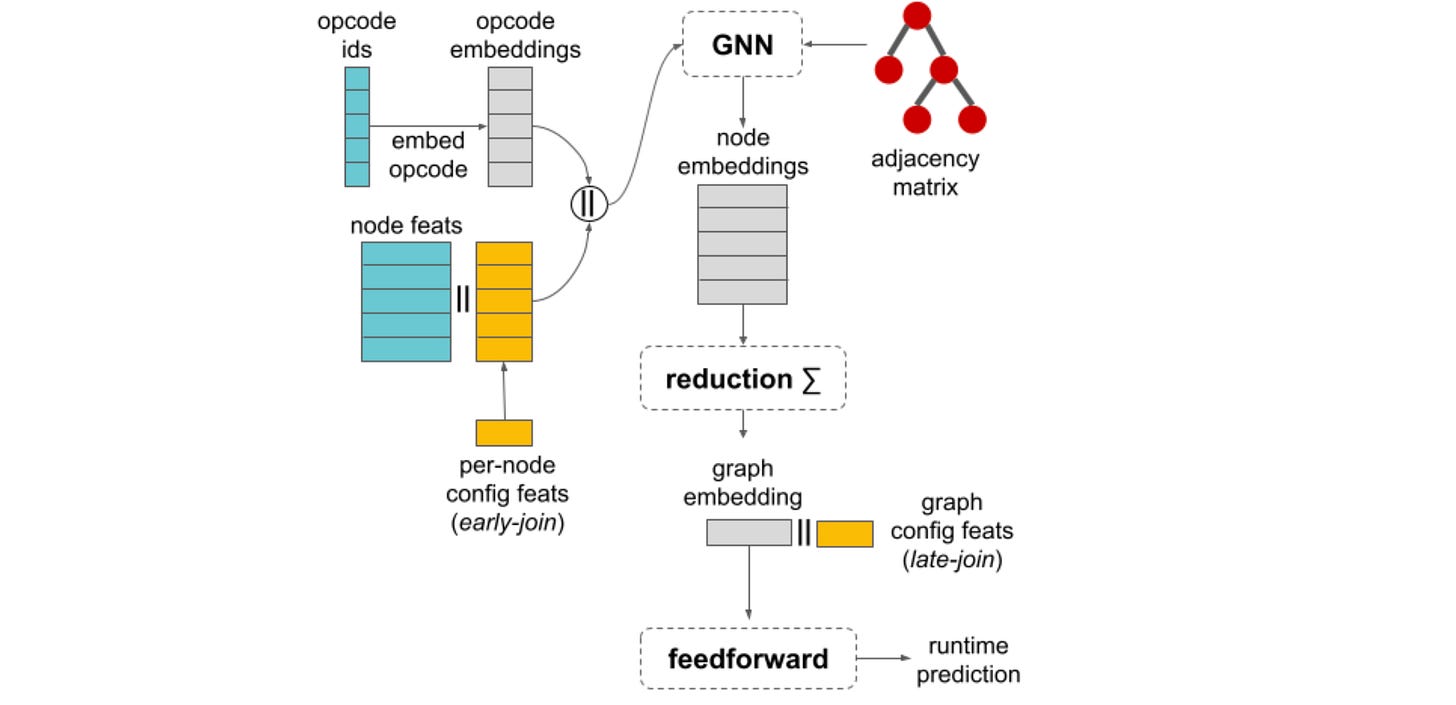

Compiler Optimization: One way to improve efficiency is to use a machine learning compiler to optimize the code for a specific hardware platform. However, traditional compilers often rely on heuristics that can lead to suboptimal performance.

Performance Estimation of ML Flows: They introduce a new dataset called TpuGraphs, which is designed to help train machine learning models to predict the performance of other machine learning programs. The dataset includes a variety of different machine learning programs, as well as the execution times for those programs on different hardware platforms. This is a very interesting idea as it can optimize the existing ML training loops in a way that some of the heuristics and rule based approach cannot improve upon.

Better Graph Optimization: The authors also present a new method called Graph Segment Training, which can be used to train machine learning models on large graphs. This method can be very useful because many machine learning programs can be represented as graphs with all of the data and training loops itself.

Google wrote a post on StyleDrop which is a new text-to-image generation method, allows users to produce high-quality images in a wide range of styles.

It has the following properties:

Versatile: Styledrop can generate images in a wide range of styles, from photorealistic to painter.

Diverse: It can create images of different sizes, enabling users to generate everything from small icons to large artwork.

Fast: Styledrop is remarkably fast, making it convenient for users to experiment with different styles.

Versatile: It supports a variety of image formats, including JPEG, PNG, and GIF.

It has main four components; Text Encoder, Style Encoder, Diffusion Model and Latent Diffusion. Text encoder converts text prompts into numerical representations. Style encoder encodes the desired style from an example image. Diffussion model iteratively generates images based o the encoded text and style. Latent Diffusion allows the image to be gradually refined while maintaining coherence with the text and style.

More styles and different prompts can be found in the project’s website as well.

Terence Tao, a very famous mathematician, wrote a rather interesting article in Microsoft’s blog. He discusses the impact of generative AI tools, such as GPT-4, on various aspects of our lives, including research, education, and personal interactions. He discusses that these tools will require us to recalibrate our expectations about how we interact with technology. He suggests that we should abandon the idea that AI tools will always produce perfect outputs, and instead learn to accept and even embrace their imperfections. Tao also highlights the potential benefits of using AI tools in creative and collaborative ways.

Libraries

Machine learning approaches are often trained and evaluated with datasets that require a clear separation between positive and negative examples. This approach overly simplifies the natural subjectivity present in many tasks and content items. It also obscures the inherent diversity in human perceptions and opinions. Often tasks that attempt to preserve the variance in content and diversity in humans are quite expensive and laborious. To fill in this gap and facilitate more in-depth model performance analyses Google publishes the DICES dataset - a unique dataset with diverse perspectives on safety of AI generated conversations.

Apple releases a new loss based on attention which is to solve the challenge of transformers being difficult to train. They study the training stability of Transformers by proposing a novel lense named

Attention Entropy Collapse. Training instability often occurs in conjunction with sharp decreases of the average attention entropy, and they denote this phenomenon as entropy collapse. If you are training transformers and have a lot of instability issues, it is worthwhile to check it out.

AI Tomago 🥚🐣

An 100% local, LLM-generated and driven virtual pet with thoughts, feelings and feedback. Revive your fond memories of Tamagotchi!

DoWhy provides a wide variety of algorithms for effect estimation, prediction, quantification of causal influences, diagnosis of causal structures, root cause analysis, interventions and counterfactuals. A key feature of DoWhy is its refutation and falsification API that can test causal assumptions for any estimation method, thus making inference more robust and accessible to non-experts.

DoWhy supports the following causal tasks:

Effect estimation (identification, average causal effect, conditional average causal effect, instrumental variables and more)

Quantify causal influences (mediation analysis, direct arrow strength, intrinsic causal influence)

What-if analysis (generate samples from interventional distribution, estimate counterfactuals)

Root cause analysis and explanations (attribute anomalies to their causes, find causes for changes in distributions, estimate feature relevance and more)