A new Giant LLM from Inflection (5K H100 GPUs)

Regulation of Foundation Models, Evaluation of Foundation Models

Articles

Inflection announced a new giant LLM called Inflection-2:

Inflection-2 was trained on 5,000 NVIDIA H100 GPUs in fp8 mixed precision for ~10²⁵ FLOPs. This puts it into the same training compute class as Google’s flagship PaLM 2 Large model, which Inflection-2 outperforms on the majority of the standard AI performance benchmarks, including the well known MMLU, TriviaQA, HellaSwag & GSM8k.

I talked a bit about the hardware (HW) dimension of the moat around foundation models in the following post:

OpenAI Keynote and Some Thoughts on Foundation Models

The OpenAI keynote was one of the biggest news items in the AI world, and unless you are living under a rock, you must have heard about it or, hopefully, watched it. If you have not, I recommend watching it.

Inflection's announcement only reinforces that the way to get a differentiated model is to throw more hardware at it: in this case, 625 H100 machines, or 5K GPUs. It also suggests that AI will increasingly be a compute/HW play rather than a software one, since model performance on most tasks appears to be linearly correlated with the compute spent on the model itself. If this trend continues, I would expect the HW that unlocks the most compute for a given MW investment to be the most profitable of all the dimensions (HW, modeling, and data).
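To make the scale concrete, here is a rough back-of-the-envelope sketch of the numbers above. Only the total compute (~10²⁵ FLOPs) and the GPU count (5K) come from the announcement; the 8 GPUs per machine, the peak fp8 throughput per GPU, and the sustained utilization are my assumptions, so treat the resulting training time as an order-of-magnitude estimate rather than a reported figure.

```python
# Back-of-the-envelope sizing of the training run described above.

TOTAL_TRAINING_FLOPS = 1e25   # from the announcement (~10^25 FLOPs)
NUM_GPUS = 5_000              # from the announcement

# Everything below is an assumption, not a figure from the announcement.
GPUS_PER_MACHINE = 8              # assumed 8-GPU H100 servers
PEAK_FP8_FLOPS_PER_GPU = 2e15     # assumed ~2 PFLOP/s dense fp8 peak per GPU
UTILIZATION = 0.4                 # hypothetical fraction of peak actually sustained


def machine_count(num_gpus: int, gpus_per_machine: int) -> int:
    """Number of servers needed to host the GPUs."""
    return num_gpus // gpus_per_machine


def training_days(total_flops: float, num_gpus: int,
                  peak_flops_per_gpu: float, utilization: float) -> float:
    """Wall-clock days to spend total_flops at the given sustained rate."""
    cluster_flops_per_second = num_gpus * peak_flops_per_gpu * utilization
    return total_flops / cluster_flops_per_second / 86_400


if __name__ == "__main__":
    print(machine_count(NUM_GPUS, GPUS_PER_MACHINE), "machines")
    days = training_days(TOTAL_TRAINING_FLOPS, NUM_GPUS,
                         PEAK_FP8_FLOPS_PER_GPU, UTILIZATION)
    print(f"{days:.1f} days under these assumptions")
```

Under these assumptions the run takes roughly a month of wall-clock time. Halving the utilization doubles that, which is why the compute delivered per MW matters so much.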

Still, Inflection-2 has a long way to go to reach GPT-4's level, as GPT-4 still leads on most metrics across different tasks and dimensions of evaluation.

Stanford researchers wrote an article responding to government efforts to regulate "foundation models," highly capable AI models that are influential across various sectors. The article highlights initiatives by the US, UK, G7, China, and the EU to govern these models due to concerns about their potential for harm, including issues like child sexual abuse material, disinformation, and market concentration.

The concept of tiers for regulation emerges as a solution, with proposals to categorize models based on their impact on society, resources used, or evaluations of capabilities and risks. However, determining these tiers poses challenges due to the complexity of foundation models, their diverse modalities, and different release strategies.

Basis for Tiering:

Impact on Society: Some proposals suggest tiers based on the demonstrated impact of these models on society. This could include measuring the number of downstream applications using the models, their breadth of influence, or their impact on specific societal domains.

Resources Used: Another approach considers the resources utilized in building the models, such as training compute (FLOPs, the total number of floating-point operations), amount of data used, or model performance benchmarks.

Evaluations and Risks: Tiers might be established based on evaluations of the model's capabilities, risks, and safety parameters. However, current evaluation frameworks are still evolving and may not be comprehensive enough for robust tiering.

Complexity and Modalities:

Modalities: Foundation models vary in their input-output modalities (text-to-text, text-to-image, multimodal, etc.), complicating uniform thresholds for tiers.

Release Strategies: The way models are released (open-source, through APIs, fully closed, etc.) also influences their impact and complicates tier delineation.

Challenges and Considerations:

Compute-Based Tiers: Using computational power alone as a basis for tiers is cautioned against due to its limited correlation with societal impact and the diverse nature of models across different modalities.

Demonstrated Impact: While prioritizing impact for tiering seems logical, accurately measuring this impact remains a challenge. Governments lack mechanisms to track downstream usage and societal effects of these models effectively.

Hybrid Approaches and Adaptability:

Hybrid Strategies: Combining multiple criteria or tiering approaches might create a more comprehensive regulatory framework, balancing different factors for tier delineation.

Adaptability: Considering the rapid evolution of AI technology, tiers need to be regularly updated to remain effective and relevant. Agencies should be empowered to revise tiers and incorporate new technical expertise.
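As an illustration of what a hybrid, adaptable tiering rule could look like, here is a minimal sketch. It is not taken from the article: the `ModelProfile` fields, the threshold values, and the "highest tier wins" rule are all hypothetical, chosen only to show how several criteria can be combined and how thresholds can be kept as data that an agency could revise.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical summary of a foundation model for tiering purposes."""
    training_flops: float      # total floating-point operations used in training
    downstream_apps: int       # number of known downstream applications
    risk_score: float          # hypothetical evaluation score in [0, 1]


# Hypothetical thresholds (lower bound of tier 1, lower bound of tier 2).
# Keeping them as plain data makes them easy to revise over time.
COMPUTE_THRESHOLDS = (1e24, 1e26)
IMPACT_THRESHOLDS = (100, 10_000)
RISK_THRESHOLDS = (0.3, 0.7)


def tier_for(value: float, thresholds: tuple) -> int:
    """Count how many thresholds the value meets or exceeds (0, 1, or 2)."""
    return sum(value >= t for t in thresholds)


def hybrid_tier(model: ModelProfile) -> int:
    """Assign the highest tier triggered by any single criterion."""
    return max(
        tier_for(model.training_flops, COMPUTE_THRESHOLDS),
        tier_for(model.downstream_apps, IMPACT_THRESHOLDS),
        tier_for(model.risk_score, RISK_THRESHOLDS),
    )
```

With this rule, a small model that is used by many downstream applications still lands in a high tier, which is the kind of case a compute-only threshold would miss.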

Need for Consultation:

Stakeholder Involvement: Engaging various stakeholders, including civil society, academia, and industry, is crucial for developing regulations that serve public interests effectively and credibly.

The post rejects simplistic approaches like basing tiers solely on computational power due to its limited correlation with societal impact. Instead, it suggests evaluating models based on demonstrated impact, like the number of downstream applications using these models, although accurately measuring this impact remains challenging.

A proposal involves creating public databases to track the usage and impact of foundation models. The EU's AI Act may incorporate a requirement for registration, linking high-risk AI systems to the foundation models they depend on, providing a potential framework for assessing impact.

It also encourages hybrid approaches that combine multiple tiering strategies for a more comprehensive regulatory framework. It emphasizes the need for consultation with various stakeholders to establish regulations that serve the public interest effectively.

Sequoia wrote an article on some of the challenges that new AI brings to the industry. The article evaluates these challenges through a set of dichotomies, delving into the dual nature of AI: its potential and the challenges it presents.

AI's Potential vs. Reality:

AI presents an ambitious promise of transforming industries, optimizing processes, and revolutionizing how businesses operate. However, the translation of this promise into reality often faces hurdles. Challenges in deployment, scalability, and the quality of available data can limit AI's effectiveness. Businesses encounter obstacles in implementing AI solutions due to complexities in integration, the need for specialized talent, and concerns regarding the tangible return on investment.

Ethical and Societal Implications:

AI algorithms can inadvertently perpetuate biases present in the data they are trained on, leading to unfair outcomes in decision-making processes. The ethical considerations around AI extend to privacy concerns arising from the collection and utilization of vast amounts of data. There's a growing need for frameworks and practices that ensure fairness, transparency, and accountability in AI systems to mitigate biases and address privacy concerns.

AI's Role in Decision-Making:

The discussion around AI often revolves around striking a balance between augmenting human decision-making capabilities and the potential for full automation. Transparency in AI systems is crucial, allowing humans to understand, interpret, and trust the decisions made by algorithms. This transparency ensures accountability and facilitates collaboration between human and machine intelligence.

Business Implications:

While businesses acknowledge the potential of AI to drive innovation and efficiency, integrating AI into existing operations poses challenges. Many companies grapple with the complexities of implementation, including the need for specialized talent, initial costs, and uncertainties around the immediate return on investment. Taking a long-term strategic view becomes imperative, considering AI adoption as a transformative journey rather than expecting immediate and visible returns.

Regulatory Landscape:

The evolving regulatory landscape surrounding AI introduces both challenges and opportunities for businesses. The regulations aim to address concerns related to data privacy, algorithmic transparency, and ethical guidelines. Navigating these regulations while leveraging AI's potential requires a proactive approach from businesses to ensure compliance and ethical use of AI technologies.

Collaboration and Education:

Industry collaboration plays a pivotal role in addressing common challenges faced in AI development. Sharing best practices, insights, and resources enables a collective effort to advance responsible AI. Furthermore, investing in education and awareness programs for businesses, policymakers, and the general public is crucial. These initiatives foster a better understanding of AI's capabilities, limitations, and ethical considerations, promoting its responsible and beneficial use.

Libraries

C++ implementation of ChatGLM-6B, ChatGLM2-6B, ChatGLM3-6B and more LLMs for real-time chatting on your MacBook.

This repo includes a reference implementation of the DPO algorithm for training language models from preference data, as described in the paper Direct Preference Optimization: Your Language Model is Secretly a Reward Model.
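For intuition, here is a minimal sketch of the per-example DPO loss from the paper, written in plain Python rather than taken from the repo. The function and argument names are mine, and `beta=0.1` is only an illustrative default. The inputs are the summed log-probabilities of the chosen and rejected responses under the policy being trained and under the frozen reference model.

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Per-example DPO loss: -log sigmoid(beta * (chosen margin - rejected margin))."""
    # How much more the policy likes each response than the reference does.
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_logratio - rejected_logratio)
    # -log(sigmoid(z)) equals softplus(-z).
    return softplus(-logits)
```

When the policy equals the reference model the loss is log 2, and it decreases as the policy shifts probability toward the chosen response relative to the rejected one.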

🔨ToolLLM aims to construct open-source, large-scale, high-quality instruction tuning SFT data to facilitate the construction of powerful LLMs with general tool-use capability. It empowers open-source LLMs to master thousands of diverse real-world APIs by collecting a high-quality instruction-tuning dataset. The dataset is constructed automatically using the latest ChatGPT (gpt-3.5-turbo-16k), which is upgraded with enhanced function call capabilities. They provide the dataset, the corresponding training and evaluation scripts, and a capable model, ToolLLaMA, fine-tuned on ToolBench.

functime is a powerful Python library for production-ready global forecasting and time-series feature extraction on large panel datasets.

functime also comes with time-series preprocessing (box-cox, differencing etc), cross-validation splitters (expanding and sliding window), and forecast metrics (MASE, SMAPE etc). All optimized as lazy Polars transforms.
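To show what these pieces compute, here is a small plain-Python sketch of SMAPE, MASE, and an expanding-window splitter. This is not functime's API; the function names and signatures are my own, and the SMAPE convention used here (a fraction between 0 and 2, not a percentage) is only one of several in use.

```python
def smape(y_true, y_pred):
    """Symmetric MAPE as a fraction: mean of 2|y - f| / (|y| + |f|)."""
    terms = []
    for actual, forecast in zip(y_true, y_pred):
        denom = abs(actual) + abs(forecast)
        terms.append(2 * abs(actual - forecast) / denom if denom > 0 else 0.0)
    return sum(terms) / len(terms)


def mase(y_true, y_pred, y_train, season=1):
    """Forecast MAE scaled by the in-sample MAE of a (seasonal) naive forecast."""
    mae = sum(abs(a - f) for a, f in zip(y_true, y_pred)) / len(y_true)
    naive_errors = [abs(y_train[i] - y_train[i - season])
                    for i in range(season, len(y_train))]
    return mae / (sum(naive_errors) / len(naive_errors))


def expanding_window_splits(n_obs, initial_train, horizon):
    """Yield (train_indices, test_indices); the training window grows each fold."""
    start = initial_train
    while start + horizon <= n_obs:
        yield range(0, start), range(start, start + horizon)
        start += horizon
```

A MASE below 1 means the forecast beats the naive baseline on average. A sliding-window splitter would differ only in moving the start of the training range forward instead of keeping it at 0.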

GraphStorm is a graph machine learning (GML) framework for enterprise use cases. It simplifies the development, training and deployment of GML models for industry-scale graphs by providing scalable training and inference pipelines for extremely large graphs (measured in billions of nodes and edges). GraphStorm provides a collection of built-in GML models, and users can train a GML model with a single command without writing any code. To help develop SOTA models, GraphStorm provides a large collection of configurations for customizing model implementations and training pipelines to improve model performance. GraphStorm also provides a programming interface to train any custom GML model in a distributed manner. Users provide their own model implementations and use the GraphStorm training pipeline to scale.

DiffSeg is an unsupervised zero-shot segmentation method using attention information from a stable-diffusion model. This repo implements the main DiffSeg algorithm and additionally includes an experimental feature to add semantic labels to the masks based on a generated caption.

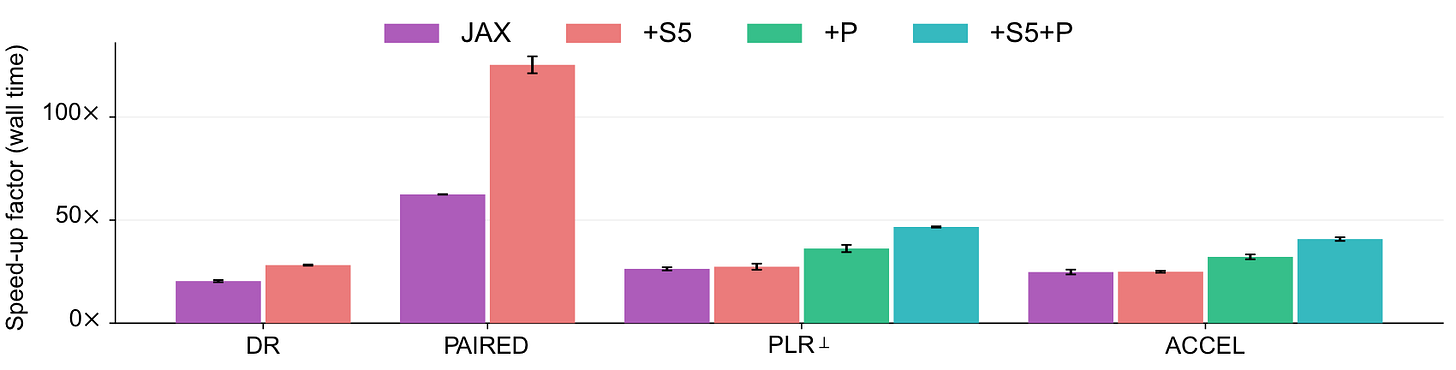

Unsupervised Environment Design (UED) is a promising approach to generating autocurricula for training robust deep reinforcement learning (RL) agents. However, existing implementations of common baselines require excessive amounts of compute. In some cases, experiments can require more than a week to complete using V100 GPUs. This long turn-around slows the rate of research progress in autocurriculum methods. minimax provides fast, JAX-based implementations of key UED baselines, which are based on the concept of minimax regret. By making use of fully-tensorized environment implementations, minimax baselines are fully-jittable and thus take full advantage of the hardware acceleration offered by JAX. In timing studies done on V100 GPUs and Xeon E5-2698 v4 CPUs, we find minimax baselines can run over 100x faster than previous reference implementations, like those in facebookresearch/dcd.

Symphony is a framework for composing interactive machine learning interfaces with task-specific, data-driven components that can be used across platforms such as computational notebooks and web dashboards. See Symphony's documentation for more information.

Videos

Andrej Karpathy released a one-hour introductory video on LLMs:

I highly recommend watching it; it is very approachable and covers the details well from a beginner's perspective.